Fanjia Yan

@fanjia_yan

EECS @cal

ID: 1520825657541812224

01-05-2022 18:01:16

11 Tweets

55 Followers

81 Following

🌀Check out RAFT: Retrieval-Aware Fine Tuning! A simple technique to prepare data for fine-tuning LLMs for in-domain RAG, i.e., question-answering on your set of documents 📄 Exciting collaboration with Berkeley AI Research 🤝 Microsoft Azure 🤝 AI at Meta MSFT-Meta blog:

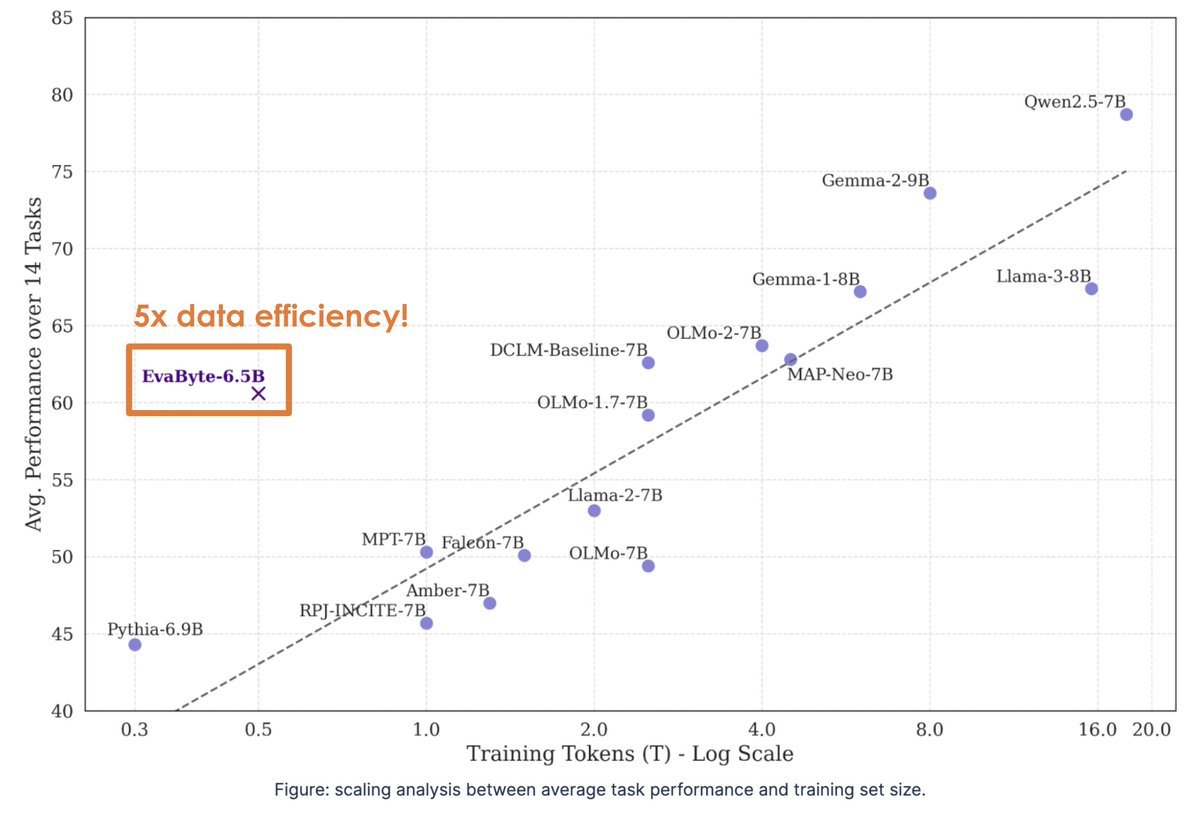

🚀Excited to announce the best open-source tokenizer-free language model! EvaByte, our 6.5B byte-level LM developed by The University of Hong Kong and SambaNova, matches modern tokenizer-based LMs (AI at Meta, Google DeepMind, Ai2, Apple) with 5x data efficiency & 2x faster decoding!