Manhin Poon

@entroshape_

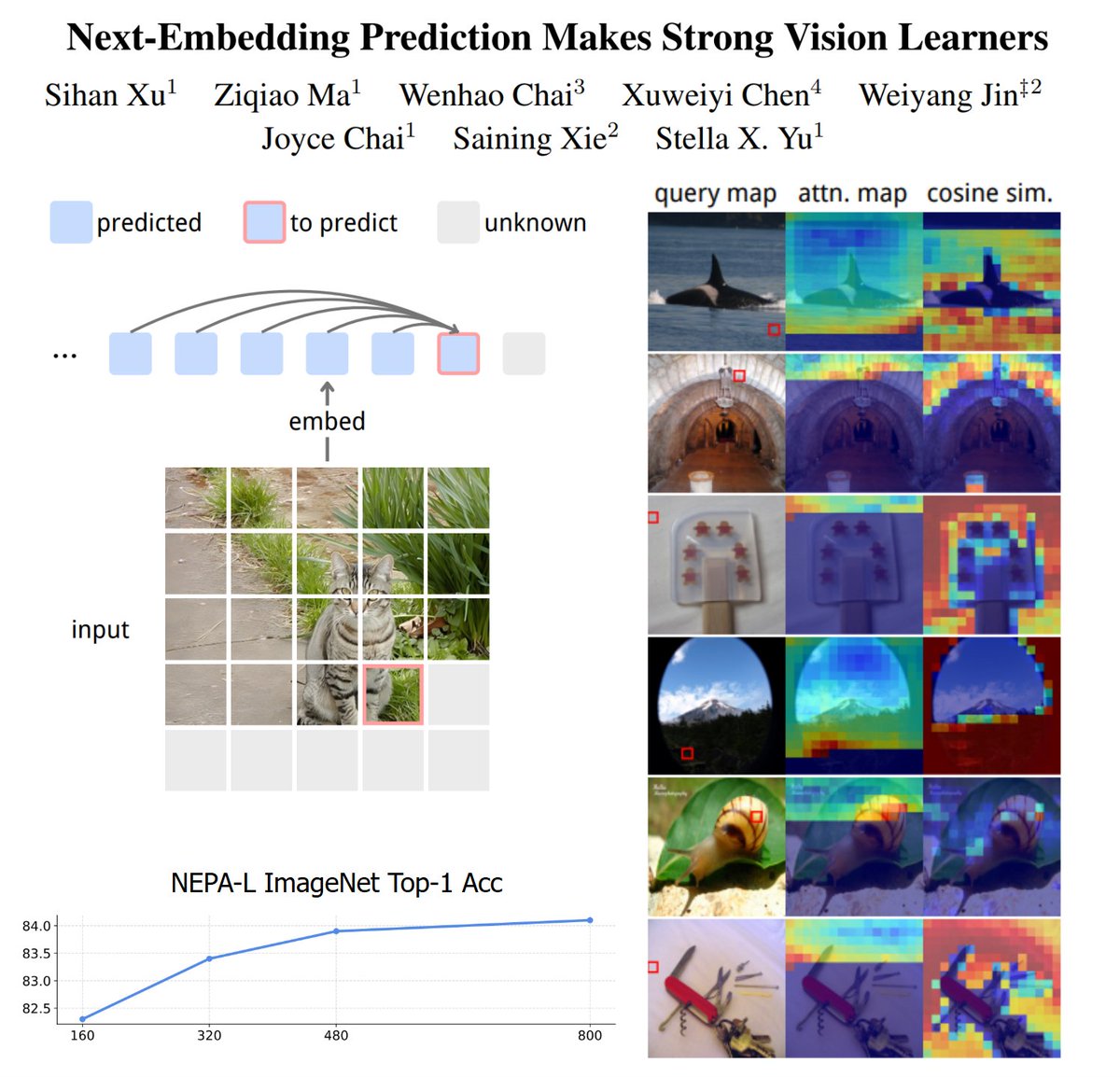

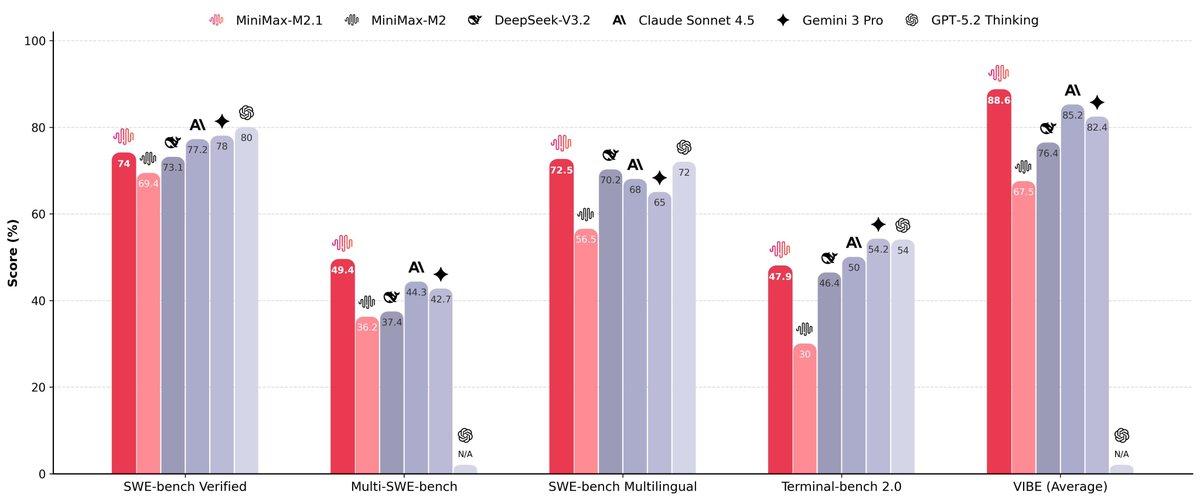

ML Theory & LLM (Agent & MLsys)

ID: 1578035067754278916

https://github.com/EntroShape

06-10-2022 14:51:12

100 Tweets

18 Followers

398 Following

Thrilled to announce that I'll be joining UIUC CS Siebel School of Computing and Data Science as an Assistant Professor in Spring 2026! 📢 I’m looking for Fall '26 PhD students who are interested in the intersection of Software Engineering and AI, especially in LLM4Code and Code Agents. Please drop me an