mukul

@mukul0x

building @theportalcorp

ID: 1258489777231237121

https://portal.so 07-05-2020 20:12:17

236 Tweets

445 Followers

525 Following

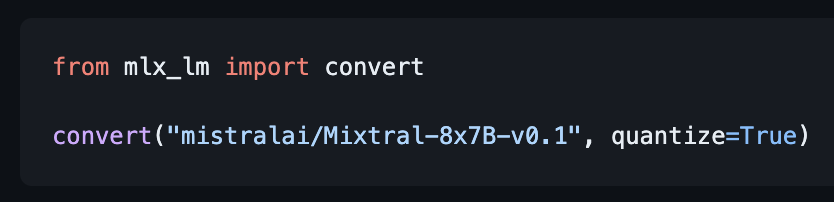

Lazy evaluation and unified memory in MLX make it super easy to quantize or merge large models on small machines. In MLX LM you can quantize Mixtral (~100GB) in < 4 mins on an 8GB M1 (h/t Angelos Katharopoulos). Example:
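The example attached to the original post is not preserved here. As a stand-in, here is a minimal sketch of what a quantized conversion with MLX LM might look like through its Python `convert` function. The helper names (`convert_kwargs`, `quantize_model`) and the 4-bit / group-size-64 settings are assumptions chosen for illustration, not taken from the post.

```python
import sys


def convert_kwargs(hf_path, mlx_path, q_bits=4, q_group_size=64):
    """Collect keyword arguments for a quantized mlx_lm.convert call.

    The 4-bit / group-size-64 defaults are illustrative assumptions.
    """
    return {
        "hf_path": hf_path,          # Hugging Face repo or local path to convert
        "mlx_path": mlx_path,        # output directory for the MLX weights
        "quantize": True,            # quantize while converting
        "q_bits": q_bits,            # bits per weight
        "q_group_size": q_group_size,  # weights sharing one scale and bias
    }


def quantize_model(hf_path, mlx_path):
    # Imported lazily so this file loads on machines without MLX installed.
    from mlx_lm import convert

    convert(**convert_kwargs(hf_path, mlx_path))


if __name__ == "__main__":
    # Only run the conversion when a source and a destination are given.
    if len(sys.argv) == 3:
        quantize_model(sys.argv[1], sys.argv[2])
```

Saved under a hypothetical name such as `quantize.py`, this could be run as `python quantize.py mistralai/Mixtral-8x7B-Instruct-v0.1 mixtral-4bit` on an Apple silicon machine with `mlx-lm` installed. The repository name is an assumed example of a Mixtral checkpoint, not necessarily the one used in the post.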

Tanay Jaipuria: And it’s gotten worse in the 6 months since we first measured. Google is now: 15 scrapes for 1 visitor. OpenAI is now: 1,200 scrapes for 1 visitor.