MIT NLP Group

@mitnlp

MIT Natural Language Processing group.

ID: 908046666980306947

http://nlp.csail.mit.edu 13-09-2017 19:16:10

29 Tweets

904 Followers

22 Following

Congratulations Tal! old account

Our #emnlp2019 paper is now on arXiv: arxiv.org/abs/1908.05267 * Extending the #FEVER (fact-checking) eval dataset to eliminate bias. * Regularizing the training to alleviate the bias. Coauthors: Darsh Shah, Serene Yeo, Daniel Filizzola, @esantus, Regina Barzilay @emnlp2019 #nlproc

Are we protected from GPT-2, #GROVER style models generating fake content? What happens if they are also used legitimately as writing assistants? Check our new report: arxiv.org/abs/1908.09805 with Roei Schuster, @Darsh71307636, Regina Barzilay. #NLProc #emnlp2019 #FakeNews #GPT2

Check out our new paper: arxiv.org/pdf/1909.13838… Automatic Fact-guided Sentence Modification. A method to automatically modify the factual information in a sentence. Joint work with old account, Prof. Regina Barzilay.

If you're at @emnlp2019, don't miss our talks: "Towards Debiasing Fact Verification Models", Wednesday 15:42 (2B), Tal Schuster and Darsh J Shah; "Working Hard or Hardly Working: Challenges of Integrating Typology into Neural Dependency Parsers", Thursday 15:30 (201A), Adam Fisch.

In our IEEE S&P paper, led by Roei Schuster, we control the embeddings of words by introducing minimal changes to the pretraining data (e.g. #Wiki edits). This #word_embeddings attack affects many downstream #NLProc tasks! Cornell Tech, MIT CSAIL. Link: arxiv.org/abs/2001.04935

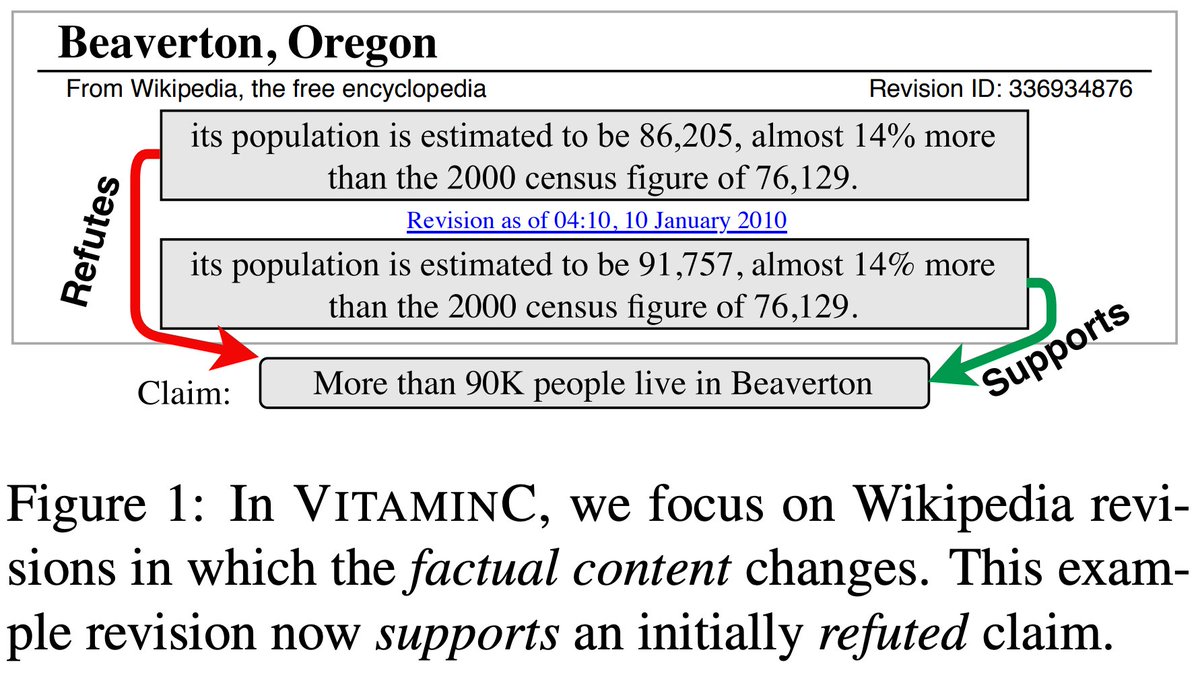

Is your Fact Verification model robust enough? Consider adding #VitaminC 🍊 Check out our new #NAACL2021 paper: "Get Your Vitamin C! Robust Fact Verification with Contrastive Evidence" with Adam Fisch and Regina Barzilay 🔗 arxiv.org/abs/2103.08541 #NLProc #FakeNews📰 🧵1/N

New #NAACL2021 paper out on robust fact verification. Sources like Wikipedia are continuously edited with the latest information. In order to keep up, our models need to be sensitive to these changes in evidence when verifying claims. Work with Tal Schuster and Regina Barzilay!

New preprint with Adam Fisch, T. Jaakkola and Regina Barzilay. We present Consistent Accelerated Inference via 𝐂onfident 𝐀daptive 𝐓ransformers (CATs). CATs can speed up inference 😺 while guaranteeing consistency 😼. The code is available 🙀 🔗 people.csail.mit.edu/tals/static/Co… #NLProc

Need to debias your new task? Learn how, from your old one. Check out our #ICML2022 paper "Learning Stable Classifiers by Transferring Unstable Features" with Shiyu Chang and Regina Barzilay Paper -> arxiv.org/abs/2106.07847 Code -> github.com/YujiaBao/tofu