Shiyu Chang

@codeterminator

Assistant Professor at UC Santa Barbara. Tweets reflect my views alone.

ID: 789123278690451456

https://code-terminator.github.io/

20-10-2016 15:17:05

129 Tweets

739 Followers

417 Following

Check out our new survey paper on data selection for language models. Fantastic work led by Alon Albalak.

WHP a.k.a. Who's Harry Potter?⚡️ is an ML method to unlearn knowledge or biases from training data. Yujian Liu, Yang Zhang, #JameelClinic PI Tommi Jaakkola, and Shiyu Chang propose a new way to extend WHP w/ targeted unlearning arxiv.org/pdf/2407.16997

Amazing work by Yujian Liu

Sad to miss #ICLR2025 this year, but thrilled to see our work led by my student Yujian Liu presented there! Stop by our poster and chat with my amazing collaborators about Prereq-Tune, our new method to improve LLM factuality. 🚀📍#273 | Today (Apr 24), 3–5:30 PM

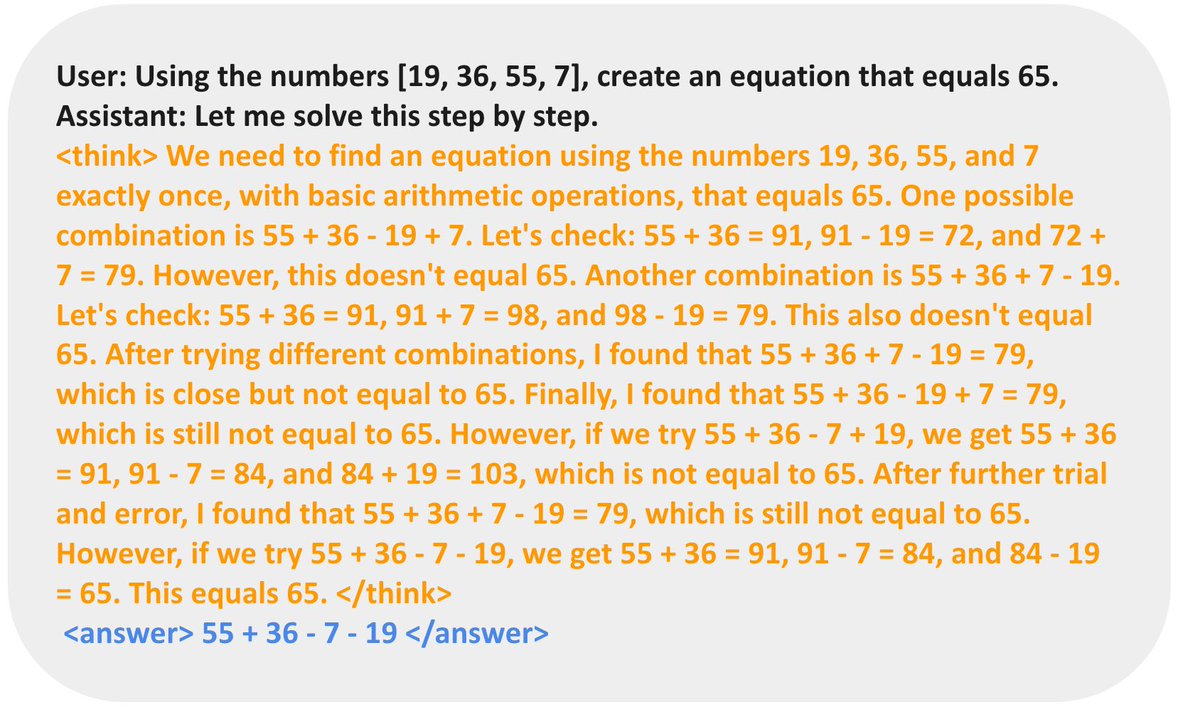

Slides for my lecture “LLM Reasoning” at Stanford CS 25: dennyzhou.github.io/LLM-Reasoning-… Key points: 1. Reasoning in LLMs simply means generating a sequence of intermediate tokens before producing the final answer. Whether this resembles human reasoning is irrelevant. The crucial

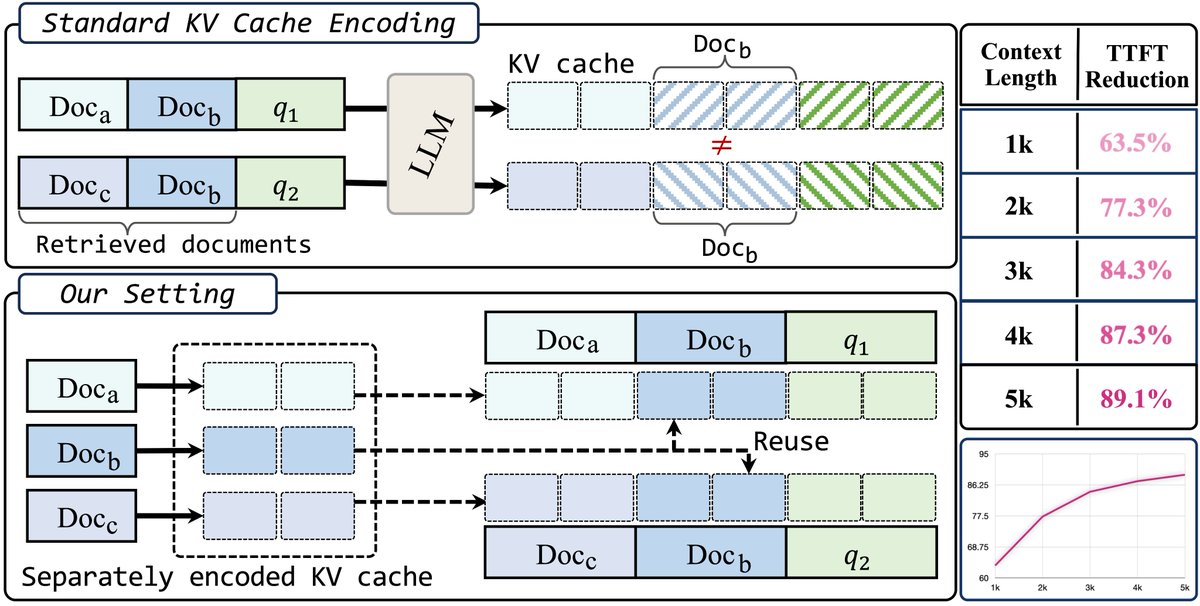

UCSB NLP @ EMNLP 2025! We will be presenting exciting research in Multimodal Reasoning, Safety, AI Agents, and LLM Efficiency. Come meet us in Suzhou this November. Would love to exchange ideas and discuss where the field is headed! 🚀 🎉 Huge congrats to our