Ariel Goldstein

@goldsteinyariel

ID: 745515944097701889

22-06-2016 07:17:06

48 Tweets

113 Followers

376 Following

We're posting this preprint alongside another Hasson Lab manuscript led by Zaid Zada and Sam Nastase where we use large language models to capture brain-to-brain linguistic coupling during dyadic conversations—check it out: doi.org/10.1101/2023.0… x.com/samnastase/sta…

In a new version of our paper (Mariano Schain, Ariel Goldstein, Hasson Lab; arxiv.org/abs/2310.07106), we show evidence that the layered hierarchy of large language models (LLMs) may be used to model the temporal dynamics of language comprehension in the brain...

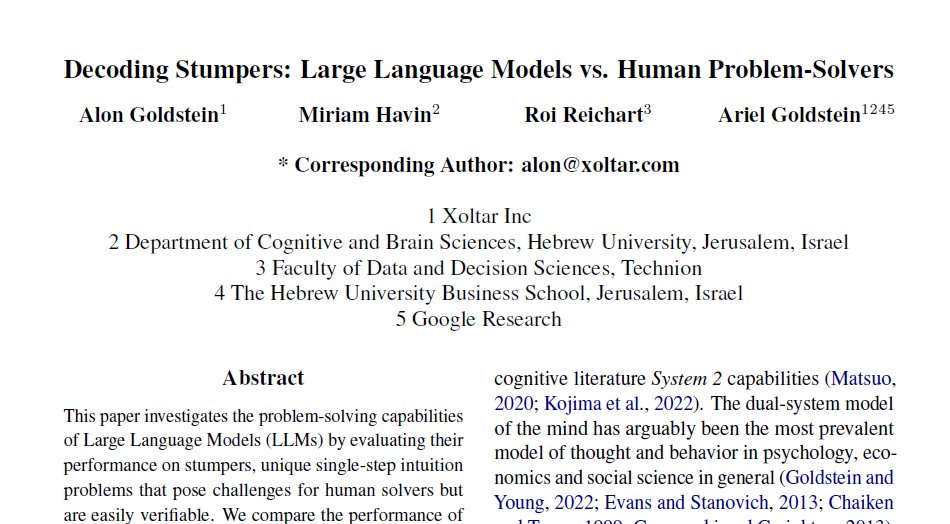

1/9: Our recent study, "Decoding Stumpers: Large Language Models vs. Human Problem-Solvers," was published at #EMNLP2023. Alon Goldstein, Miriam Havin, Roi Reichart & Ariel Goldstein

How do the inherent biases in Large Language Models impact their capacity for accurately simulating human behavior? We (yanivdover, Roi Reichart, Ariel Goldstein) try to answer this question in our new paper arxiv.org/abs/2402.04049 1/9

🧠 Can LLMs truly understand text, or are they mere 🦜? We argue that this debate implicitly revolves around the function of consciousness, an age-old question which almost by definition cannot be answered via objective measurement. arxiv.org/pdf/2403.00499… w/ Ariel Goldstein

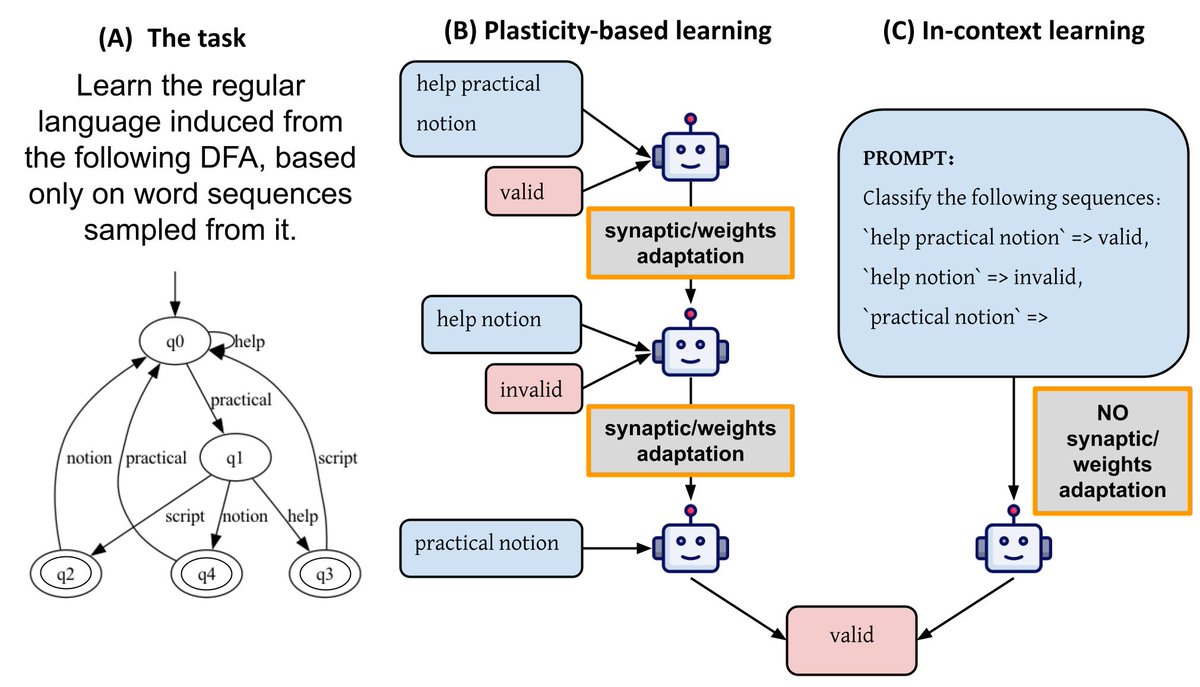

🧠🤖 Does learning in the brain inherently require plasticity? In our latest paper we question this assumption, by leveraging insights into how LLMs "learn". Check out this thread for more details! biorxiv.org/content/10.110… w/ Yuval Shalev Gabriel Stanovsky Ariel Goldstein

Check out our new LLM-based suicide research paper in Frontiers in Psychiatry!

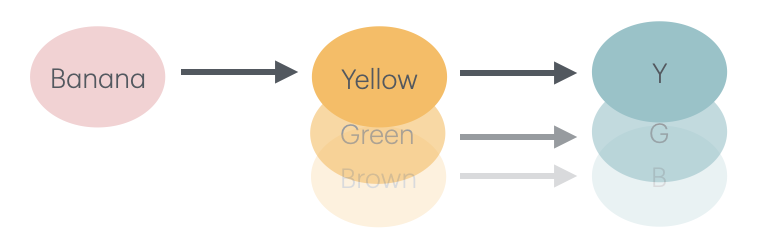

🧠🤖 How do LLMs think? What kinds of thought processes can emerge from artificial intelligence? Our latest paper on multi-hop reasoning tasks reveals some interesting new insights. Check out this thread for more details! arxiv.org/abs/2406.13858 w/ Ariel Goldstein and Amir Feder

🎯 Finally, we leverage these insights to introduce a new token-level early-exit strategy that beats existing methods in balancing performance and efficiency. More accurate predictions and faster models: win-win! Joint work with Ariel Goldstein, Tomer, and Gabriel Stanovsky 4/4

Very excited to share our new paper published in Nature Communications (link below). This work is part of my PhD research under the supervision of Roi Reichart (Technion), @HassonUri (Hasson Lab), and Ariel Goldstein, in collaboration with Yoav Meiri🇮🇱.