Deep SIML Labs

@deepsiml

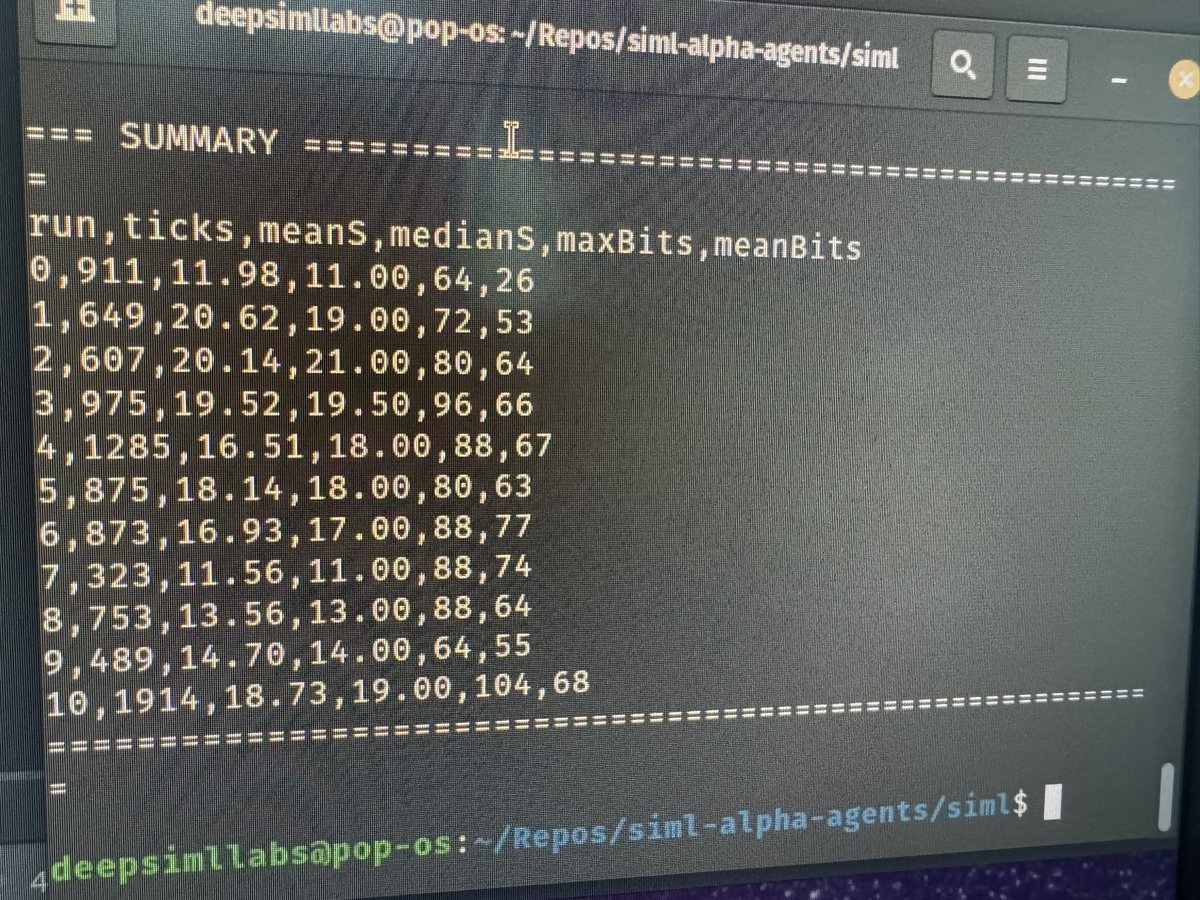

Excited for my upcoming talk at the Central PA Open Source Conference on March 28th: "How Minds Get Small: Cognitive Compression from Biology to SIML". Deep SIML Labs will have a table there showcasing our latest releases! Get tickets now if you haven’t!! eventbrite.com/e/central-penn…