Danqi7

@danqiliao73090

AI for BioScience. CS Ph.D. @ Yale.

Prev: Northwestern 18', Meta 20', Princeton 22'.

ID: 1739396171393536000

25-12-2023 21:22:38

50 Tweets

43 Followers

198 Following

Listening to Ilya Sutskever's recent chat with Dwarkesh Patel on how we are moving from the age of scaling to the age of research, I just realized that research is literally "re"-"search". If existing ideas are already-explored local minima, the job now is to search again and explore new directions.

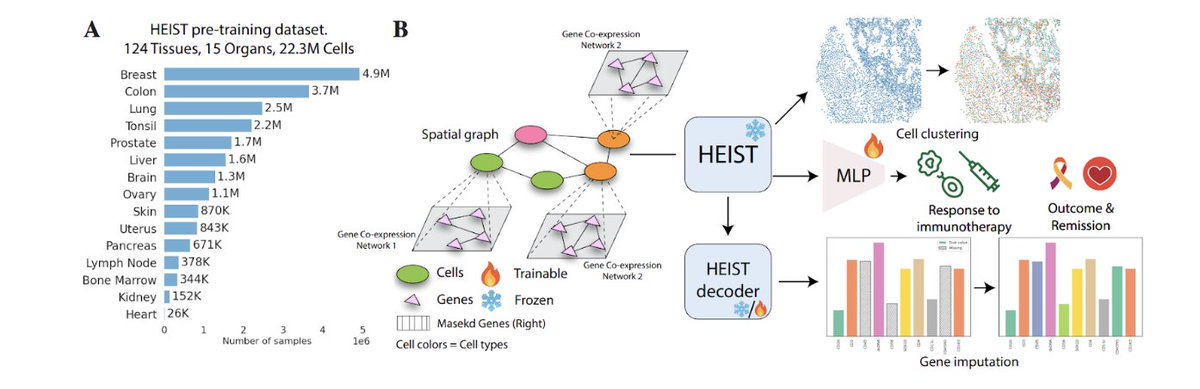

(1/n) Just in time for New Year's! #ImmunoStruct, our multimodal model that predicts class I peptide-MHC immunogenicity, is out in Nature Machine Intelligence! nature.com/articles/s4225…

The GPT-2 replication tutorial by Andrej Karpathy might be the best technical video on the internet. I watched every second. One thing that surprised me: padding the tokenizer's vocabulary size up to a "nicer" number with many factors of two (50257 to 50304 in the video) actually speeds up training. The whole speedup section is packed with gems.
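
The padding trick in that last tweet can be sketched in a few lines. This is a minimal illustration, not code from the tutorial: the helper name `pad_vocab_size` is hypothetical, and the default multiple of 128 is an assumption chosen because it takes GPT-2's 50257-token vocabulary to 50304.

```python
def pad_vocab_size(vocab_size: int, multiple: int = 128) -> int:
    """Round vocab_size up to the nearest multiple of `multiple`.

    Hypothetical helper; the multiple of 128 is an assumption,
    picked so the result has many factors of two.
    """
    if vocab_size <= 0 or multiple <= 0:
        raise ValueError("vocab_size and multiple must be positive")
    # Integer ceiling division, then scale back up.
    return ((vocab_size + multiple - 1) // multiple) * multiple


gpt2_vocab = 50257                    # GPT-2 tokenizer vocabulary size
padded = pad_vocab_size(gpt2_vocab)   # rounded up to a multiple of 128
extra_rows = padded - gpt2_vocab      # embedding rows the tokenizer never emits
print(padded, extra_rows)
```

The extra rows cost a little memory and are never indexed by real token IDs. The usual explanation for the speedup is that GPU kernels tile more cleanly over dimensions that are multiples of a power of two.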