Cody Yu

@codyhaoyu

MLSys, LLM Serving, Deep Learning Compiler

ID: 836647857490804736

https://www.linkedin.com/in/cody-hao-yu

28-02-2017 18:42:48

106 Tweets

176 Followers

27 Following

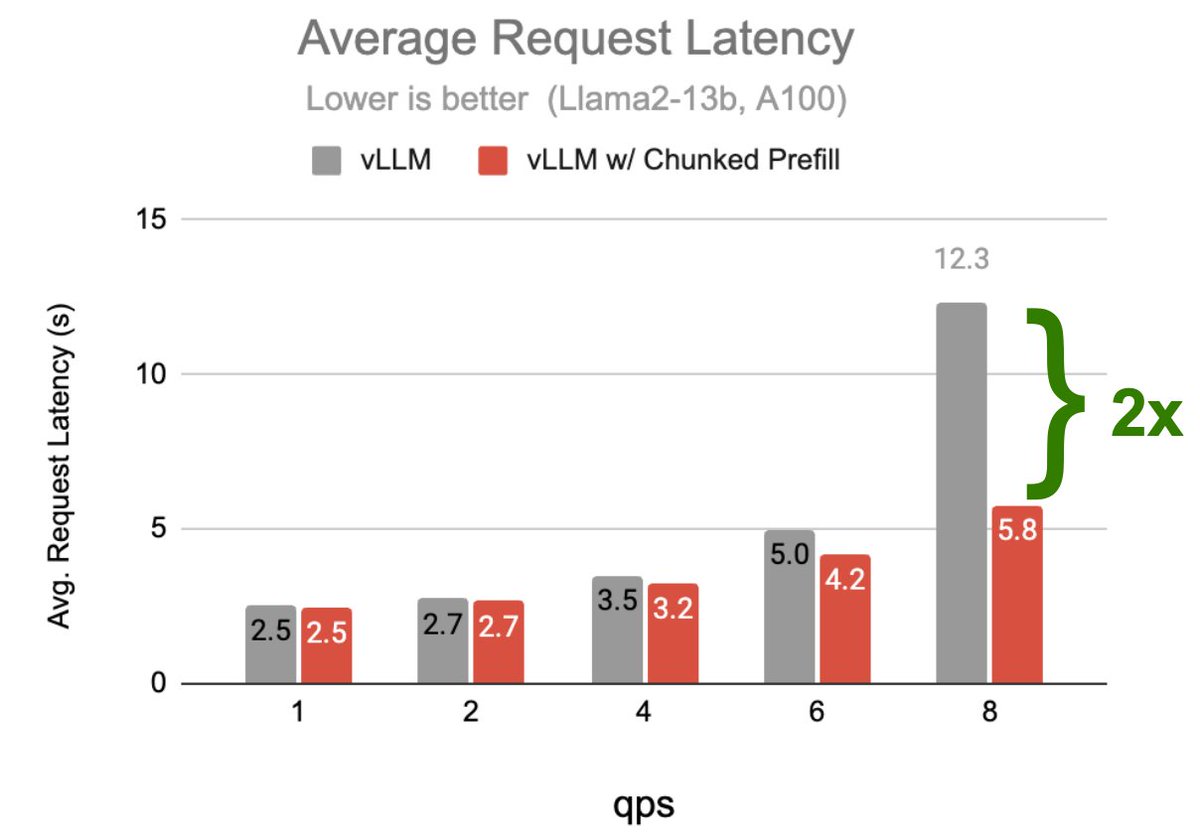

We've great projects at Anyscale, come work with us. We've shipped:
• Chunked prefill Sang Cho
• Multi-LoRA Antoni Baum
• Dynamic spec decode Lily Liu
• FP8 Cody Yu
• MoE optimization Philipp Moritz
• Ray, dist. compute framework used to train ChatGPT Robert Nishihara et al