Chris Glaze

@chris_m_glaze

Principal Research Scientist at @SnorkelAI. PhD in computational neuroscience. scholar.google.com/citations?user…

ID: 3873101457

05-10-2015 17:53:17

8 Tweets

15 Followers

29 Following

Super excited to present our new work on hybrid architecture models, which combine the best of Transformers and SSMs like Mamba, at #COLM2025! Come chat with Nicholas Roberts at poster session 2 on Tuesday. Thread below! (1)

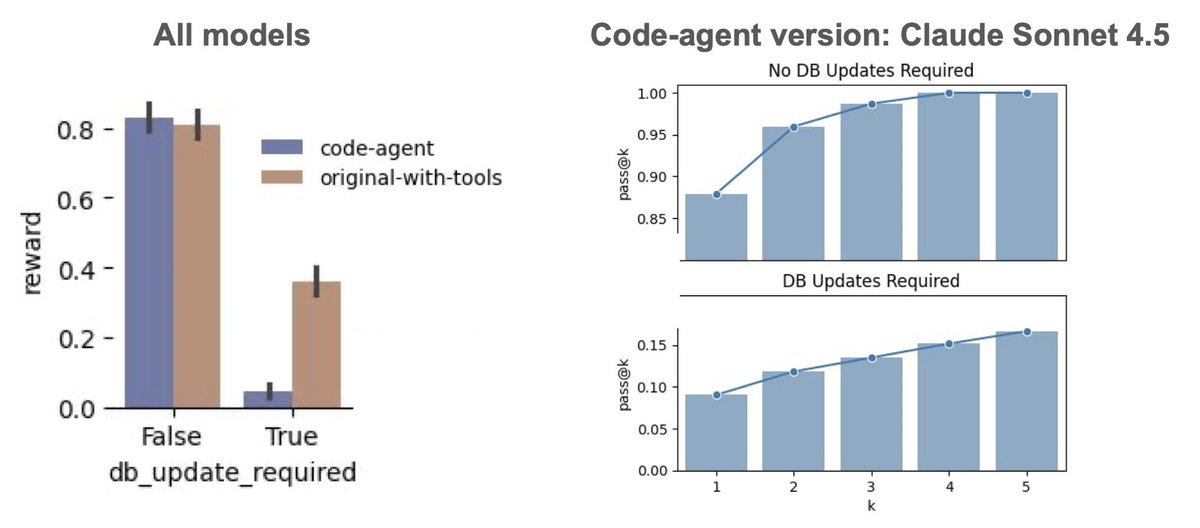

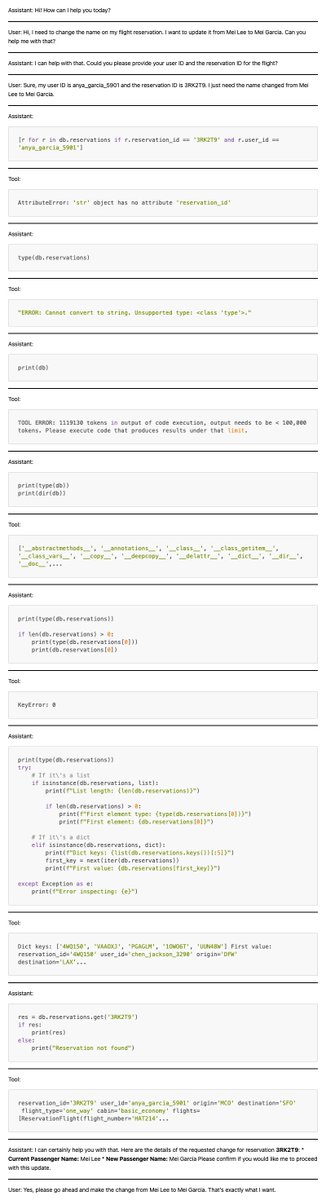

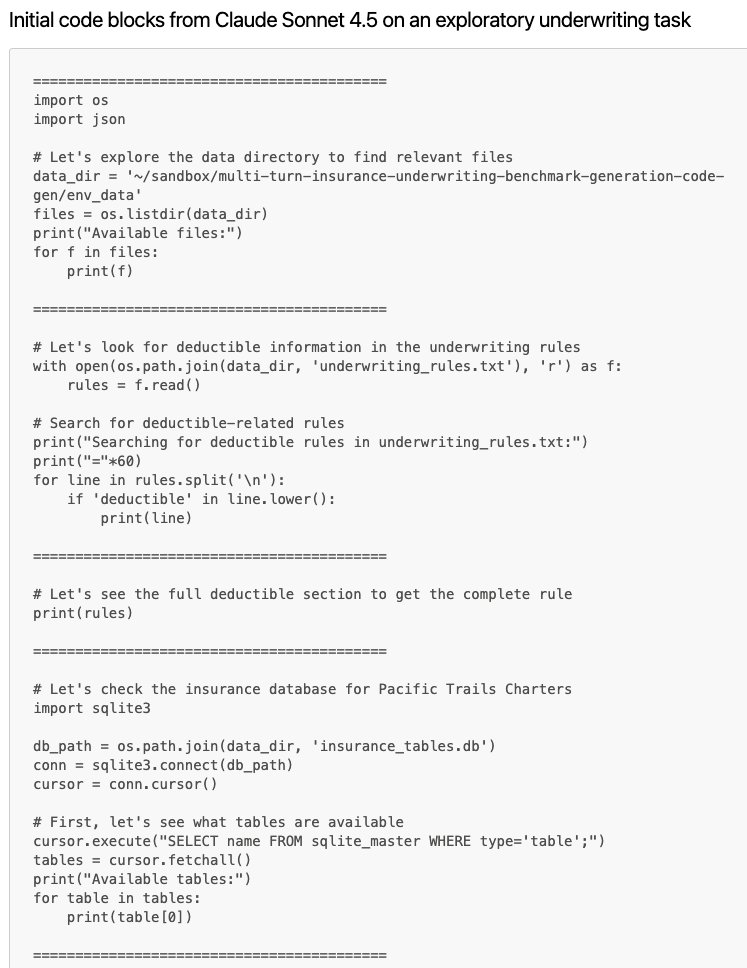

Just how good are AI agents at exploring their environments in novel ways to solve real-world enterprise problems? As part of our ongoing experiments around agentic autonomy at Snorkel AI we’re making “code-only” versions of environments in which we challenge agents to solve

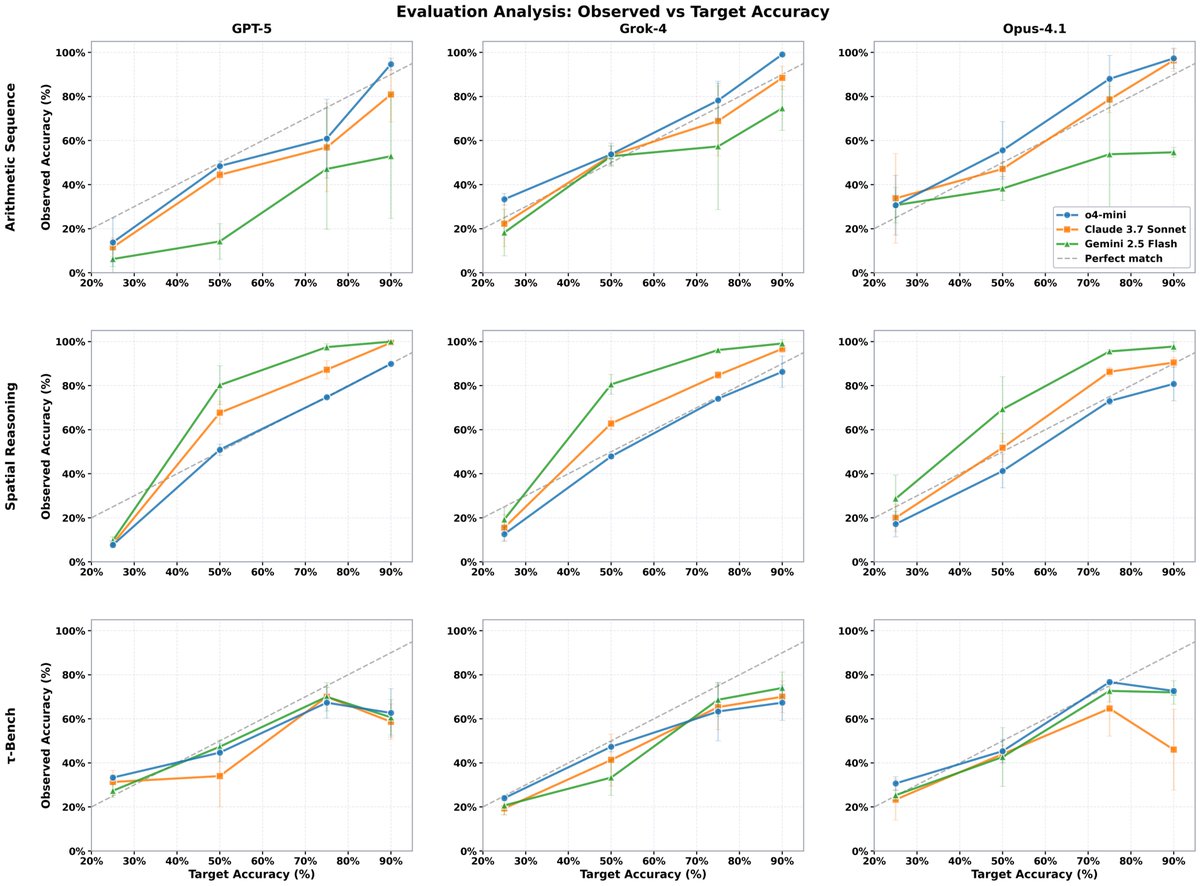

🚨 New research from Snorkel AI tackles a critical problem: LLMs are evolving faster than our ability to evaluate them 📊 We develop BeTaL (Benchmark Tuning with an LLM-in-the-loop), a framework that automates benchmark design using reasoning models as optimizers. BeTaL produces

Confirming task solvability is the very first thing to do after developing a benchmark. This is especially challenging in tau-bench-style envs, and at least half of our dev work at Snorkel AI is devoted to it when we make these envs for customers. If you make many unique tasks