Byungsoo Ko

@byungsooko1

Research and engineering on deep stuff :)

ID: 1169878424199946240

https://github.com/kobiso

06-09-2019 07:42:14

20 Tweets

54 Followers

161 Following

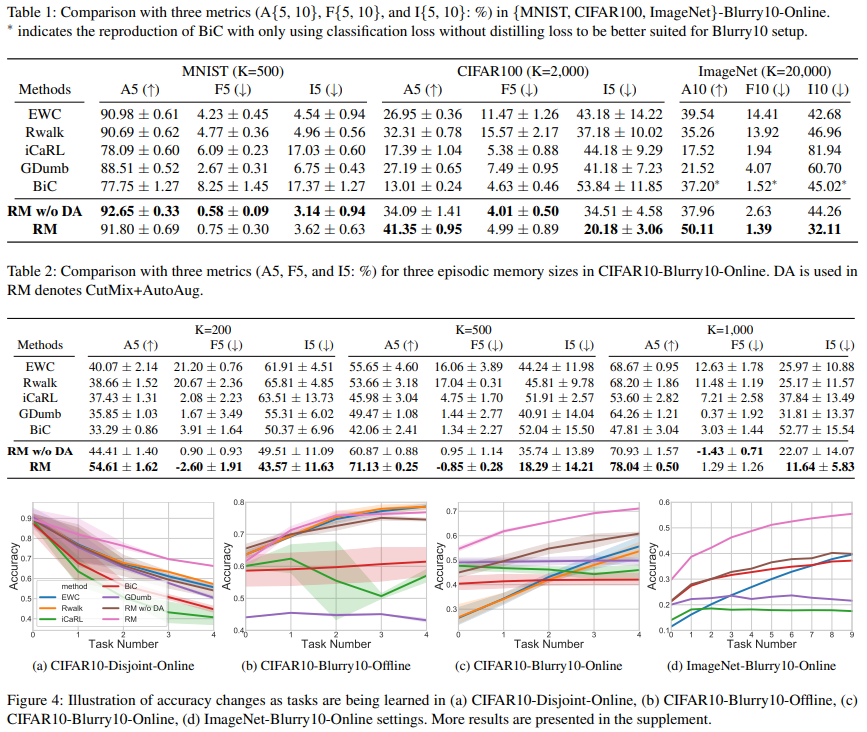

Happy to share our “Rainbow Memory”, which proposes a new method and evaluation protocol for more realistic CIL (#blurryCIL) and will appear at @cvpr2021. Congrats to Jihwan Bang, Heesu Kim, Youngjoon Yoo, Jonghyun Choi arxiv.org/abs/2103.17230 github.com/clovaai/rainbo…

Happy to share our KELIP, a Korean and English bilingual multimodal model. KELIP is trained on 1.1B image-text pairs, about three times the size of CLIP's training data. Try out pre-trained KELIP! paper: arxiv.org/abs/2203.14463 github: github.com/navervision/KE… demo: huggingface.co/spaces/navervi…