Artëm Sobolev

@art_sobolev

Preserving the entropy. Ex @BayesGroup

ID: 111886381

http://artem.sobolev.name/ 06-02-2010 13:37:42

843 Tweets

847 Followers

547 Following

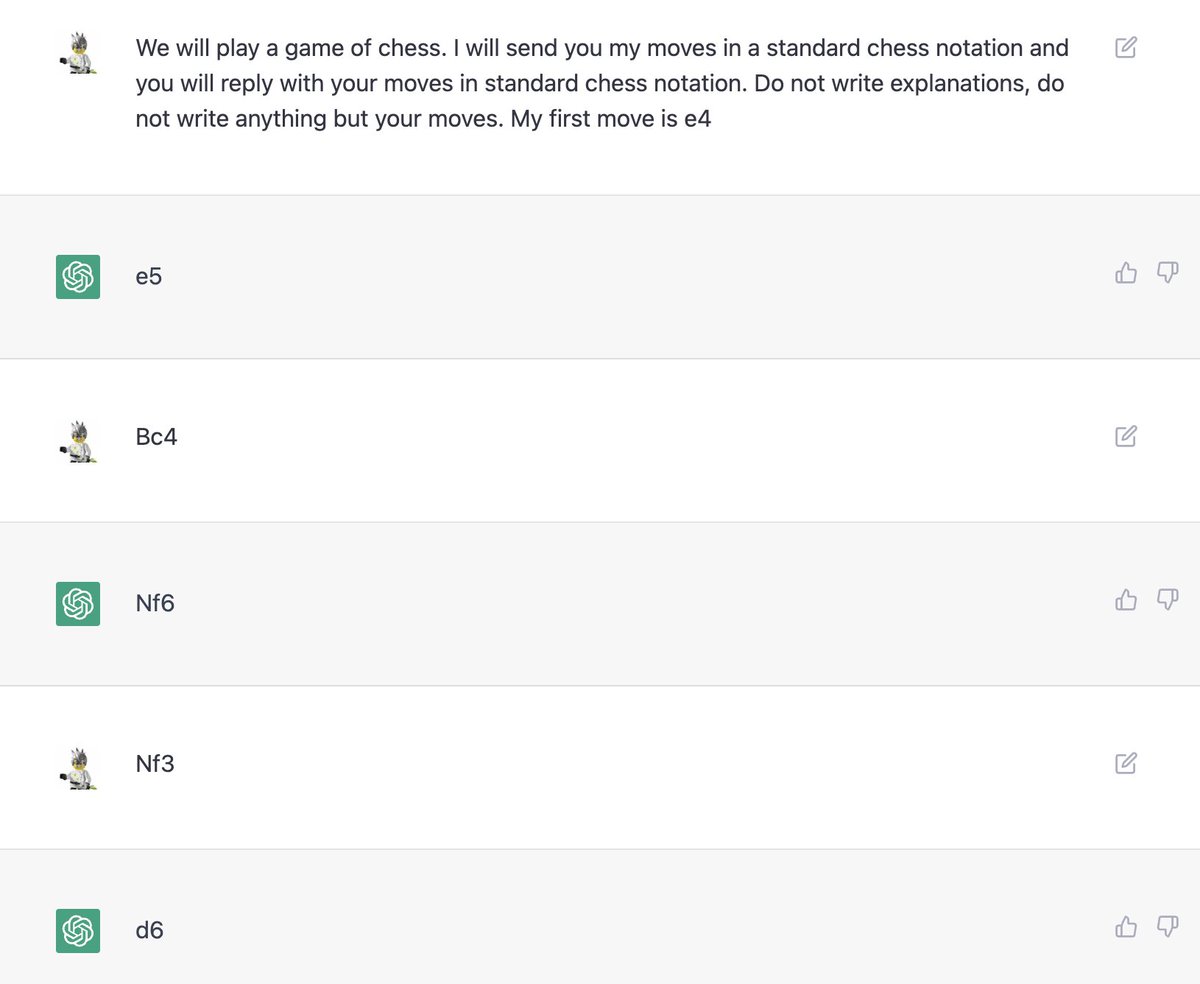

Our #icml2020 paper "Involutive MCMC: a Unifying Framework" is now available on arXiv: arxiv.org/abs/2006.16653. It describes many MCMC algorithms from a single perspective. Work with Max Welling, Evgenii Egorov, and Dmitry Vetrov.

Here's a condensed version of the matplotlib cheatsheets (github.com/matplotlib/che…) that fits on a desktop background. Full image: drive.google.com/file/d/1kwYFaR… and a vectorized .svg with the non-standard fonts outlined: drive.google.com/file/d/1b2LtZU… Thanks to Nicolas P. Rougier et al. for making it!

Come by our poster "On the Periodic Behavior of Neural Network Training with Batch Normalization and Weight Decay" tomorrow at #NeurIPS2021 poster session 6! With Maxim Kodryan, Nadia Chirkova, Andrey Malinin, and Dmitry Vetrov. Poster: nips.cc/virtual/2021/p…

Sad to see Twitter going through a lot of turbulence these days. If you're on Mastodon, follow me @[email protected]

Just stumbled upon this gem by John Schulman – a very interesting read on estimating KL divergences in practice and possible bias-variance tradeoffs joschu.net/blog/kl-approx…
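
To make the bias-variance point concrete, here is a minimal sketch of three sample-based estimators of KL(q‖p), of the kind usually attributed to that post. The labels `k1`, `k2`, `k3`, the helper names, and the two-Gaussian setup are assumptions chosen for illustration; they are not taken from the tweet.

```python
import numpy as np


def gaussian_log_pdf(x, mean, std):
    """Log-density of N(mean, std^2) evaluated at x."""
    return -0.5 * np.log(2.0 * np.pi) - np.log(std) - 0.5 * ((x - mean) / std) ** 2


def kl_estimates(n_samples=100_000, seed=0):
    """Per-sample estimates of KL(q || p) from samples x ~ q.

    Illustrative setup (an assumption): q = N(0, 1), p = N(0.1, 1).
    """
    rng = np.random.default_rng(seed)
    q_mean, p_mean, std = 0.0, 0.1, 1.0

    x = rng.normal(q_mean, std, size=n_samples)
    # log r(x) = log p(x) - log q(x), the log density ratio
    log_r = gaussian_log_pdf(x, p_mean, std) - gaussian_log_pdf(x, q_mean, std)

    samples = {
        # Unbiased, but individual samples can be negative (high variance).
        "k1": -log_r,
        # Biased in general, always nonnegative, lower variance.
        "k2": 0.5 * log_r ** 2,
        # Unbiased because E_q[r - 1] = 0, and nonnegative up to rounding.
        "k3": np.expm1(log_r) - log_r,
    }

    # Closed form for two Gaussians that share the same standard deviation.
    true_kl = (q_mean - p_mean) ** 2 / (2.0 * std ** 2)
    return true_kl, samples


if __name__ == "__main__":
    true_kl, samples = kl_estimates()
    print(f"true KL = {true_kl:.6f}")
    for name, values in samples.items():
        print(f"{name}: mean = {values.mean():.6f}, std = {values.std():.6f}")
```

Comparing each estimator's mean against the closed-form value shows its bias, and comparing the per-sample standard deviations shows the variance side of the tradeoff.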