Ajay Mandlekar

@ajaymandlekar

NVIDIA AI Research Scientist | EE PhD @Stanford | Teaching 🤖 to imitate humans.

ID: 1192500851492831237

https://ai.stanford.edu/~amandlek/ 07-11-2019 17:55:46

207 Tweets

2.2K Followers

369 Following

Some nice work on the latest edition of Ajay Mandlekar's MimicGen

I wanted to test LeRobot on complex tasks without a physical robot arm. MimicGen has 26K+ demonstrations across 12 tasks. I created mg2hfbot to convert datasets, train LeRobot & RoboMimic policies, and evaluate them. Repo: github.com/kywch/mg2hfbot Thanks to Ajay Mandlekar,

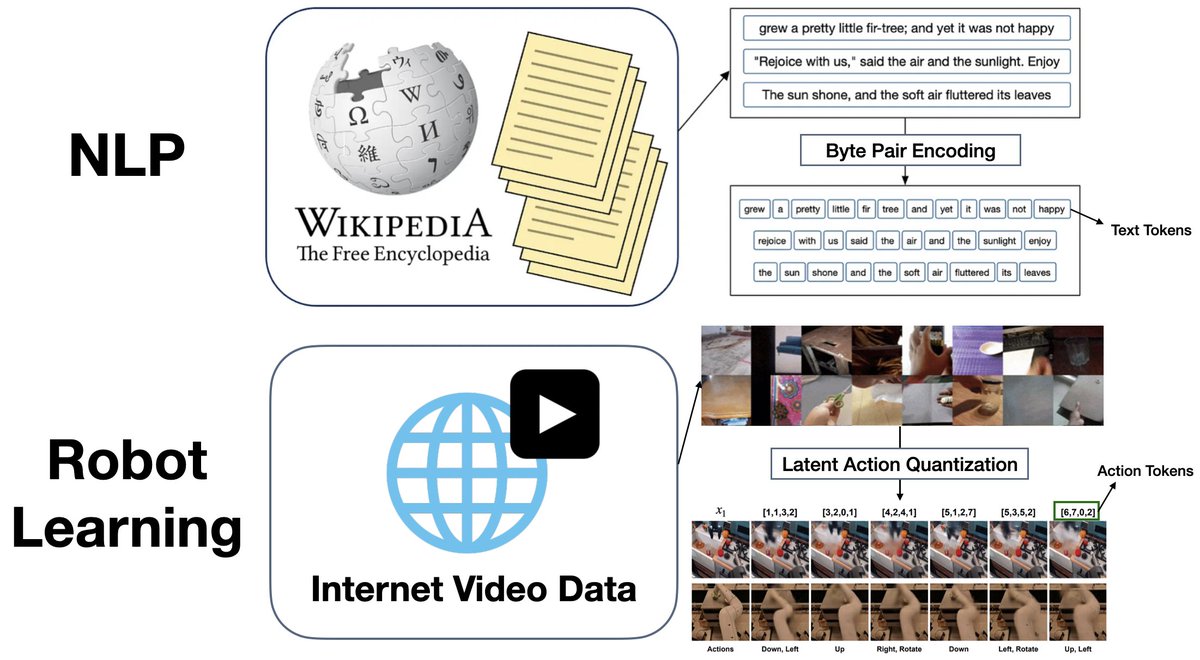

Excited to share that 𝐋𝐀𝐏𝐀 has won the Best Paper Award at the CoRL 2024 Language and Robot Learning workshop, selected among 75 accepted papers! Both Seonghyeon Ye and I come from NLP backgrounds, where everything is built around tokenization. Drawing inspiration from

Excited to share DexMachina, our new algorithm that can learn dexterous manipulation across different robot hands all from just a single human demonstration. Great work led by Mandi Zhao during her internship in our group!

TRI's latest Large Behavior Model (LBM) paper landed on arxiv last night! Check out our project website: toyotaresearchinstitute.github.io/lbm1/ One of our main goals for this paper was to put out a very careful and thorough study on the topic to help people understand the state of the