Alexey Bokhovkin

@abokhovkin

Computer Vision researcher @ TUM

3D Indoor Understanding

ID: 1334896500275548161

04-12-2020 16:25:15

42 Tweets

343 Followers

233 Following

Can we match visual features jointly across multiple frames? Yes! Barbara Roessle's #ICCV2023 paper proposes a differentiable pose optimization for end2end feature matching across multiple frames, thus obtaining better poses! barbararoessle.github.io/e2e_multi_view… youtu.be/uuLb6GfM9Cg

Check out Christian Diller's CG-HOI :) We generate realistic 3D human-object interactions, from object geometry and text description. A key ingredient is explicit modeling of contact, during training and as guidance during inference. cg-hoi.christian-diller.de youtube.com/watch?v=GNyQwT…

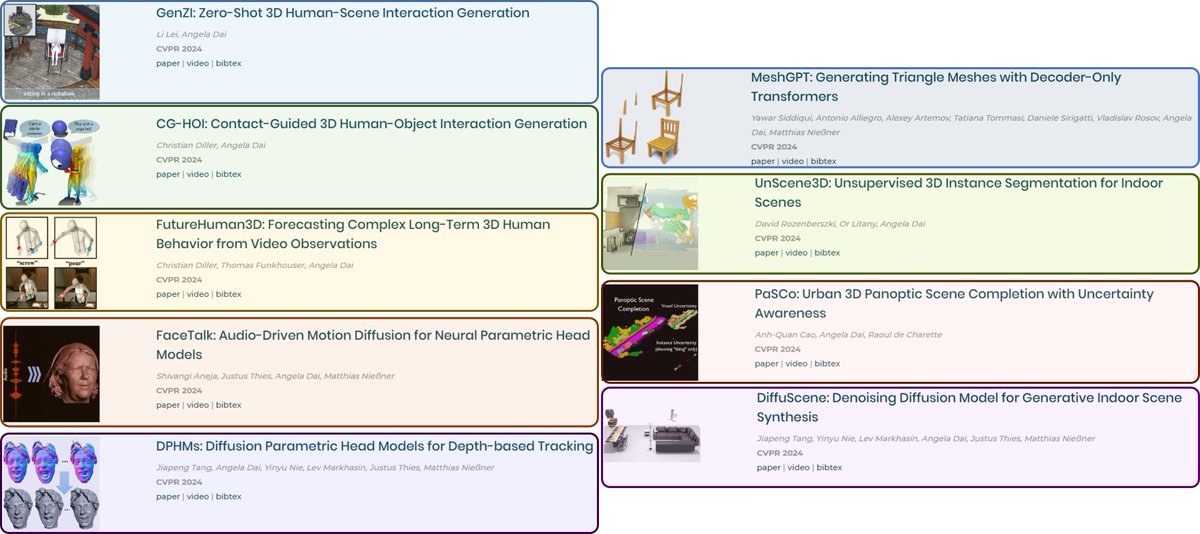

Check out our #CVPR'24 papers on 3D human interactions, generative 3D modeling, and uncertainty-aware and unsupervised 3D semantic scene understanding! Congrats to Lei Li, David Rozenberszki, Christian Diller, Yawar Siddiqui, Shivangi, Jiapeng Tang, and Anh-Quan Cao for their amazing work!

Excited to present DiffCAD coming to #SIGGRAPH2024! Daoyi Gao introduces the first probabilistic single-view CAD retrieval & alignment. We train only on synthetic -> generalize robustly to real images! Check out the code: daoyig.github.io/DiffCAD_/ w/ David Rozenberszki, Stefan Leutenegger

📢DNF: Generating 4D animations with dictionary-based neural fields! Xinyi Zhang presents a new dictionary-based neural field for unconditional 4D generation of deforming shapes -- generating motions with high-quality shape and temporal consistency. xzhang-t.github.io/project/DNF/

Excited to announce ScanNet++ v2!🎉 Chandan Yeshwanth and Yueh-Cheng Liu have been working tirelessly to bring: 🔹1006 high-fidelity 3D scans 🔹+ DSLR & iPhone captures 🔹+ rich semantics Elevating 3D scene understanding to the next level!🚀 w/ Matthias Niessner kaldir.vc.in.tum.de/scannetpp

📢 ScanNet++ v2 Benchmark Release! 🏆 Test your state-of-the-art models on: 🔹 Novel View Synthesis 📸➡️🖼️ 🔹 3D Semantic & Instance Segmentation 🤖🔍🕶️ Shoutout to Chandan Yeshwanth and Yueh-Cheng Liu for their incredible work👏 🚀Check it out: kaldir.vc.in.tum.de/scannetpp/

📢Animating the Uncaptured 📢 We animate 3D humanoid meshes using video diffusion priors given a text prompt. 🎥youtu.be/_YL1J_V3smI 🌍marcb.pro/atu Realistic motion generation for 3D characters - without motion capture! 🚀 Great work by Marc Benedí, Angela Dai

📢ExCap3D: Multilevel Captioning of Objects in 3D Scenes Chandan Yeshwanth generates consistent object and part-level descriptions of objects in 3D scenes, and introduces a new dataset with 190k captions for 34k ScanNet++ objects. Project: cy94.github.io/excap3d w/ David Rozenberszki

📢SceneFactor code is released! SceneFactor is a factored latent diffusion for controllable, large-scale scene synthesis and editing! w/ Quan Meng, Shubham Tulsiani, Angela Dai Check out the code here: github.com/alexeybokhovki…. We present SceneFactor at #CVPR2025 on Fri 13, -10:30