Zulfikar Ramzan (He / Him)

@zulfikar_ramzan

CTO Point Wild. Former CTO @RSASecurity. MIT PhD. Intelligent Safety, Cybersecurity, Crypto(graphy), ML / AI. My tweets & opinions. he / him

ID: 55309639

http://www.pointwild.com 09-07-2009 18:01:17

3.3K Tweets

4.4K Followers

2.2K Following

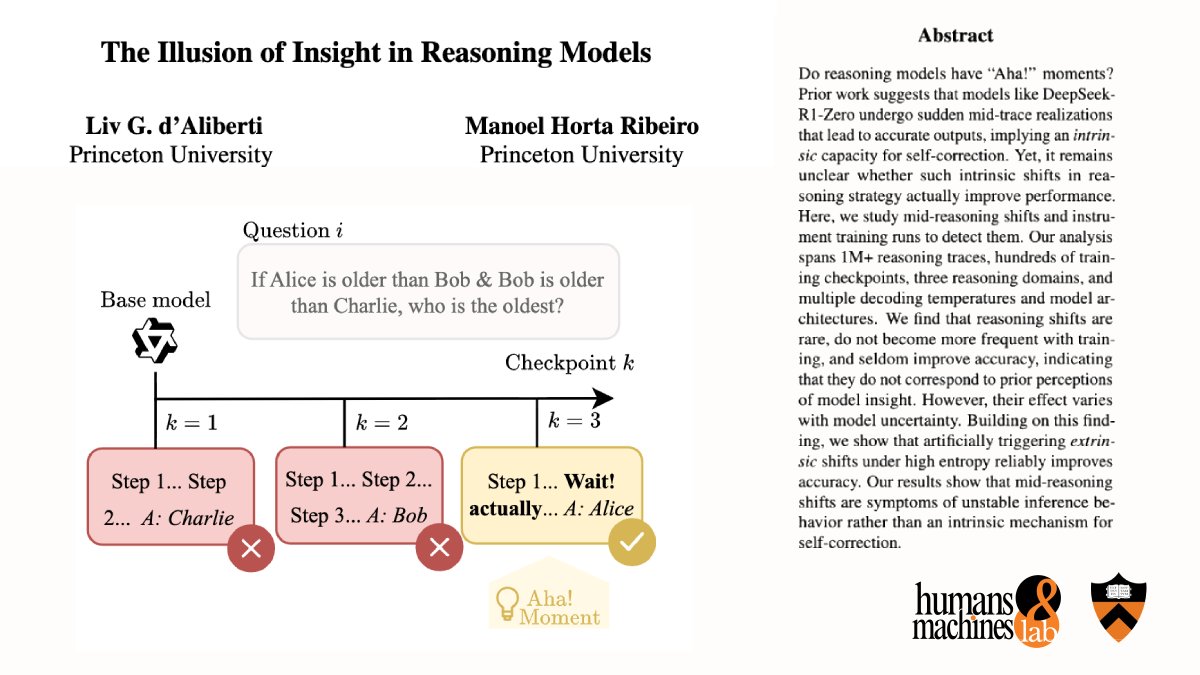

Do reasoning models have real “Aha!” moments, mid-chain realizations where they intrinsically self-correct? In a new pre-print, “The Illusion of Insight in Reasoning Models,” led by Liv d'Aliberti, we provide strong evidence that they do not! 📜: arxiv.org/abs/2601.00514

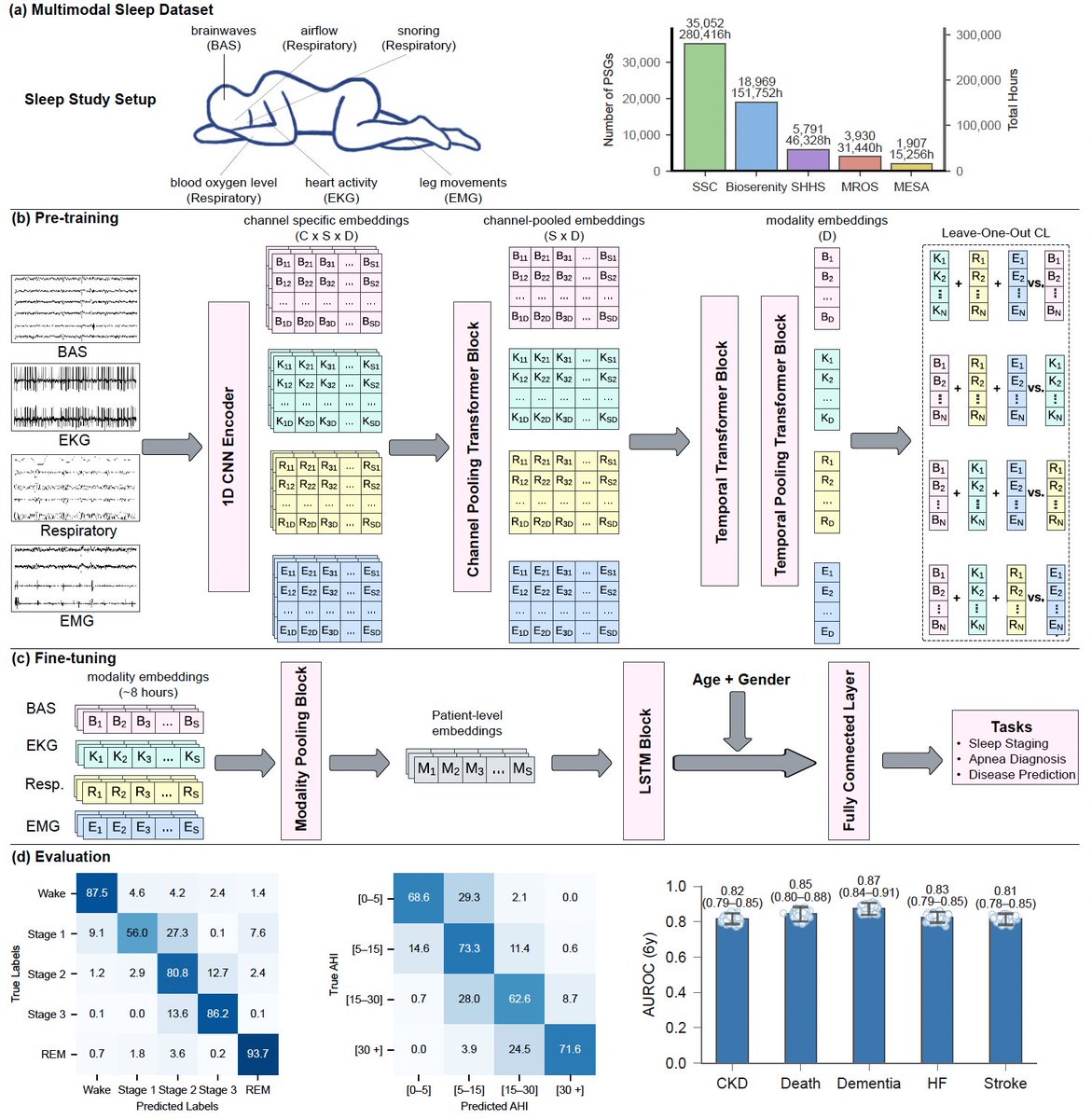

Today in Nature Medicine we report that AI can predict 130 diseases from 1 night of sleep🛌 We trained a foundation model (#SleepFM) on 585K hours of sleep recordings from 65K people—brain, heart, muscle & breathing signals combined. AI learns the language of sleep🧵

New Anthropic Fellows research: How does misalignment scale with model intelligence and task complexity? When advanced AI fails, will it do so by pursuing the wrong goals? Or will it fail unpredictably and incoherently—like a "hot mess?" Read more: alignment.anthropic.com/2026/hot-mess-…

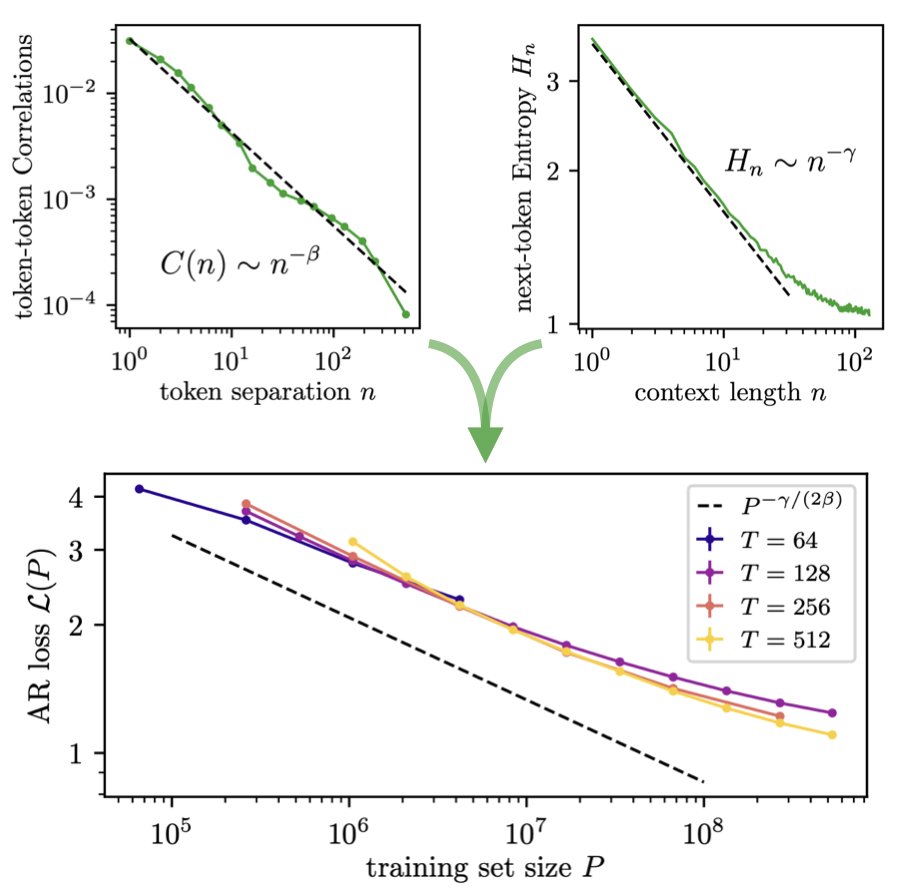

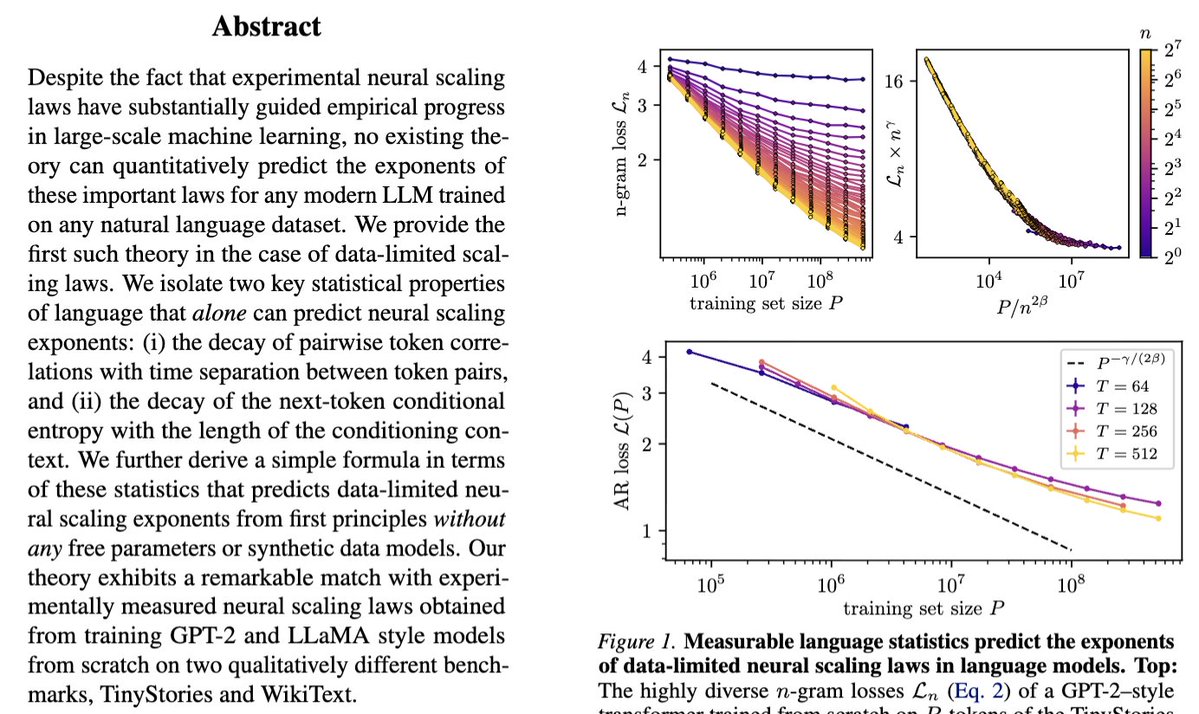

Our new paper "Deriving neural scaling laws from the statistics of natural language" arxiv.org/abs/2602.07488 led by Francesco Cagnetta & Allan Raventós w/ Matthieu Wyart makes a breakthrough! We can predict data-limited neural scaling law exponents from first principles using the

Our grad-level "Deep Learning" course (MIT's 6.7960) is now freely available online through OpenCourseWare: ocw.mit.edu/courses/6-7960… Lecture videos, psets, and readings are all provided. Had a lot of fun teaching this with Sara Beery and Jeremy Bernstein!