Zhiheng LYU

@zhihenglyu

MMath Student @UWaterloo TIGER-Lab

Prev @HKUniversity @ETH_en @UCBerkeley

ID: 1529087177845616640

http://cogito233.github.io

24-05-2022 13:09:45

23 Tweets

117 Followers

337 Following

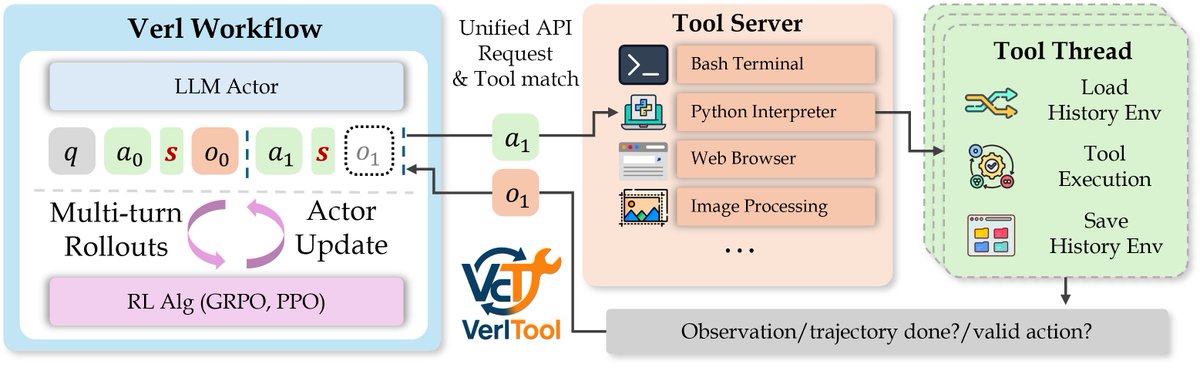

Will be at NAACL next week, excited to share two of our papers:

FACTTRACK: Time-Aware World State Tracking in Story Outlines arxiv.org/abs/2407.16347

THOUGHTSCULPT: Reasoning with Intermediate Revision and Search arxiv.org/abs/2404.05966

Shoutout to first authors Zhiheng LYU and