Vladimir Rybakov

@vladimirrybako9

Head of Data Science at WaveAccess. wave-access.com

ID: 1013121828972318720

30-06-2018 18:07:21

438 Tweets

32 Followers

29 Following

We had an opportunity to chat with Vladimir Rybakov (Head of #DataScience at WaveAccess) about solving problems under pressure, taking responsibility, why asking for help is an important skill, and more! bit.ly/2yr9vTK

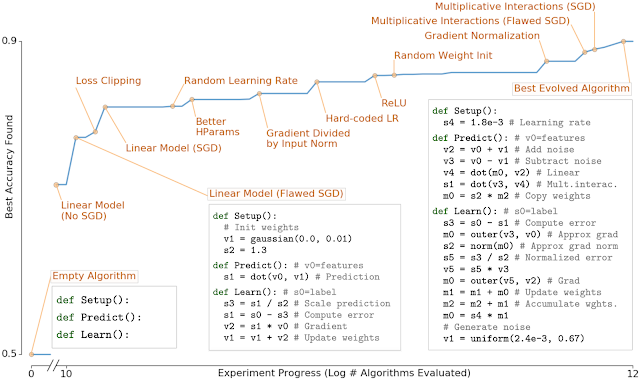

This brilliant OpenAI work and the video of Andrej Karpathy I shared recently are very exciting AI frontiers. The story repeats itself: Big net, curated data, and common sense are the ingredients. Congrats Ilya Sutskever et al. arxiv.org/abs/2005.14165

What makes production ML hard? Cleaning, labeling, and augmenting data; troubleshooting training and ensuring reproducibility; deploying models and monitoring their real-world impact. To help, we're excited to announce our online production ML course: course.fullstackdeeplearning.com

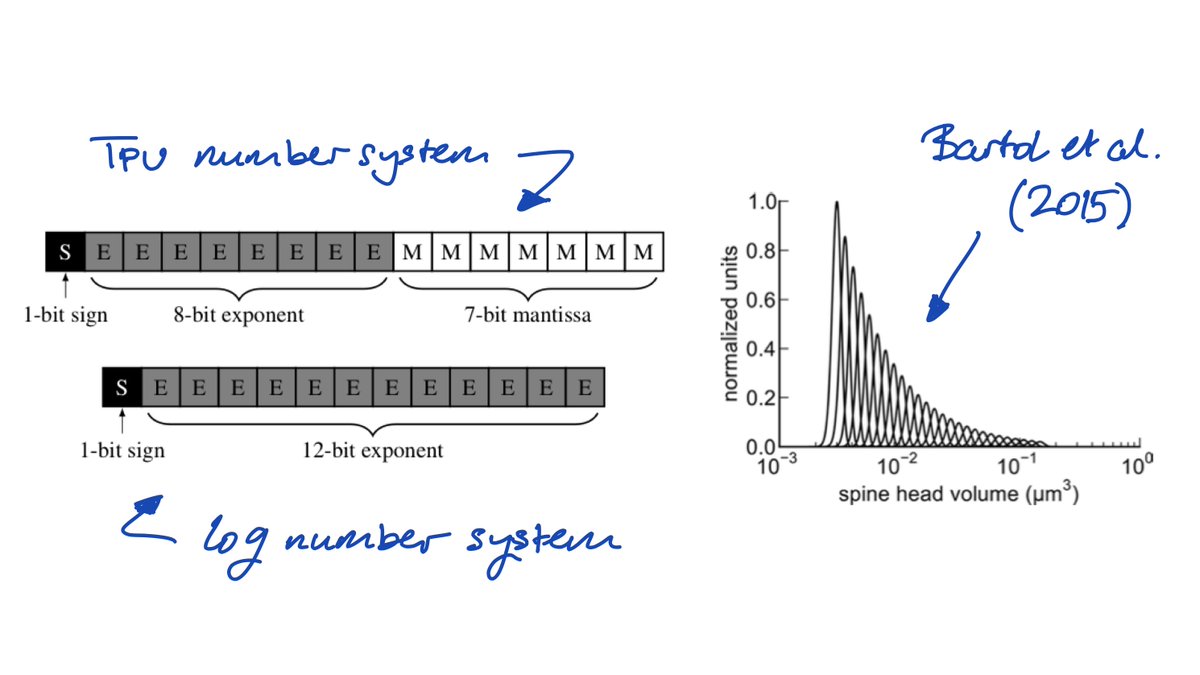

Madam (multiplicative adam) needs little to no learning rate tuning and brings the numerical representation of the synapse closer to neuroscience. w/ Jiawei Zhao, Markus Meister, Ming-Yu Liu, Prof. Anima Anandkumar & Yisong Yue paper: arxiv.org/abs/2006.14560 code: github.com/jxbz/madam

Suffering from post-#acl2020nlp withdrawal? There are also lots of papers by Percy Liang, Tatsunori Hashimoto, and other Stanford people at ICML 2020 this coming week! ai.stanford.edu/blog/icml-2020/

Open-source, high-quality texture synthesis and style-transfer using PyTorch! Amazing work by Alex J. Champandard 🌱 👏

Java is still trying hard to catch up to Python in the DS/ML field, though it is good to have a scikit-like library for Java just in case. blogs.oracle.com/javamagazine/p… PS Christoph Henkelmann, your thoughts? :)