Laura Stoinski

@stoinskilaura

Not active here anymore. Follow me on: @laurastoinski.bsky.social

PhD candidate in the "Vision and Computational Cognition" group at @MPI_CBS and @UniLeipzig.

ID: 926186732395081728

http://laurastoinski.com 02-11-2017 20:38:19

40 Tweets

90 Followers

165 Following

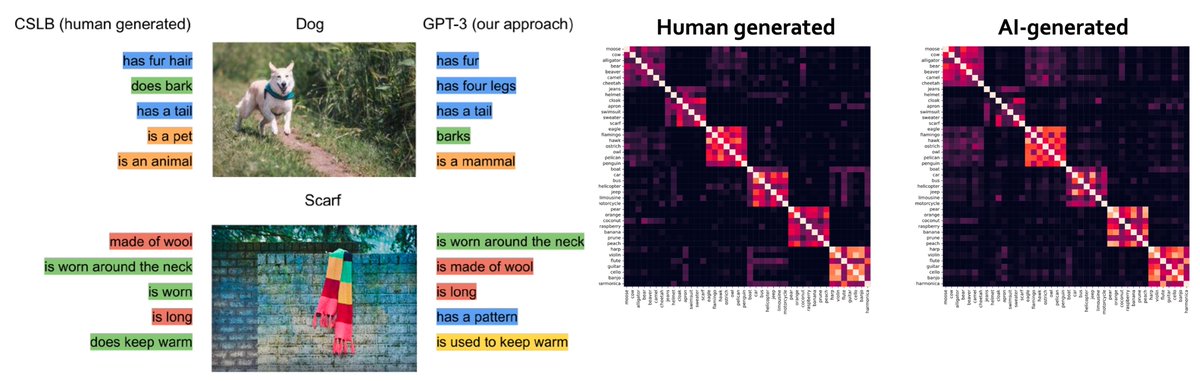

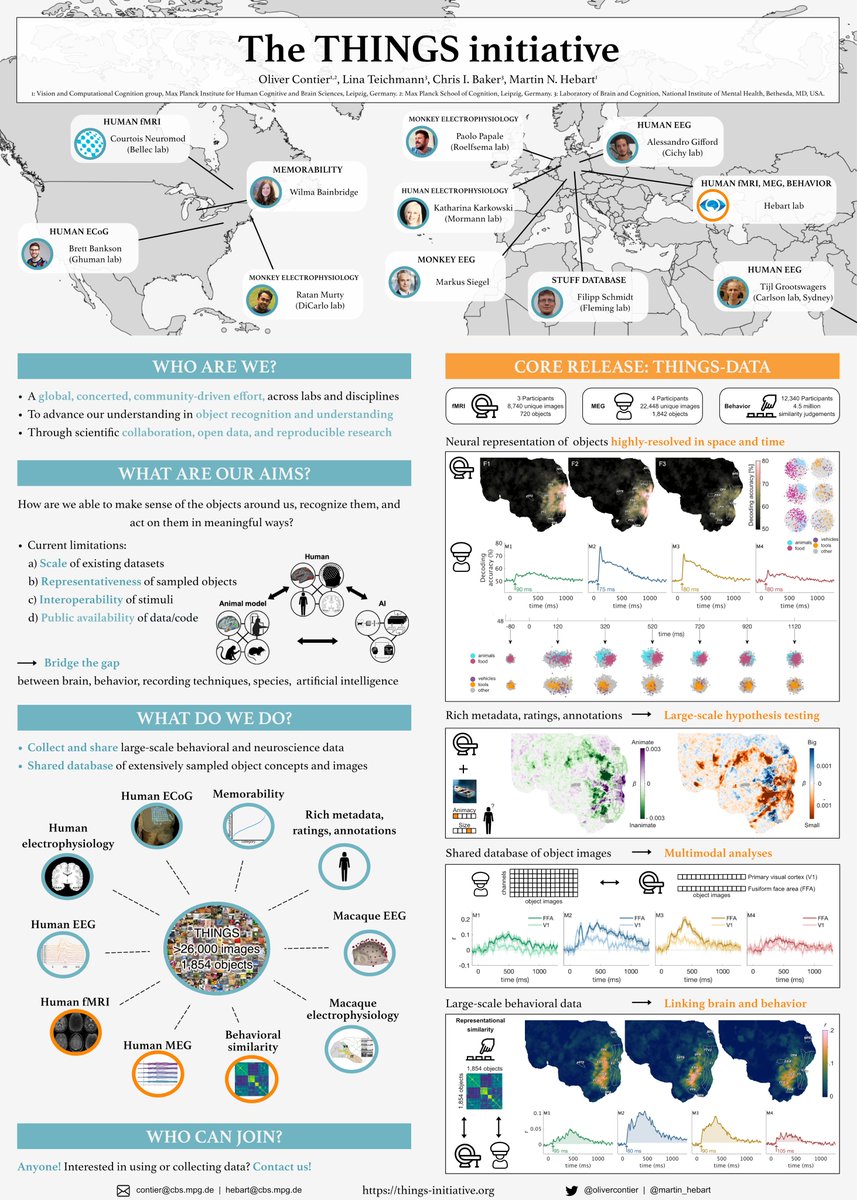

Please come to Hannes Hansen's poster on Sunday morning (33.312) in the Banyan Breezeway at #VSS2022! He will show you how he used the large language model GPT-3 to generate human-level semantic feature norms for 1854 objects.

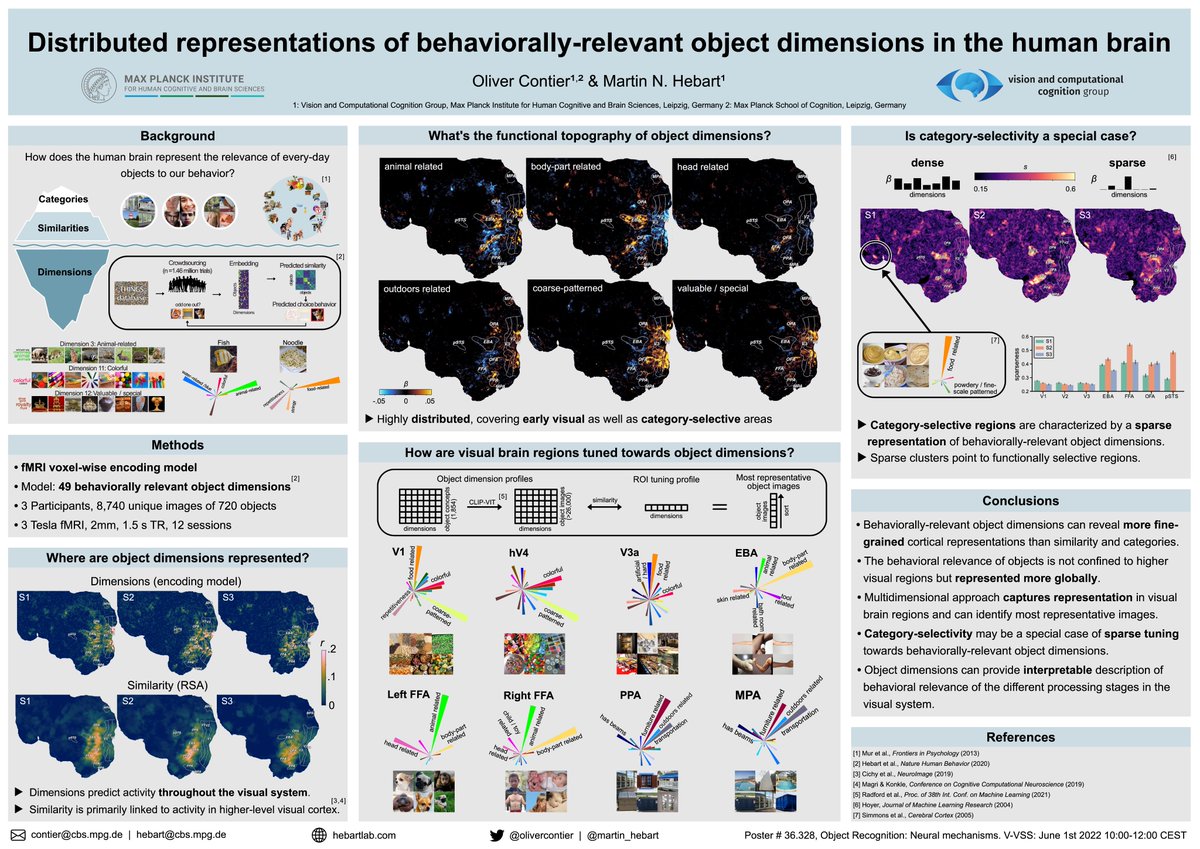

Interested in how the brain represents the relevance of every-day objects to our behavior? Visit us! In our poster at #VSS2022, Martin Hebart and I explore the neural representation of object dimensions underlying perceived similarities. (tomorrow afternoon Banyan Breezeway)

🥳 I’m happy that our paper “Feature-reweighted representational similarity analysis: A method for improving the fit between computational models, brains, and behavior” is now published in NeuroImage. Why would you want to use FR-RSA? sciencedirect.com/science/articl… 1/n

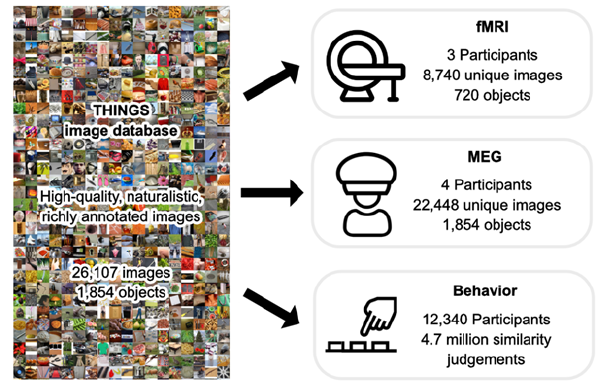

Excited about the potential of large-scale open data? Visit our #PuG2022 poster (tomorrow 14:30-16:00): Introducing the THINGS initiative, a joint effort for collecting behavioral/neuro data in object recognition & understanding using the same image database! With Martin Hebart

(3) Rich metadata: THINGS-data allows for testing countless hypotheses, using semantic feature norms for all 1854 objects, typicality ratings for 53 high-level categories, or diverse ratings incl. animacy, size or manipulability. Laura Stoinski 7/n psyarxiv.com/exu9f

I’m so excited to finally see THINGS-data released! Working on this fMRI dataset was an awesome challenge for me and motivated me to learn a ton. So many thanks to Martin Hebart, Chris Baker, @lina_teichmann and everyone involved for bringing me on board! 1/n

What hypotheses can be tested? THINGSplus (Laura Stoinski psyarxiv.com/exu9f) contains rich metadata about objects, making it possible to address a wide range of questions. We demonstrate this potential by replicating seminal findings on object animacy and size on a large scale.

I hope you are enjoying #VSS2023! In case you are interested in what our lab has been up to, check out these presentations! 👇 Work with Marie St-Laurent courtois-neuromod @kateiyas Johannes Singer Oliver Contier Judy Fan Wilma Bainbridge Kushin Mukherjee Johannes Roth Laura Stoinski

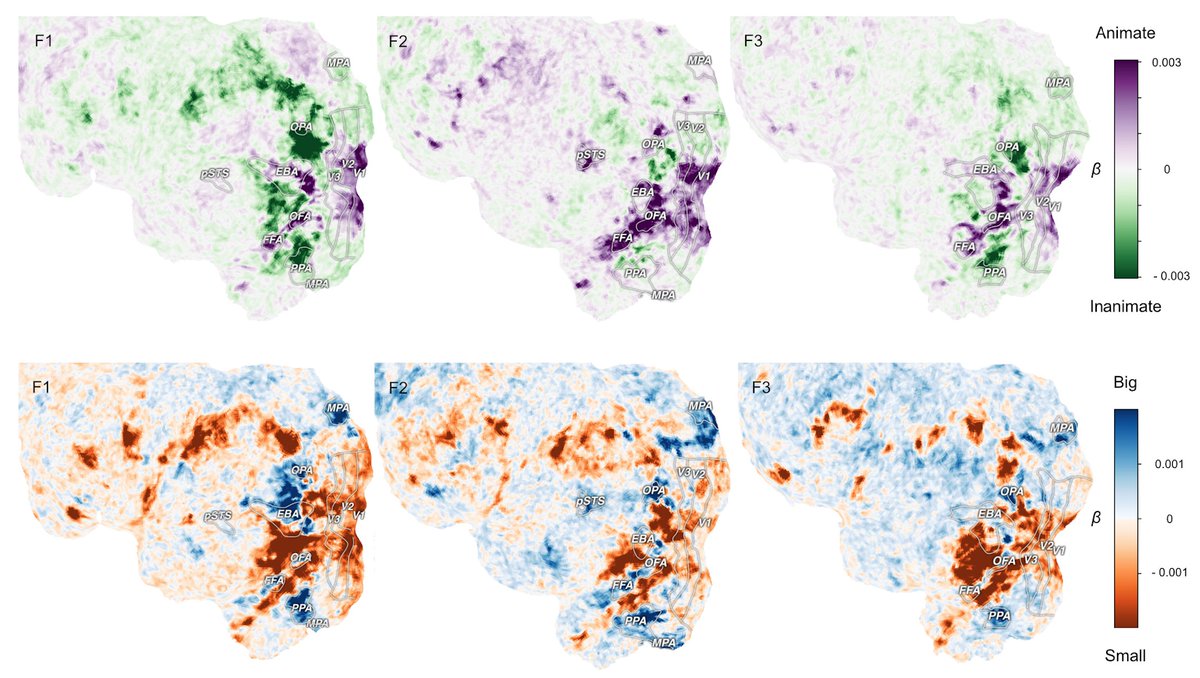

Finally, PhD Laura Stoinski revisits the animacy, size & curvature organization in visual cortex for hundreds of categories using THINGS-fMRI. She updates our understanding of these dimensions, reveals modulatory factors & introduces a massive new dataset of perceived curvature.

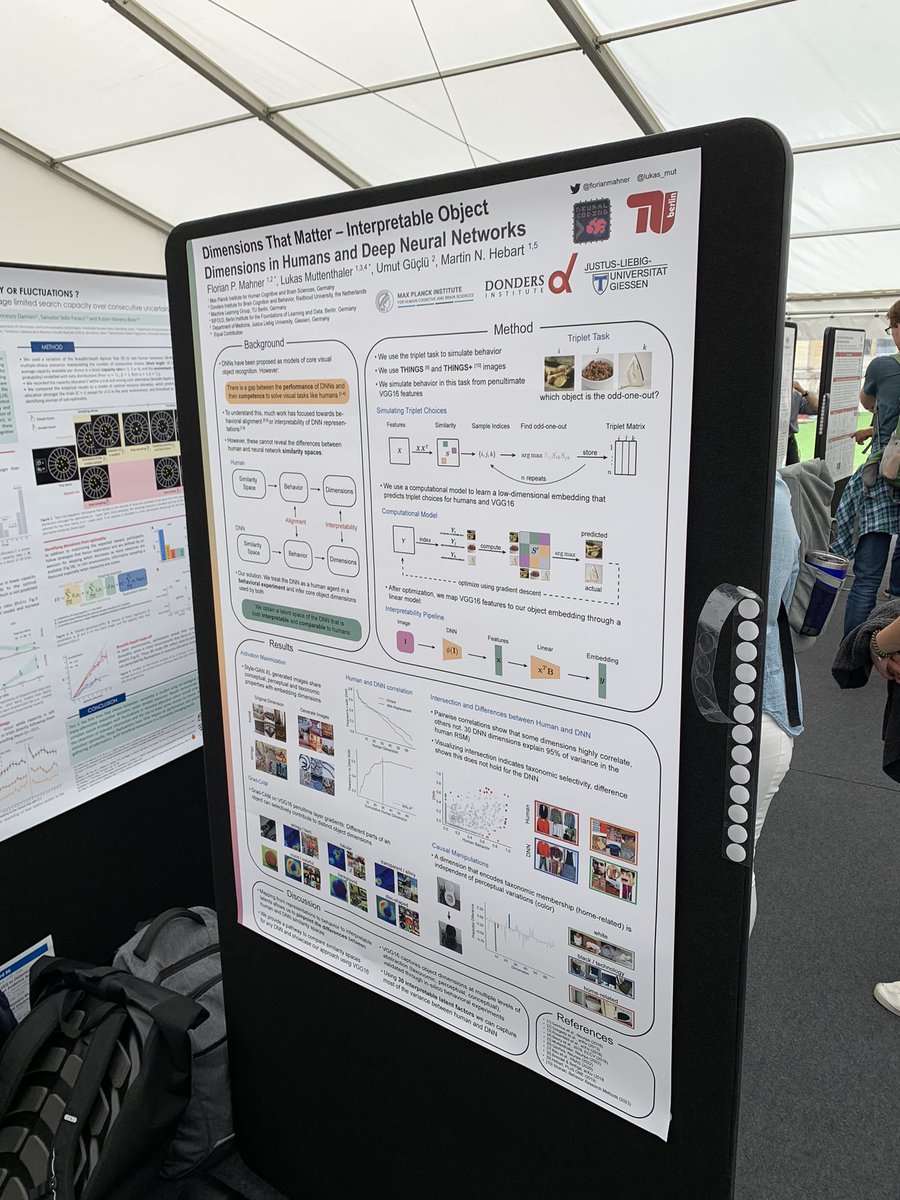

Come to poster 2B.95 at CogCompNeuro to hear about our work on interpretable object dimensions in deep neural nets! Florian Mahner Martin Hebart

How does our brain enable us to make sense of our visual world? 🗺👀🧠 Excited to share our latest work with Chris Baker and Martin Hebart: “Distributed representations of behaviorally relevant object dimensions in the human visual system” biorxiv.org/content/10.110… 🧵 1/n

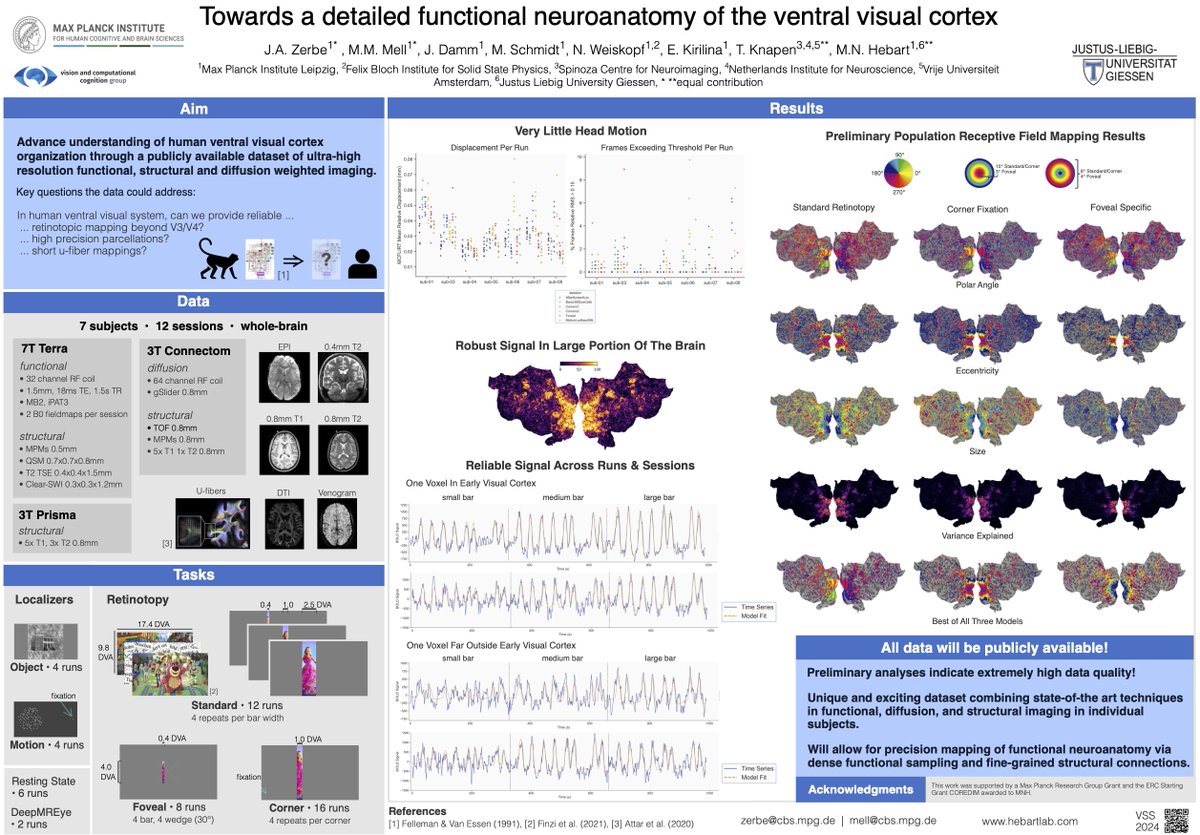

Check out Josefine’s and Dr. Maggie Mae Mell's amazing dataset merging state-of-the-art techniques in functional, diffusion, and structural imaging! Today 8:30 am – 12:30 pm in Banyan Breezeway VSS Meeting Martin Hebart Tomas Knapen

What makes humans similar to or different from AI? In a new study, led by Florian Mahner and Lukas Muttenthaler and w/ Umut Güçlü, we took a deep look at the factors underlying their representational alignment, with surprising results. arxiv.org/abs/2406.19087 🧵

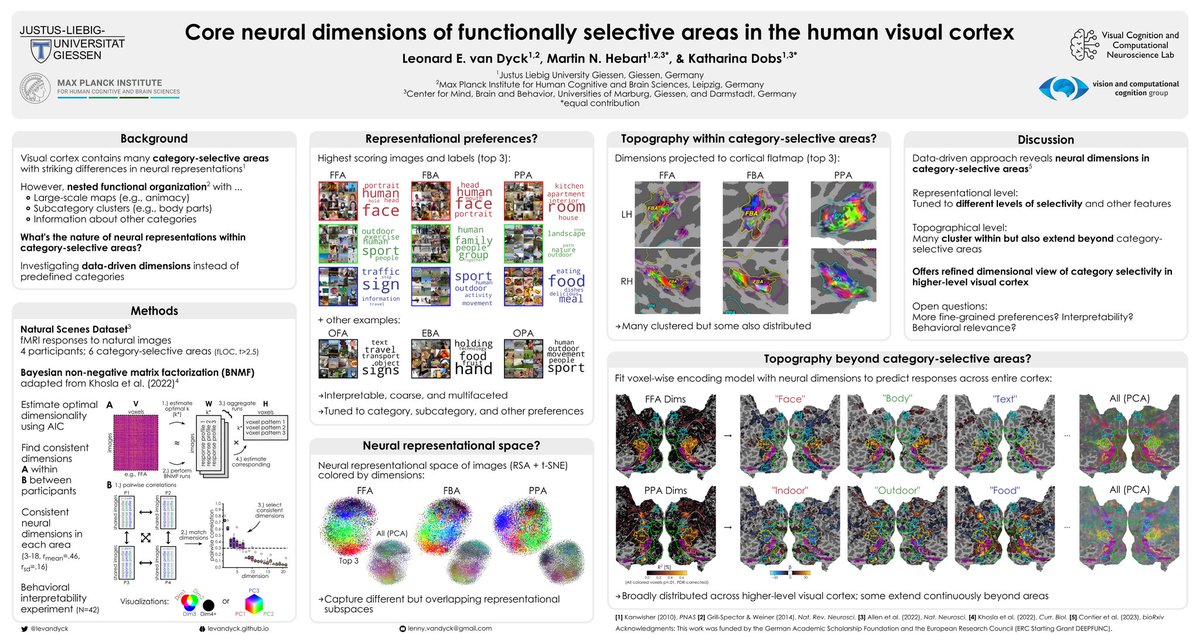

Excited to present findings from my first PhD project with Martin Hebart and Katharina Dobs at #CCN2024. If you're into visual cortex, functional selectivity, and/or representational dimensions, make sure to stop by poster P154 today! 🧠🌈

Hugely excited that this work with Martin Hebart and Chris Baker is now out in Nature Human Behaviour!!! By moving from a category-focused to a behaviour-focused model, we identified behaviourally relevant object information throughout visual cortex. nature.com/articles/s4156…