Generative AI & RL Community

@rlcommunity8

Community of Generative AI and Reinforcement Learning Researchers, Practitioners and Enthusiasts. Monthly Meetup and Newsletter.

ID: 1090954837708234753

31-01-2019 12:47:57

1.1K Tweets

2.2K Followers

508 Following

Can we get LLMs to "hedge" and express uncertainty rather than hallucinate? For this we first have to understand why hallucinations happen. In new work led by Katie Kang we propose a model of hallucination that leads to a few solutions, including conservative reward models 🧵👇

A fun chat with Craig S. Smith from back in December at NeurIPS: youtu.be/Tk1pX_IMYzQ?si… Thanks Craig S. Smith for the chat, lots of fun questions. I hope I didn't ramble too much🙂

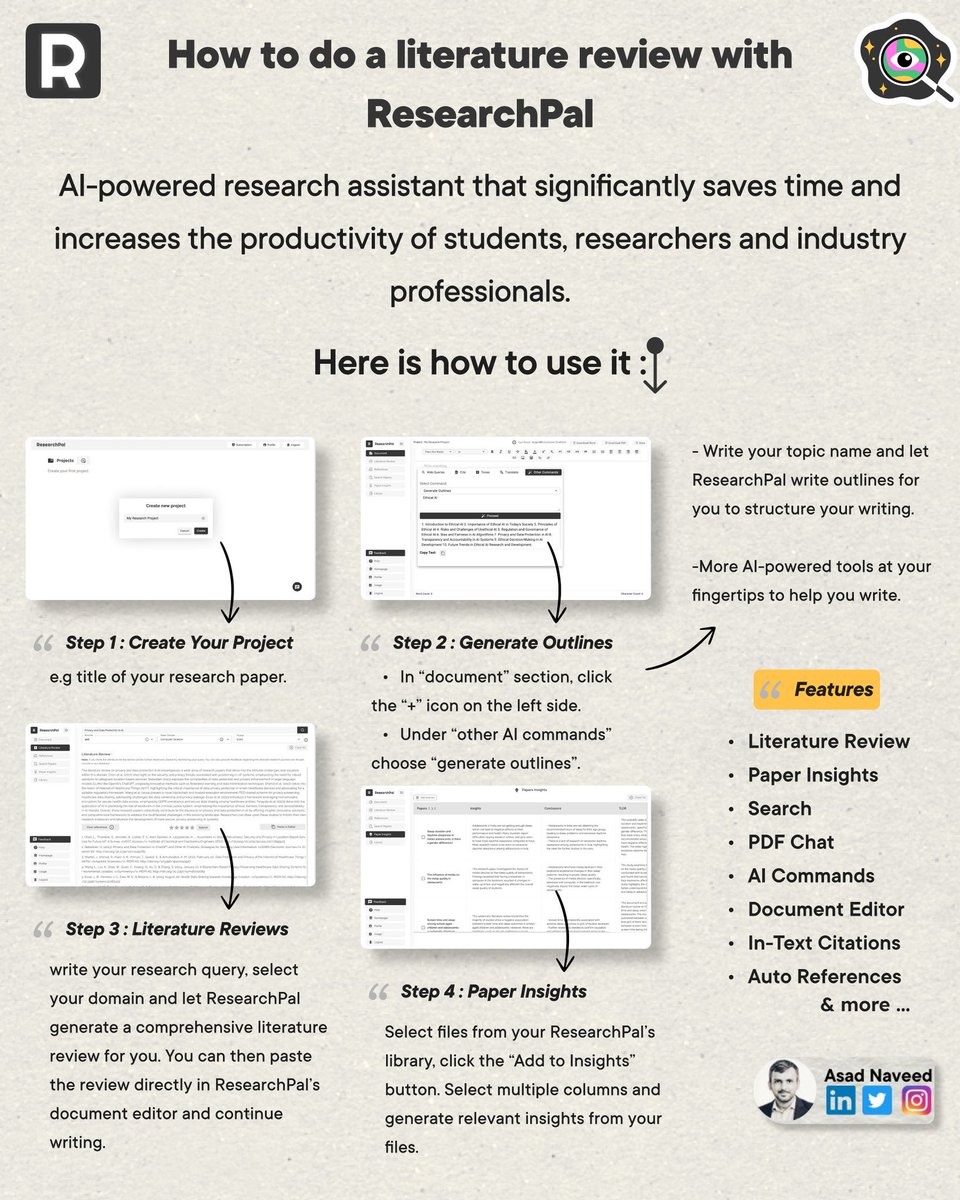

This week, I tried ResearchPal (researchpal.co) and here's my review on it: It's a simple tool that quickly automates a lot of your research needs. Here're some specific use cases:

Thomas Wolf (@thom_wolf): [75min talk] i finally recorded this lecture I gave two weeks ago because people kept asking me for a video

so here it is, enjoy "The Little guide to building Large Language Models in 2024"

tried to keep it short and comprehensive – focusing on concepts that are crucial for

![Thomas Wolf (@thom_wolf) on Twitter photo](https://pbs.twimg.com/media/GJwmEHKXoAEvb7d.jpg)