Jonathan Pilault

@j_pilault

• ML Research Scientist at Silicon Valley startup @ZyphraAI

• Former researcher @GoogleDeepMind @nvidia

• PhD @Mila_Quebec

ID: 110540208

01-02-2010 22:32:22

106 Tweets

324 Followers

484 Following

My research group Krueger AI Safety Lab is looking for interns! Applications are due in 2 weeks ***January 29***. The long-awaited form: forms.gle/iLU1uQAxZ2UKEN… Please share widely!!

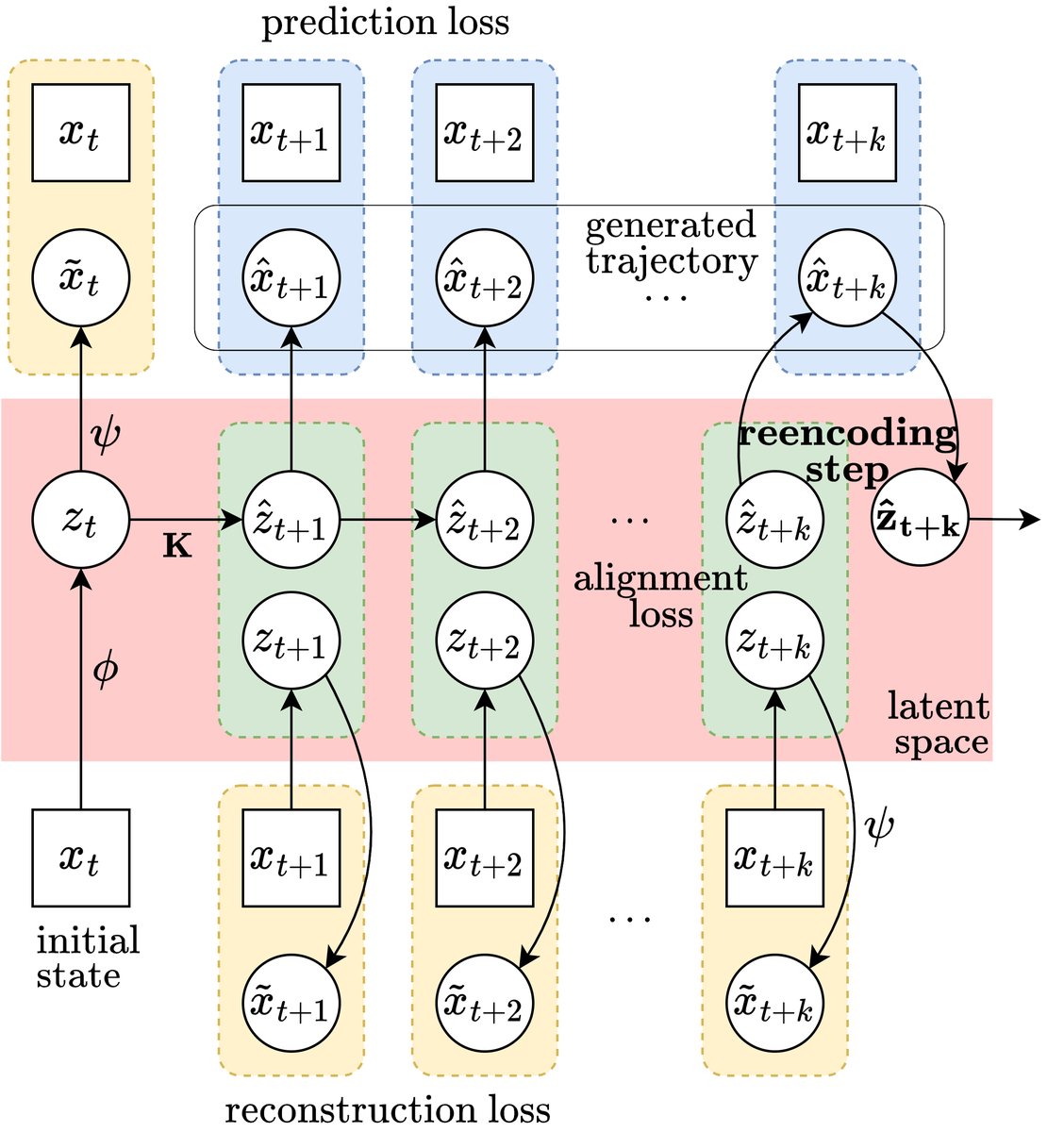

Course Correcting Koopman Representations Accepted at #ICLR2024! We identify problems with unrolling in imagination and propose an unconventional, simple, yet effective solution: periodically "𝒓𝒆𝒆𝒏𝒄𝒐𝒅𝒊𝒏𝒈" the latent. 📄 arxiv.org/abs/2310.15386 Google DeepMind 1/🧵
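A rough sketch of what periodic reencoding could look like at inference time, assuming a learned encoder, decoder, and linear latent operator. The names `encode`, `decode`, `K`, and `reencode_every` are hypothetical placeholders, not the paper's code; the toy system below is chosen only so the example runs end to end.

```python
import numpy as np

def rollout_with_reencoding(x0, encode, decode, K, num_steps, reencode_every):
    """Unroll linear latent dynamics z <- K @ z and return decoded states.

    Every `reencode_every` steps the latent is decoded to state space and
    encoded again (set to 0 to disable). All arguments are illustrative
    assumptions, not an official API.
    """
    z = encode(x0)
    states = []
    for t in range(1, num_steps + 1):
        z = K @ z  # one step of linear latent dynamics
        if reencode_every and t % reencode_every == 0:
            z = encode(decode(z))  # map the latent back through state space
        states.append(decode(z))
    return np.stack(states)

# Toy stand-ins: x_{t+1} = 0.9 * x_t, lifted to the latent (x, x^2).
def encode(x):
    return np.concatenate([x, x ** 2])

def decode(z):
    return z[:1]

K = np.array([[0.9, 0.0],
              [0.0, 0.81]])

trajectory = rollout_with_reencoding(np.array([2.0]), encode, decode, K,
                                     num_steps=5, reencode_every=2)
print(trajectory.ravel())
```

In this toy case the lifted dynamics are exactly linear, so reencoding leaves the rollout unchanged; the point of the sketch is only where the reencoding step sits in the loop.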

Last week, I gave a talk at Mila - Institut québécois d'IA. The talk should be of interest to anyone working on predictive models, particularly in latent space. In collab. with Mahan Fathi Clement Gehring Jonathan Pilault David Kanaa Pierre-Luc Bacon. See you at ICLR 2024 in 🇦🇹! drive.google.com/file/d/1mQSXFa…

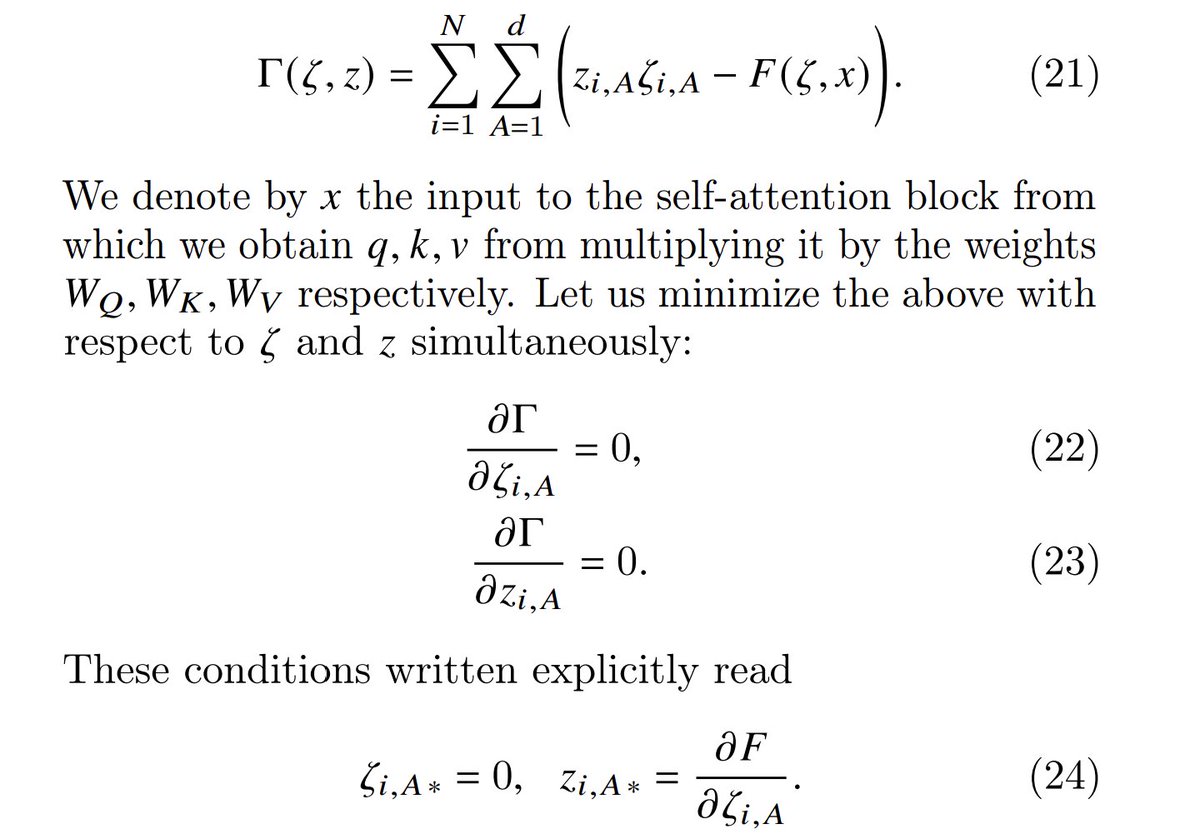

Yann LeCun Thanks for sharing! Another little trick that might amuse you is that we identified a function which upon minimization produces the forward pass of the attention block: