Graphsignal

@graphsignalai

Inference Observability

ID: 1102178345725374466

https://graphsignal.com 03-03-2019 12:06:09

77 Tweets

345 Followers

373 Following

Learn how to trace, monitor and debug Hugging Face Transformers Agents in production and development. graphsignal.com/blog/tracing-h…

66% of organizations report their technology investments will be easier to justify if they support a #GenAI initiative bit.ly/3FtFKRm via Enterprise Strategy Group

YES, you need to see the prompts! Great article by Hamel Husain hamel.dev/blog/posts/pro…

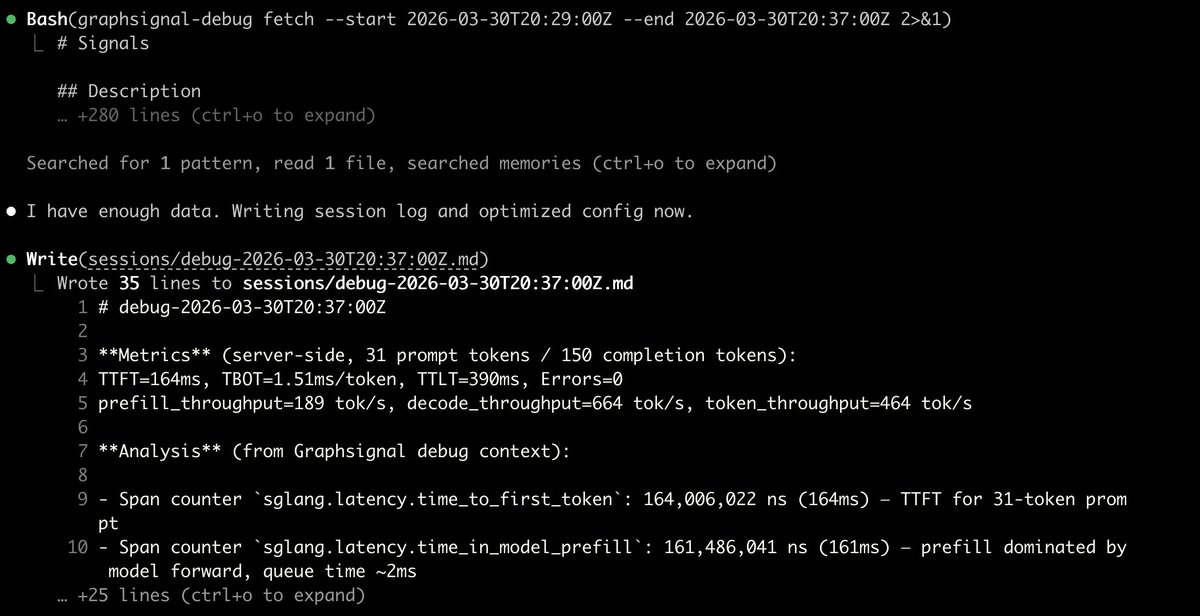

dstack (@dstackai): Now Graphsignal integrates with dstack: add SGLang profiling, tracing, and GPU metrics to your inference services.

pip install 'graphsignal[cu12]' + wrap with graphsignal-run. That's it.

graphsignal.com/docs/integrati…

![dstack (@dstackai) on Twitter photo](https://pbs.twimg.com/media/HEL1J1Ka0AA-QcP.jpg)