Felix Draxler

@felixdrrelax

Machine Learning PostDoc at UC Irvine with Stephan Mandt. PhD from Heidelberg University. Generative models, normalizing flows w/ cell bio applications

ID: 512935817

03-03-2012 08:20:24

19 Tweets

90 Followers

55 Following

We're publishing the code to our ICML 2018 paper "Essentially No Barriers in Neural Network Energy Landscape" (arxiv.org/abs/1803.00885) at github.com/fdraxler/PyTor…. Check out our talk at ICML Conference on Wed 5pm.

PS. When we examine the optimization landscape, we're looking for a linear form of "mode connectivity." In doing so, we build on foundational work by @feldrify et al. & Timur Garipov et al. showing that neural network optima are connected by nonlinear paths of nonincreasing error.

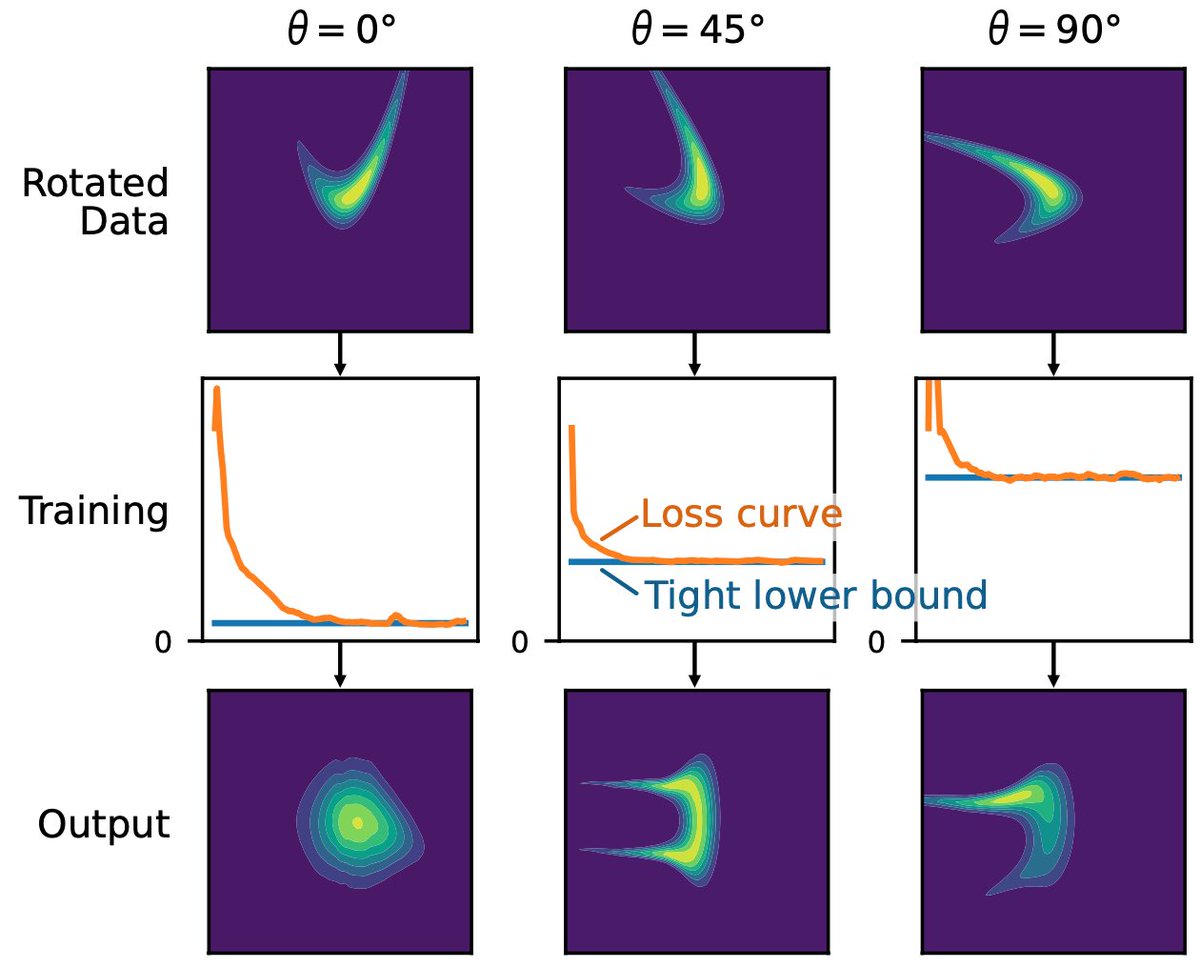

New paper out from the group in Heidelberg that extends the work Johann Brehmer & I did on manifold-learning flows to unrestricted (i.e., non-invertible) autoencoders. They also suggest a different way to avoid a failure mode we identified: arxiv.org/abs/2306.01843

Our new preprint trains any neural network architecture as a generative model via maximum likelihood: arxiv.org/abs/2310.16624. Free-form flows (FFF) work well and sample fast. We showcase this on simulation-based inference (SBI) and molecule generation. Thanks to Peter Sorrenson, Armand, Lea and Ullrich!