Wanrong Zhu

@zhuwanrong

Research Scientist @AdobeResearch | PhD @UCSB, BSc @PKU1898

ID: 1180383762351157248

http://wanrong-zhu.com 05-10-2019 07:27:29

52 Tweets

739 Followers

216 Following

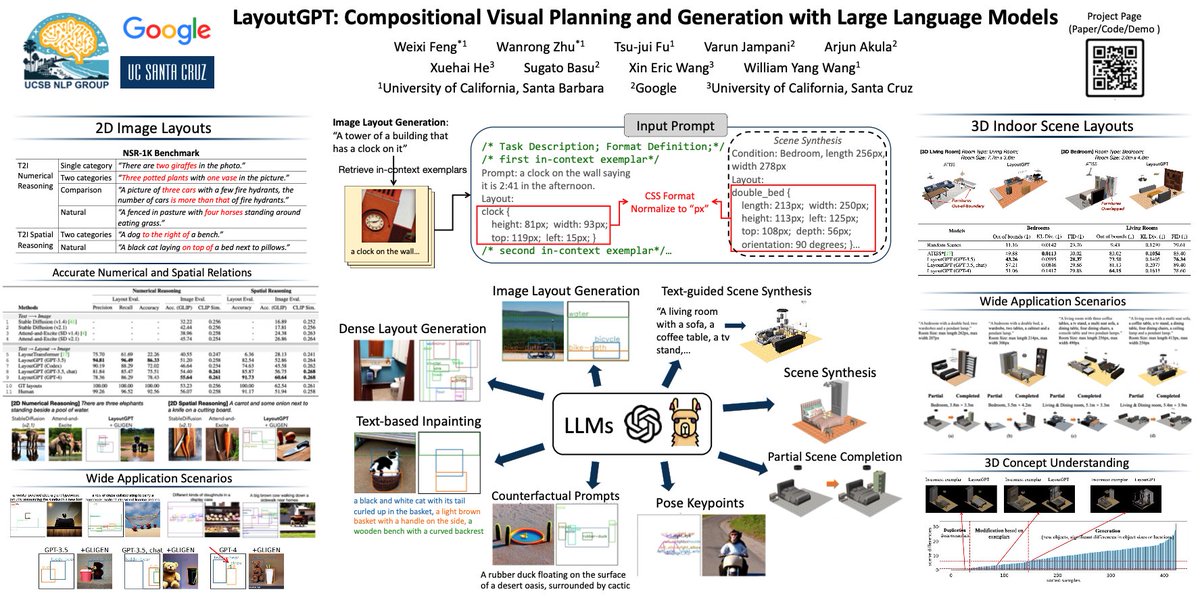

Thank you Aran Komatsuzaki for sharing our work! Our project page and code are now available. Project page: layoutgpt.github.io Code: github.com/weixi-feng/Lay…

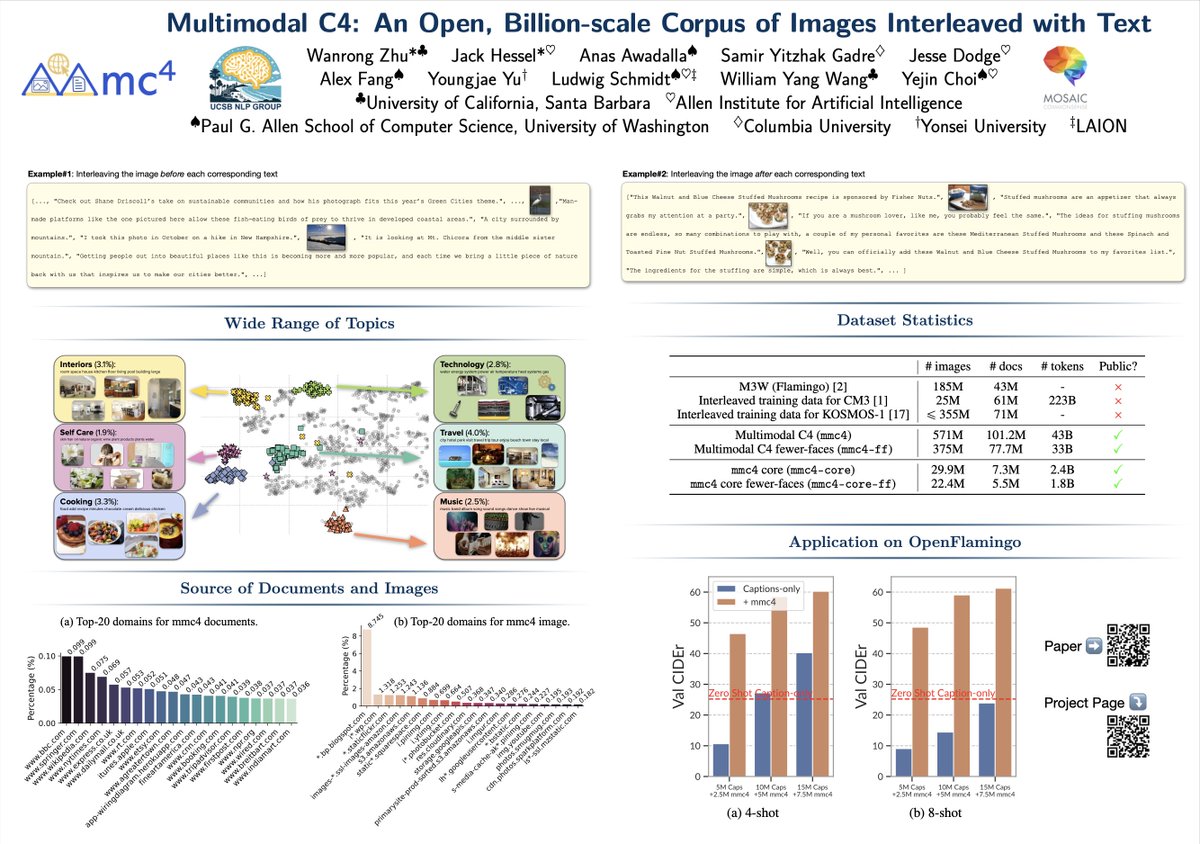

Our mmc4 is accepted to #NeurIPS2023 D&B Track. Thanks again to my amazing collaborators, especially Jack Hessel! See you guys in New Orleans 😆

How does an LLM walk in New York City? Here’s an interactive demo by Raphael Schumann: map2seq.schumann.pub/velma/demo/ Talk to them at #NeurIPS2023

Congrats Raphael Schumann, Wanrong Zhu @NeurIPS, and Weixi Feng on the accepted #AAAI2024 paper on LLMs for decision making. arxiv.org/abs/2307.06082

Our NeurIPS paper about understanding LLMs' in-context learning ability will be presented by my amazing coauthor Wanrong Zhu @NeurIPS at poster session 2 on Tuesday, 5:15-7:15 pm. Please drop by if you are interested! I won't be there in person but I'm happy to talk more about it online😀

Congratulations Drs. Tsu-Jui Fu, Alon Albalak, Wanrong Zhu @NeurIPS, and Yi-Lin (Pascal) Tuan on your graduation! The world of artificial intelligence is better because of your contributions. To your continued success! 🚀