Umar Ateeq

@umarateeq_

Co-Founder Virid Future || NLP Engineer || Data Scientist

ID: 1275185630411137026

https://www.linkedin.com/in/umarateeq 22-06-2020 21:55:33

23 Tweets

32 Followers

100 Following

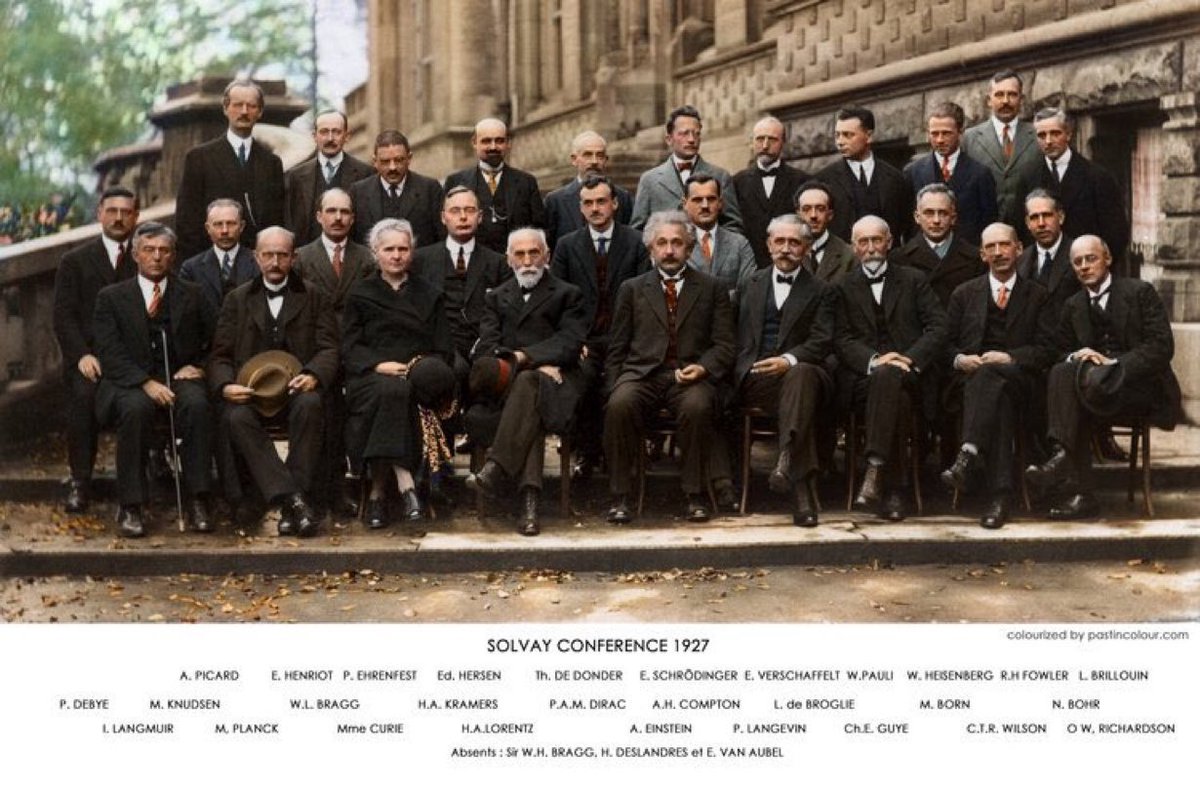

Marie Curie with Pauli, Bohr, Albert Einstein, Schrödinger, Dirac, Heisenberg, Lorentz and Planck at the Solvay Conference, 1927