WOOSUNG CHOI

@woosungchoi3

ID: 1207259612014997504

https://ws-choi.github.io/cv 18-12-2019 11:21:59

650 Tweets

289 Followers

232 Following

🔊Our new work: SoundReactor, pushing V2A generation toward “Neural Sound Engine”! We tackle “frame-level online” V2A, i.e., without accessing any future video frames ✅Simple design ✅Full-band stereo with AV sync ✅Low frame-level latency on 30FPS 🎧koichi-saito-sony.github.io/soundreactor/

🎓 Happy to share: CMU is incorporating our book 《The Principles of Diffusion Models》 as a core resource for their diffusion & flow-matching course materials. If you’re teaching or learning diffusion models — or want a systematic, principled handbook — feel free to use it too. pic.x.com/S034V7OX1a

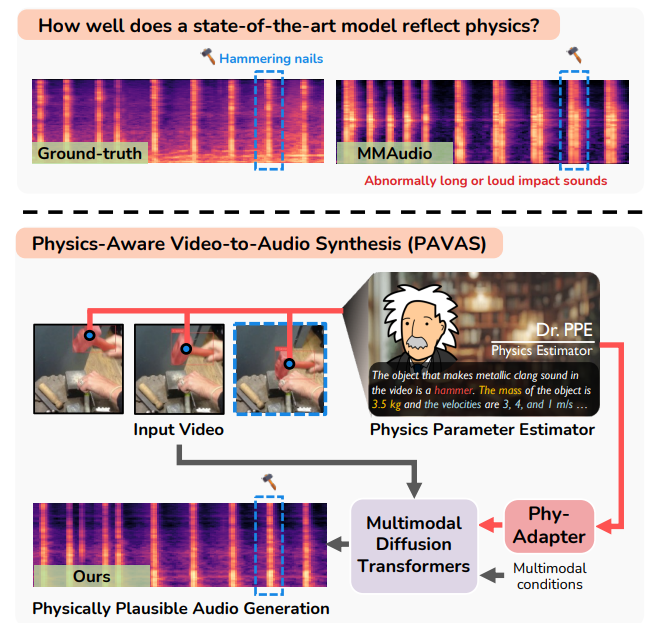

🎉PAVAS, a framework for generating physically plausible audio from video, by integrating physics estimation at #CVPR2026! Led by our intern Hyun-Bin Oh (x.gd/pE0IB), in collaboration with 過密都市, Tae-Hyun Oh, and Yuki Mitsufuji. 🎧&📝: x.gd/ObKwe

Audio VAEs + VQ-VAEs designed for #GenAI! * Ultra-fast encoding for on-the-fly training pipelines, * ~2x more compression (13Hz) w/frontier quality, * Any format (mono, stereo LR, MS, mel, raw), * Cont. or discrete latents. 👏 Jonah Casebeer! w/Ge Zhu Zhepei Wang, me