(✿◡‿◡) 🍁

@soccerff22

ID: 80158383

05-10-2009 23:42:01

3.3K Tweets

223 Followers

2.2K Following

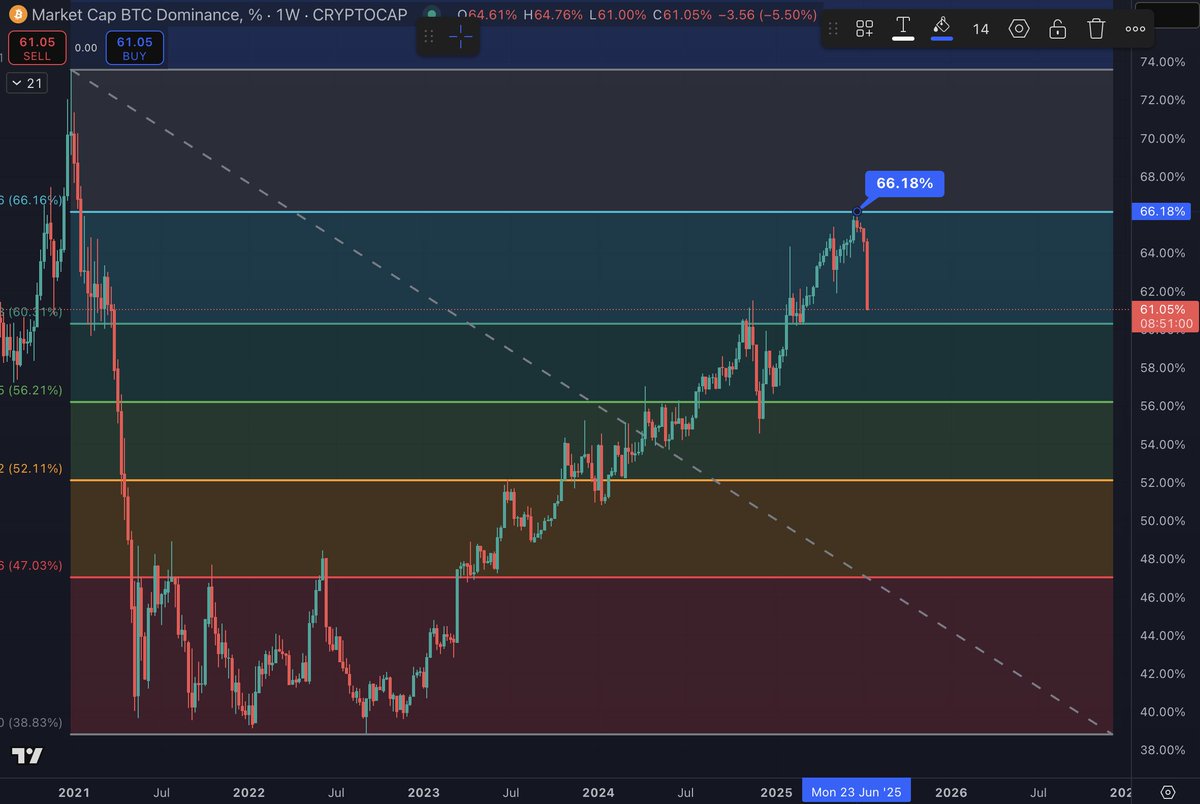

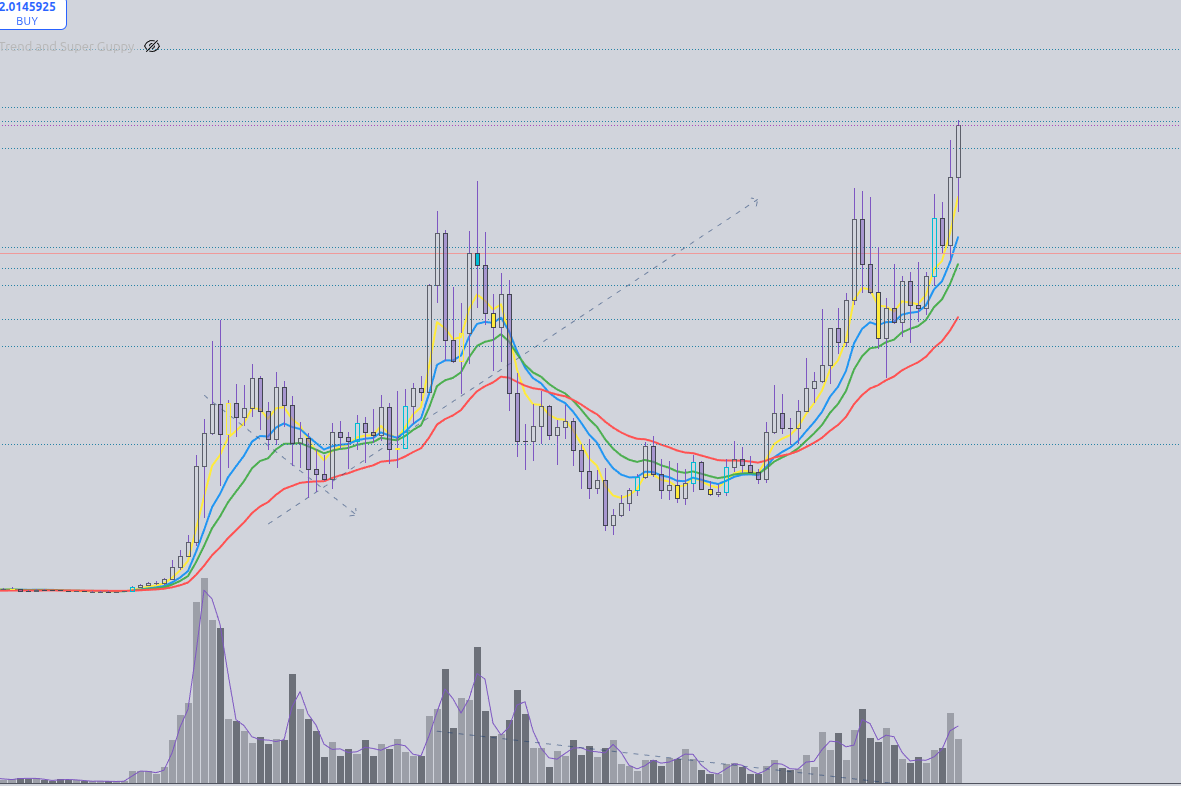

My guess: ETH stakers will be rewarded with #LINEA Declan Fox

My Etherscan Moment: Realizing I could check ANY wallet's balance without asking. No more 'trust me, bro'—just pure transparency. ✨ 10 years of building trust. Thank you, etherscan.eth! #10YearsofEtherscan