Sunghwan Kim

@seonghwan_57

M.S. student, Yonsei University

ID: 1749046906557444096

https://kimsh0507.github.io/ 21-01-2024 12:31:25

26 Tweets

21 Followers

410 Following

Everything you love about generative models — now powered by real physics! Announcing the Genesis project — after a 24-month large-scale research collaboration involving over 20 research labs — a generative physics engine able to generate 4D dynamical worlds powered by a physics

Scaling laws in deep RL? Turns out that batch size, learning rate, and UTD (update-to-data) for getting the most efficient and scalable deep RL have predictable relationships. Check out the analysis in new work by Oleg Rybkin & collaborators: arxiv.org/abs/2502.04327

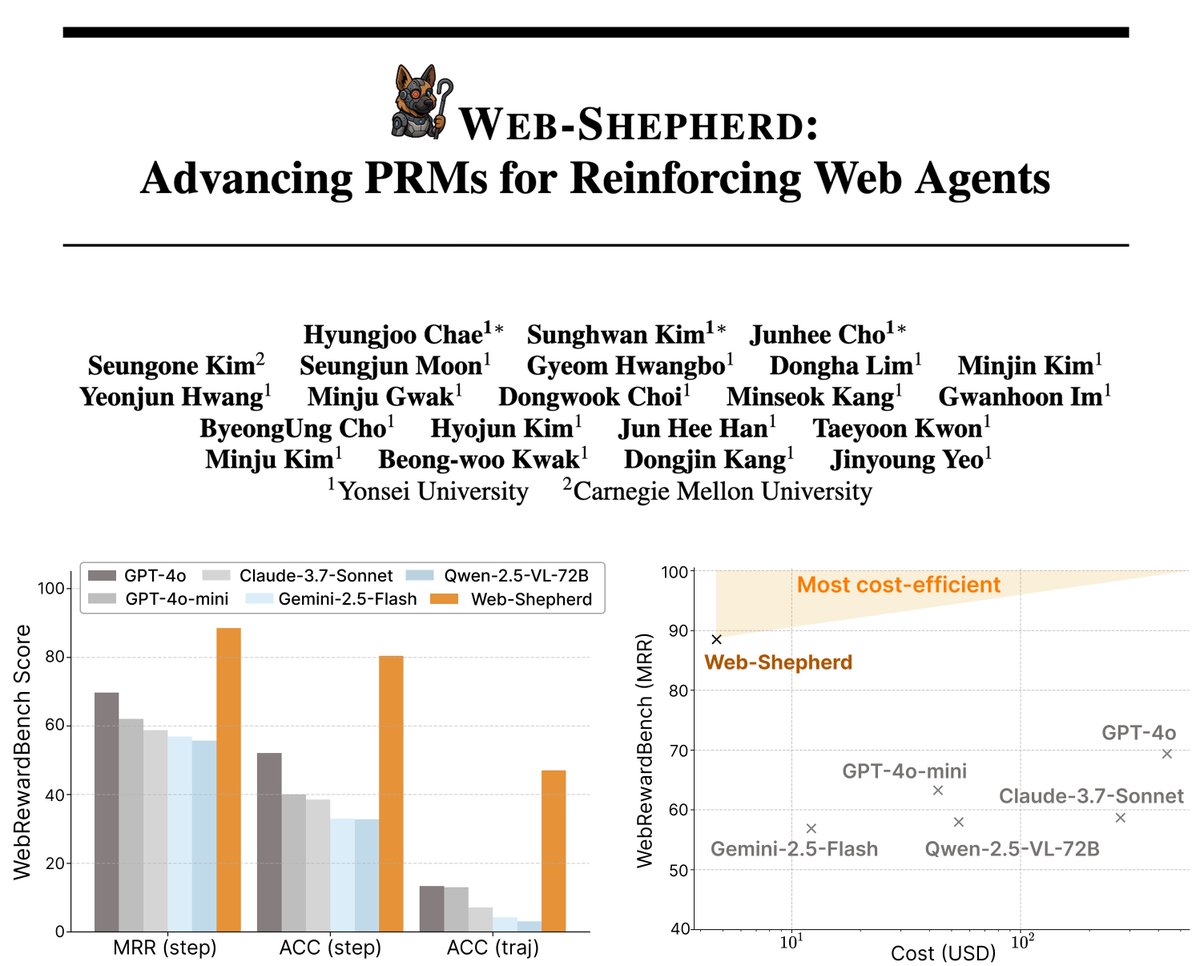

Would you like to enhance your web agent? Check out our work! Web-Shepherd is a (process) reward model designed for interactive web environments beyond single-turn tasks. Huge thanks to Hyungjoo Chae for the amazing collaboration!