Matt Upson

@m_a_upson

Founder @mantisnlp. @PublicDigitalHQ Network. Fellow @SoftwareSaved. Previously @gdsteam. Mostly #NLP and #MLOps.

ID: 1394290140

http://www.machinegurning.blog

Joined 01-05-2013 10:27:26

2.2K Tweets

1.1K Followers

1.1K Following

Super nice Transformer breakdown from hacker lax . I'll be reading this with my cup of tea later 🫖

🔥Preprint out: ‘Toward a realistic model of speech processing in the brain with self-supervised learning’: arxiv.org/abs/2206.01685 by J. Millet*, Charlotte Caucheteux @ICML24* and our wonderful team. The 3 main results are summarized below 👇

📢 If you are interested in how to scale 🚀 multilabel classification to thousands or millions of labels 🏷 I am giving a talk on Sunday at PyData London titled “Extreme multilabel classification in the biomedical NLP domain” 🗣 Also super excited to be back in London 🇬🇧

Great talk by Nick Sorros on Multilabel Classification at scale for biomedical publications. The trained model, BertMESH, is available on Hugging Face. PyData London #pydatalondon Wellcome

Great talk by Nick Sorros on Extreme Multilabel Classification: classification problems with thousands or millions of classes.
- svm.sparsify() is useful
- TFIDF vocabs can be made smaller with BPE/WordPiece
- "Multilabel attention" is used in these models
#PyDataLDN
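The `svm.sparsify()` tip most likely refers to scikit-learn's linear models, where `sparsify()` converts a fitted `coef_` array into a SciPy sparse matrix. A minimal sketch, assuming scikit-learn and a hypothetical toy corpus (not the talk's data and not BertMESH): one L1-penalised `LinearSVC` per label via `OneVsRestClassifier`, each sparsified after training. With thousands of labels, storing only the non-zero weights is where the memory saving comes from.

```python
# Sketch: one-vs-rest linear SVMs over TF-IDF features, with each
# per-label weight vector converted to a sparse matrix via sparsify().
from scipy import sparse
from sklearn.feature_extraction.text import TfidfVectorizer
from sklearn.multiclass import OneVsRestClassifier
from sklearn.preprocessing import MultiLabelBinarizer
from sklearn.svm import LinearSVC

# Hypothetical toy corpus: every label has both positive and negative
# examples, so a real LinearSVC is fitted for each label.
docs = [
    "protein folding in yeast cells",
    "gene expression in cancer cells",
    "clinical trial of cancer drug",
    "yeast genome sequencing study",
    "drug dosage in clinical patients",
    "protein expression in tumour tissue",
]
labels = [
    {"proteins", "yeast"},
    {"genes", "cancer"},
    {"cancer", "clinical"},
    {"yeast", "genes"},
    {"clinical"},
    {"proteins", "cancer"},
]


def train_sparse_ovr(docs, labels, C=1.0):
    vectorizer = TfidfVectorizer()
    X = vectorizer.fit_transform(docs)       # sparse TF-IDF document matrix
    binarizer = MultiLabelBinarizer()
    Y = binarizer.fit_transform(labels)      # (n_docs, n_labels) 0/1 indicator matrix
    # The L1 penalty drives many weights to exactly zero; liblinear needs dual=False for it.
    clf = OneVsRestClassifier(LinearSVC(penalty="l1", dual=False, C=C))
    clf.fit(X, Y)
    for estimator in clf.estimators_:
        estimator.sparsify()                 # coef_ becomes a scipy.sparse matrix
    return vectorizer, binarizer, clf


vectorizer, binarizer, clf = train_sparse_ovr(docs, labels)
all_sparse = all(sparse.issparse(e.coef_) for e in clf.estimators_)
stored = sum(e.coef_.nnz for e in clf.estimators_)
dense = sum(e.coef_.shape[1] for e in clf.estimators_)
print(f"sparse weights: {all_sparse}, non-zero weights stored: {stored} of {dense}")

pred = clf.predict(vectorizer.transform(["yeast protein folding"]))
print(binarizer.inverse_transform(pred))
```

The L1 penalty is a deliberate choice: `sparsify()` only saves memory when most weights are exactly zero, which an L2-penalised SVM rarely produces.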

I've got a ~surprise~ video for you today! I had a great conversation with Nick Sorros and Matt Upson from @mantisnlp about reproducibility, open source, data ethics & more. I hope you enjoy it as much as I did. 😁▶️ youtube.com/watch?v=uztK9g…

✨Here is a quick example of a retrieval-based #ChatGPT equivalent you can easily build using your own data and the excellent library from deepset, makers of Haystack

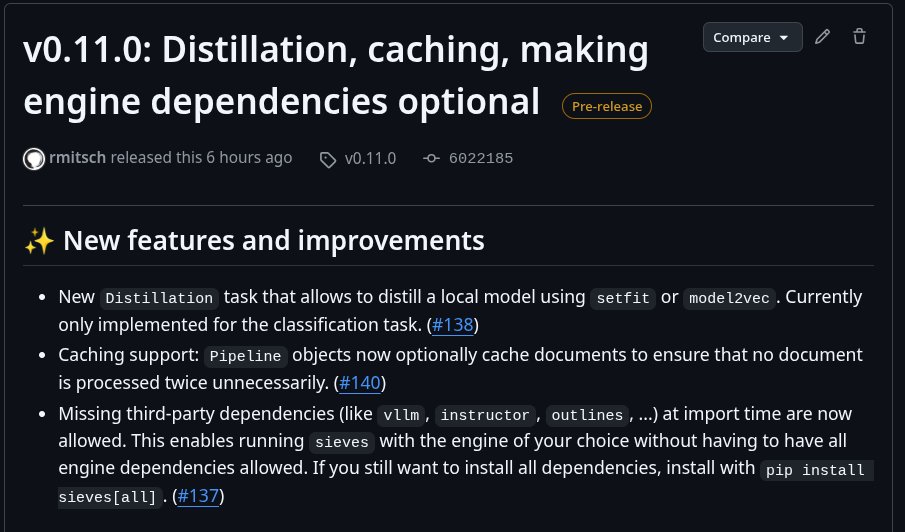
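The Haystack code itself is only in the image above. As a library-agnostic sketch of the same retrieve-then-prompt idea (this is not Haystack's API), the snippet below ranks documents with scikit-learn TF-IDF and cosine similarity and builds a prompt from the top passages. The documents are hypothetical, and the call to a generative model is left out since none is specified here.

```python
# Sketch of retrieval-augmented prompting: retrieve the most relevant
# passages for a query, then place them in a prompt for a generator.
from sklearn.feature_extraction.text import TfidfVectorizer
from sklearn.metrics.pairwise import linear_kernel


def retrieve(query, documents, top_k=2):
    vectorizer = TfidfVectorizer()
    doc_matrix = vectorizer.fit_transform(documents)
    query_vec = vectorizer.transform([query])
    # TF-IDF rows are L2-normalised by default, so the dot product is cosine similarity.
    scores = linear_kernel(query_vec, doc_matrix).ravel()
    ranked = scores.argsort()[::-1][:top_k]
    return [documents[i] for i in ranked]


def build_prompt(query, passages):
    context = "\n".join(f"- {p}" for p in passages)
    return (
        "Answer the question using only the context below.\n\n"
        f"Context:\n{context}\n\nQuestion: {query}\nAnswer:"
    )


# Hypothetical "own data".
documents = [
    "Haystack is an open source NLP framework made by deepset.",
    "A cup of tea is best enjoyed in the afternoon.",
    "Inference endpoints host transformer models.",
]
query = "Which company makes Haystack?"
passages = retrieve(query, documents, top_k=1)
prompt = build_prompt(query, passages)
print(prompt)  # this string would then be sent to a generative model
```

A dense embedding retriever would usually replace TF-IDF in practice; TF-IDF just keeps the sketch dependency-light.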

We recently switched to using Hugging Face inference endpoints as it makes it much easier to deploy and manage the models for our clients. We also created a nice CLI tool called hugie 🐻 to make inference endpoints available from the command line 🚀

Here is a nice CLI tool we created on top of the Hugging Face inference endpoints, which allows you to deploy any transformer model from the command line or a CI/CD pipeline. Kudos mainly to Matt Upson 👏
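hugie's own commands are not shown in these tweets, so the snippet below is not its interface. As a hedged sketch of what querying a deployed Hugging Face Inference Endpoint can look like with only the standard library: POST a JSON body with an `inputs` field, authenticated with a bearer token. The endpoint URL and token are hypothetical placeholders.

```python
# Sketch: build and send a JSON inference request to a deployed endpoint.
import json
import urllib.request


def build_endpoint_request(endpoint_url, token, text):
    payload = json.dumps({"inputs": text}).encode("utf-8")
    return urllib.request.Request(
        endpoint_url,
        data=payload,
        headers={
            "Authorization": f"Bearer {token}",  # access token for the endpoint
            "Content-Type": "application/json",
        },
        method="POST",
    )


def query_endpoint(endpoint_url, token, text, timeout=30):
    request = build_endpoint_request(endpoint_url, token, text)
    with urllib.request.urlopen(request, timeout=timeout) as response:
        return json.loads(response.read().decode("utf-8"))


# Hypothetical placeholders; a real call would be:
# query_endpoint(ENDPOINT_URL, TOKEN, "I love my cup of tea")
ENDPOINT_URL = "https://my-endpoint.example.invalid"
TOKEN = "hf_xxx"
request = build_endpoint_request(ENDPOINT_URL, TOKEN, "I love my cup of tea")
```

Separating request construction from sending keeps the request easy to inspect or test without network access.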

![Kim Calders (@kimcalders) on Twitter photo](https://pbs.twimg.com/media/FkZjyhQXEAEbr0x.jpg)

After more than five years of work: our new publication on how to almost double woodland carbon overnight; or, when models work a bit too well.

Full paper: bit.ly/3uXiG8D

[1/10]