Tommy Shaffer Shane

@tommyshane

AI Policy Manager @longresilience, doing a PhD on AI going wrong @kingsdh

previously @scitechgovuk, @firstdraftnews, @houseofcommons

ID: 41828876

22-05-2009 15:06:23

557 Tweets

741 Followers

1.1K Following

"Google Autocompletion appears to be a patchwork of blocks and related suppressions" Great paper by Richard Rogers on AI and the reliance on 'patchwork', where problematic outputs are quietly blocked with 'patches' that cover over problems with the system: journals.sagepub.com/doi/10.1177/20…

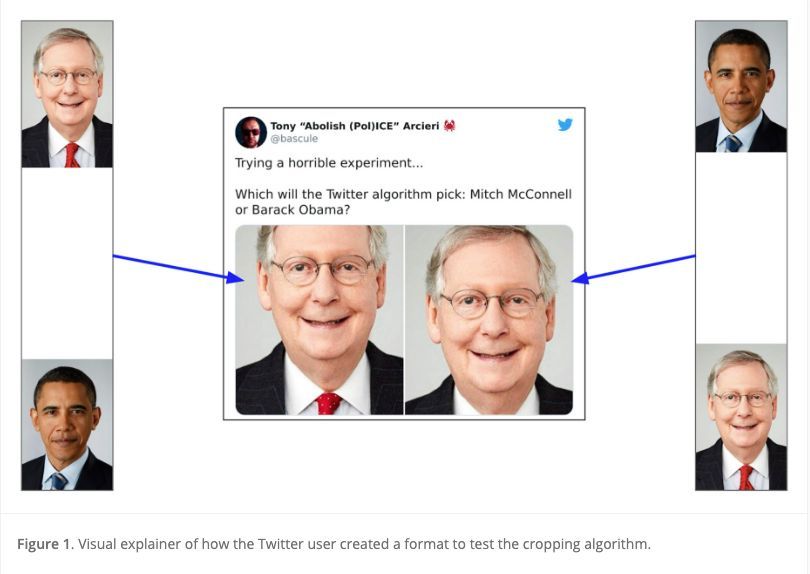

In the 2nd commentary for our SI on AI, Tommy Shaffer Shane shifts attention from "algorithmic ‘bugs’ as technical accidents that are simply found, to how we come to be troubled by AI going wrong, and therefore how publics form and acquire the capacity to alter algorithms’ design".

In this commentary, Tommy Shaffer Shane takes up the example of an #AI incident in September 2020, when a Twitter user created a ‘horrible experiment’ to demonstrate the racist bias of Twitter's algorithm for cropping images. Check it out! ➡️ buff.ly/46WaOET

A new paper from our PhD researcher Tommy Shaffer Shane on AI incidents and ‘networked trouble’: kingsdh.net/2023/12/20/ai-…

🧐 Ever wondered about the fallout of #AI gone wrong? In this commentary, Tommy Shaffer Shane homes in on AI incidents, using a 2020 Twitter algorithm bias fiasco as a case study. Read it here! ➡️ buff.ly/46WaOET

In this article, Tommy Shaffer Shane argues for a research agenda focused on AI incidents – examples of AI going wrong and sparking #controversy – and how they are constructed in online environments. Read it here! buff.ly/46WaOET