Excited to share that our paper #WordAsImage was accepted to #SIGGRAPH2023 🥳

Word-as-Image allows you to visually express the semantic meaning of a word in a creative way 👩‍🎨 🎨

For more details (and our Hugging Face demo), check out our project page 👉 wordasimage.github.io/Word-As-Image-…

From interactive hair simulation to an #AI system that can auto-generate realistic 3D character motion by watching 2D videos, check out the 19 papers from #NVIDIAResearch accepted into #SIGGRAPH2023.

Read the blog with links to each paper: nvda.ws/3nniRKc

Print 🖨️ beautiful anisotropic appearances ✨ with our orientable dense cyclic infill described in our ACM SIGGRAPH paper.

With C. Zanni, J. Martínez, P.-A. Hugron, and Sylvain Lefebvre, at Inria Nancy - Grand Est

Article: xavierchermain.github.io/data/pdf/Cherm…

Supplemental video: youtu.be/aUDzZrlRnNU

Excited to share our #SIGGRAPH2023 work on accelerating inverse rendering with ReSTIR. Since we render a sequence of frames during optimization, we can reuse samples from previous frames, just like in real-time rendering. 1/3

weschang.com/publications/r…

🎉 Excited to share HumanRF #SIGGRAPH2023 🎉

It compactly reconstructs full humans in action and enables novel-view synthesis. Check out the new high-quality dataset ActorsHQ too!

Code and data follow soon.

Project page: synthesiaresearch.github.io/humanrf/

Paper: arxiv.org/abs/2305.06356

Acknowledging recent #xR festival closures, the #SIGGRAPH2023 #VR Theater stands in support of our community and medium, and remains set on furthering immersive futures. Tell your stories in worlds without walls or frames: s2023.siggraph.org/program/vr-the…

#VFX #techconference #VR #immersive

Yesterday was the last day to get in the raffle to win one of 50 prizes, just for registering for #SIGGRAPH2023. Good luck if you entered. And don't forget to play the Scavenger Hunt while you're there!!! #siggraphscavengerhunt. Because, if you like prizes, we've got prizes.

Thank you, AK, for sharing our latest work, AniFaceDrawing, a #SIGGRAPH2023 conference paper. The project page is here:

jaist.ac.jp/~xie/AniFaceDr…

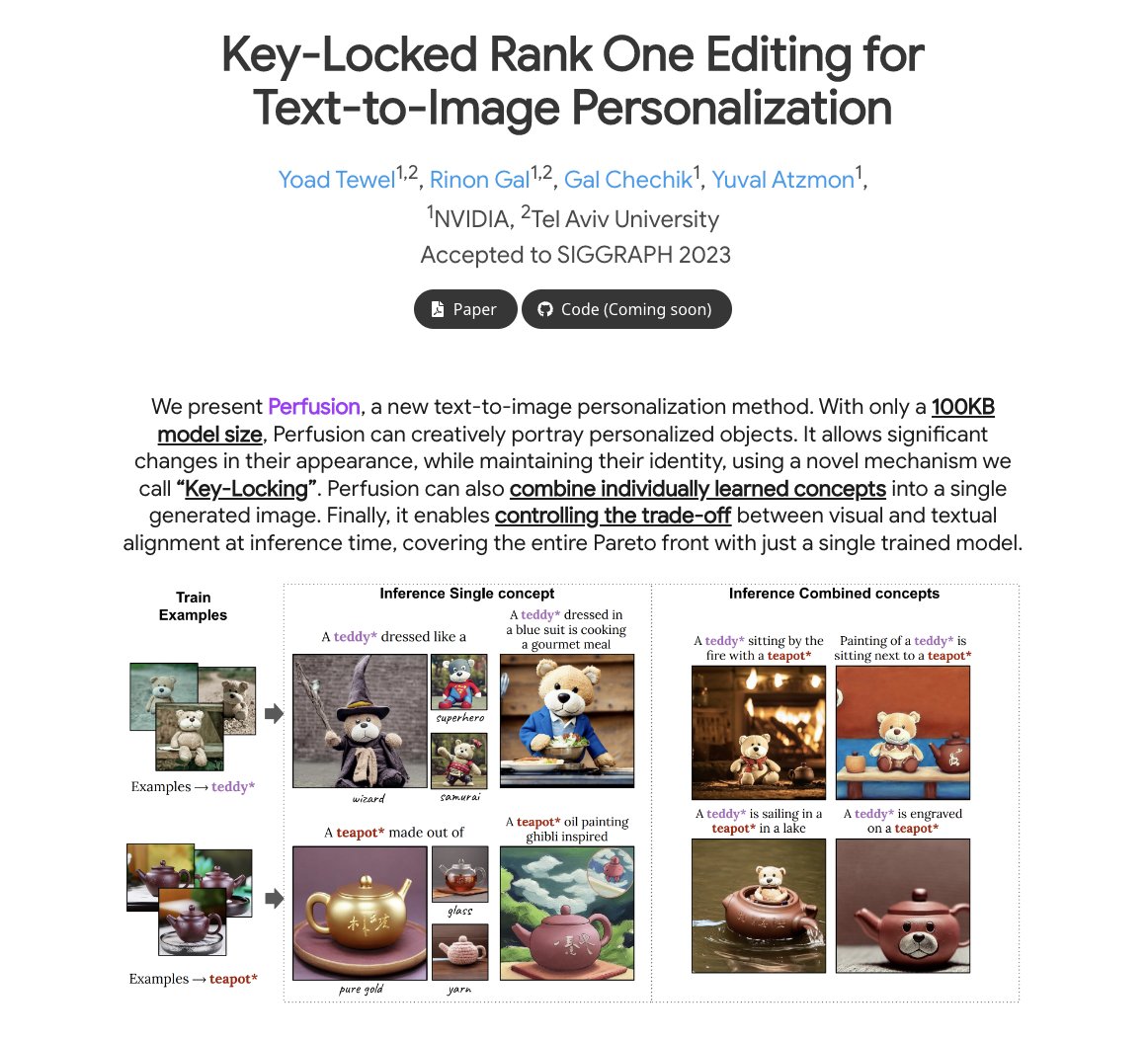

Thank you, AK! I'm thrilled to announce that our work, Perfusion, has been accepted to #SIGGRAPH2023! 🥳 This project was completed during my internship at NVIDIA AI Research with Rinon Gal, Gal Chechik, and Yuval Atzmon.

🚀 Excited to share our new #SIGGRAPH2023 paper 'NeRF-Texture', a novel method for capturing & synthesizing real-world textures with NeRFs from multi-view images.

Check it out 👉 yihua7.github.io/NeRF-Texture-w…

#ComputerGraphics #NeRF #TextureSynthesis

Estimating a full-body pose from only the headset and controllers is ambiguous. Environment information can help resolve this. In our #SIGGRAPH2023 paper the avatar is generated from only the 3 shown coordinate frames (no cameras) + height of the environment (green dots). (1/4)👇

Happy to share our latest #SIGGRAPH2023 paper!

We present “Stealth Shaper,” a framework that optimizes the surface’s reflection property, while effectively preserving the original geometry.

Project page: kenji-tojo.github.io/publications/s…

Meet 2023 Team Leader Jackie Allex (she/her) from Northeastern University, majoring in Computer Science and Media Arts.

#SIGGRAPH2023 #ProudToBeSV

Want to draw a face on inanimate objects, or arms on a flamingo? 🎨

I’m happy to share our latest research project, where we looked at how we can assist users in creating video doodles: hand-drawn animations added on top of videos 📽️

#SIGGRAPH2023