Sung-Feng Huang

@sungfenghuang

National Taiwan University | Speech Processing & Machine Learning Lab @ntu_spml

ID: 378591891

23-09-2011 13:32:43

22 Tweets

63 Followers

256 Following

New work with Ron Weiss, Eric Battenberg, Soroosh Mariooryad, Durk Kingma -- finally achieving what Yuxuan Wang and I set out to do in 2016 before switching to spectrograms: direct waveform generation from characters. (1/7) abs: arxiv.org/abs/2011.03568 samples: google.github.io/tacotron/publi…

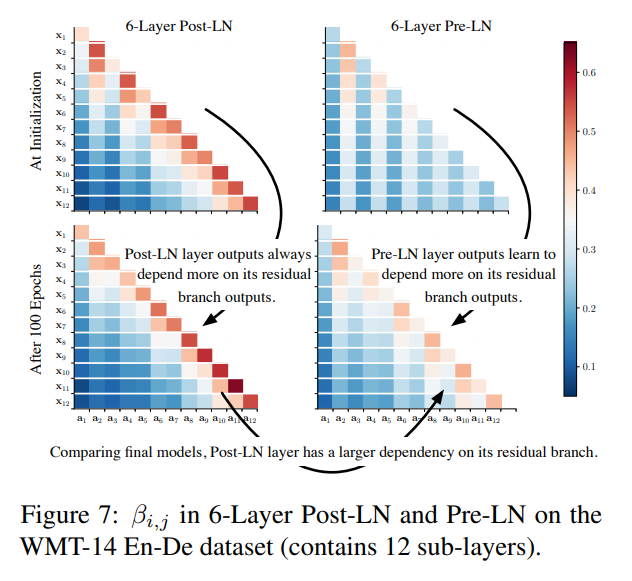

Understanding the Difficulty of Training Transformers (arxiv.org/pdf/2004.08249…, Liyuan Liu (Lucas)) Studies the training instability of transformers. Dives into the dark arts of NN stability and the impact of layer norm / residuals. Isolates residual paths as a main cause. Trains 60-layer transformers.