subho ghosh

@subhoghosh02

22. seeking local optima in ML & global maxima in life. training on life

ID: 1201157604212236288

http://Github.com/iGhoshSubho 01-12-2019 15:14:32

6.6K Tweets

1.1K Followers

407 Following

so reward hacking gonna brrrrr. will brown will be joining us for the next pod ⚡ you're not ready for this. more soon!

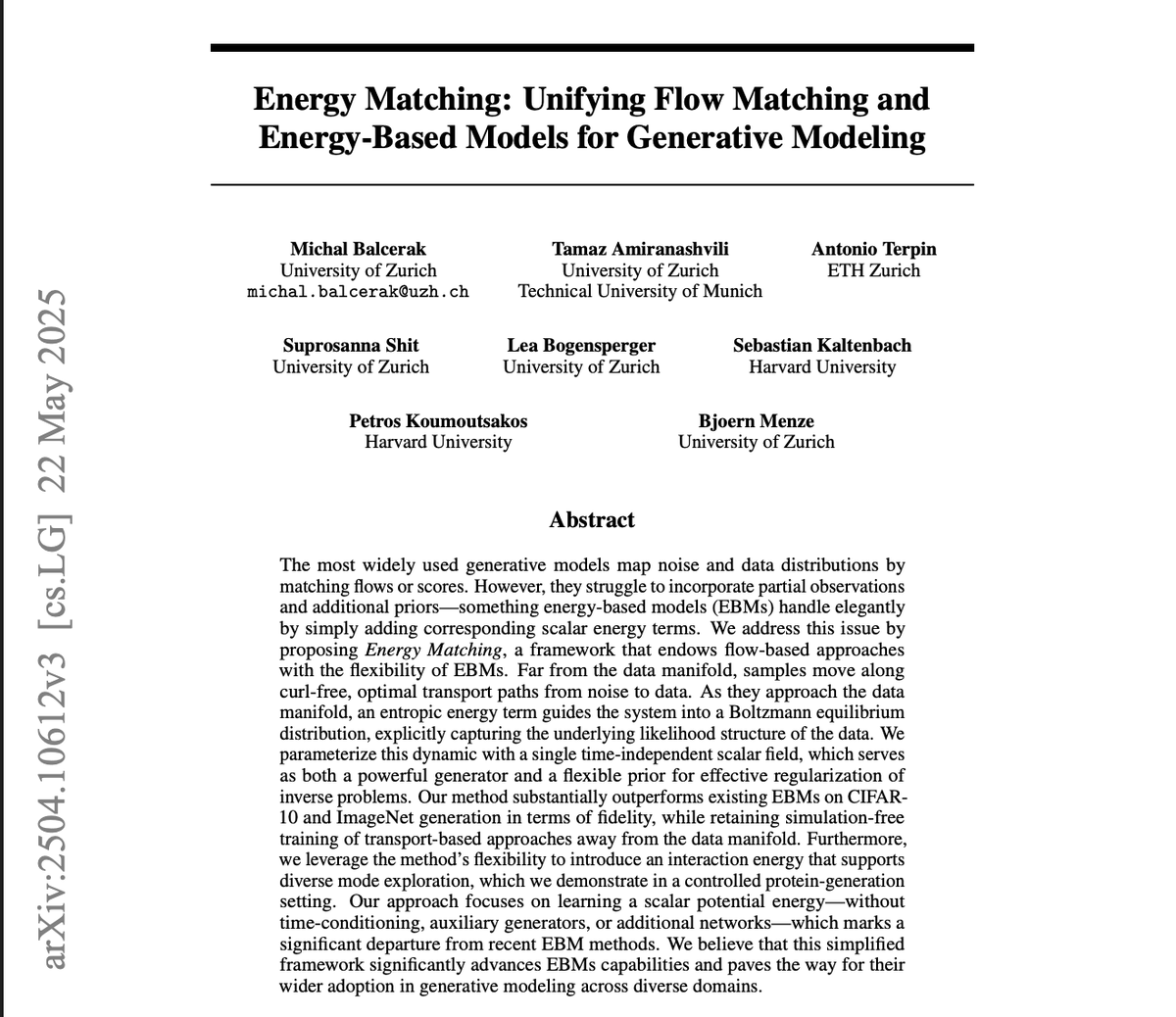

New paper on the generalization of Flow Matching arxiv.org/abs/2506.03719 🤯 Why does flow matching generalize? Did you know that the flow matching target you're trying to learn **can only generate training points**? with Quentin Bertrand, Anne Gagneux & Rémi Emonet 👇👇👇
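A minimal sketch of the claim in the quoted tweet, assuming the common flow matching setup: linear path x_t = (1 - t) x0 + t x1, Gaussian source x0 ~ N(0, I), and the target taken to be the empirical training set. This is not code from the paper. The helper names `optimal_velocity` and `sample` and the toy 2-D `train` points are hypothetical. Under these assumptions the exact regression target has a closed form (a softmax-weighted pull toward training points), and integrating it from noise lands on one of the training points.

```python
import math
import random


def optimal_velocity(x, t, data):
    """Exact flow matching target u*(x, t) = E[x1 - x0 | x_t = x] for the path
    x_t = (1 - t) * x0 + t * x1, with x0 ~ N(0, I) and x1 drawn uniformly from `data`
    (assumed setup, not taken from the paper)."""
    s = 1.0 - t
    # Log posterior weights of each training point: log N(x; t * x1_i, s^2 I), up to a constant.
    logw = [-sum((xk - t * dk) ** 2 for xk, dk in zip(x, d)) / (2.0 * s * s) for d in data]
    m = max(logw)
    w = [math.exp(lw - m) for lw in logw]  # subtract the max for numerical stability
    z = sum(w)
    # Posterior mean E[x1 | x_t = x]: a softmax-weighted average of the training points.
    mean_x1 = [sum(wi * d[k] for wi, d in zip(w, data)) / z for k in range(len(x))]
    # Since x0 = (x - t * x1) / s, we get x1 - x0 = (x1 - x) / s, so u* = (E[x1 | x] - x) / s.
    return [(mk - xk) / s for mk, xk in zip(mean_x1, x)]


def sample(data, steps=1000, t_end=0.999, seed=0):
    """Euler-integrate dx/dt = u*(x, t) from Gaussian noise at t = 0 up to t_end.
    Integration stops just short of t = 1 because u* is singular there."""
    rng = random.Random(seed)
    x = [rng.gauss(0.0, 1.0) for _ in range(len(data[0]))]
    dt = t_end / steps
    for i in range(steps):
        v = optimal_velocity(x, i * dt, data)
        x = [xk + dt * vk for xk, vk in zip(x, v)]
    return x


# Hypothetical toy "training set" of three 2-D points.
train = [[2.0, 0.0], [-2.0, 0.0], [0.0, 2.0]]
for seed in range(3):
    x = sample(train, seed=seed)
    nearest = min(math.dist(x, d) for d in train)
    print([round(v, 3) for v in x], "distance to nearest training point:", round(nearest, 4))
```

Every run ends essentially on top of a training point, which is the memorization behavior the tweet points at: a network that fit this target exactly would only reproduce the training set, so generalization has to come from the model not matching it perfectly. The paper at the arXiv link is the place to check what it actually attributes that to.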

subho ghosh

true. was just noting down all the topics. piyushmaharanacats.blogspot.com/2025/06/rabbit…