Siva Reddy

@sivareddyg

Assistant Professor @Mila_Quebec @McGillU @ServiceNowRSRCH; Postdoc @StanfordNLP; PhD @EdinburghNLP; Natural Language Processor #NLProc

ID:56686035

https://sivareddy.in 14-07-2009 12:56:42

1.7K Tweets

4.9K Followers

973 Following

Monograph on 'Formal Aspects of Language Modeling' from Ryan David Cotterell et al.

arxiv.org/abs/2311.04329

It would be so nice if everyone read this and we had shared foundations. Particularly for interpretability.

Mila welcomes this morning's announcement by Canadian Prime Minister Justin Trudeau of a historic investment of over $2 billion in AI, including a strategic national computing infrastructure, and the establishment of an institute dedicated to AI safety research.

We are excited to host our next UNC-Chapel Hill NLP/ML Colloquium by Dr. Siva Reddy (@sivareddyg) from MilaQuebec @McGillU, talking about:

'Paradoxes in Transformer Language Models: Masking, Positional Encodings, and Routing'!

Happening this Wednesday April 10th, 2-3pm ET in FB141.

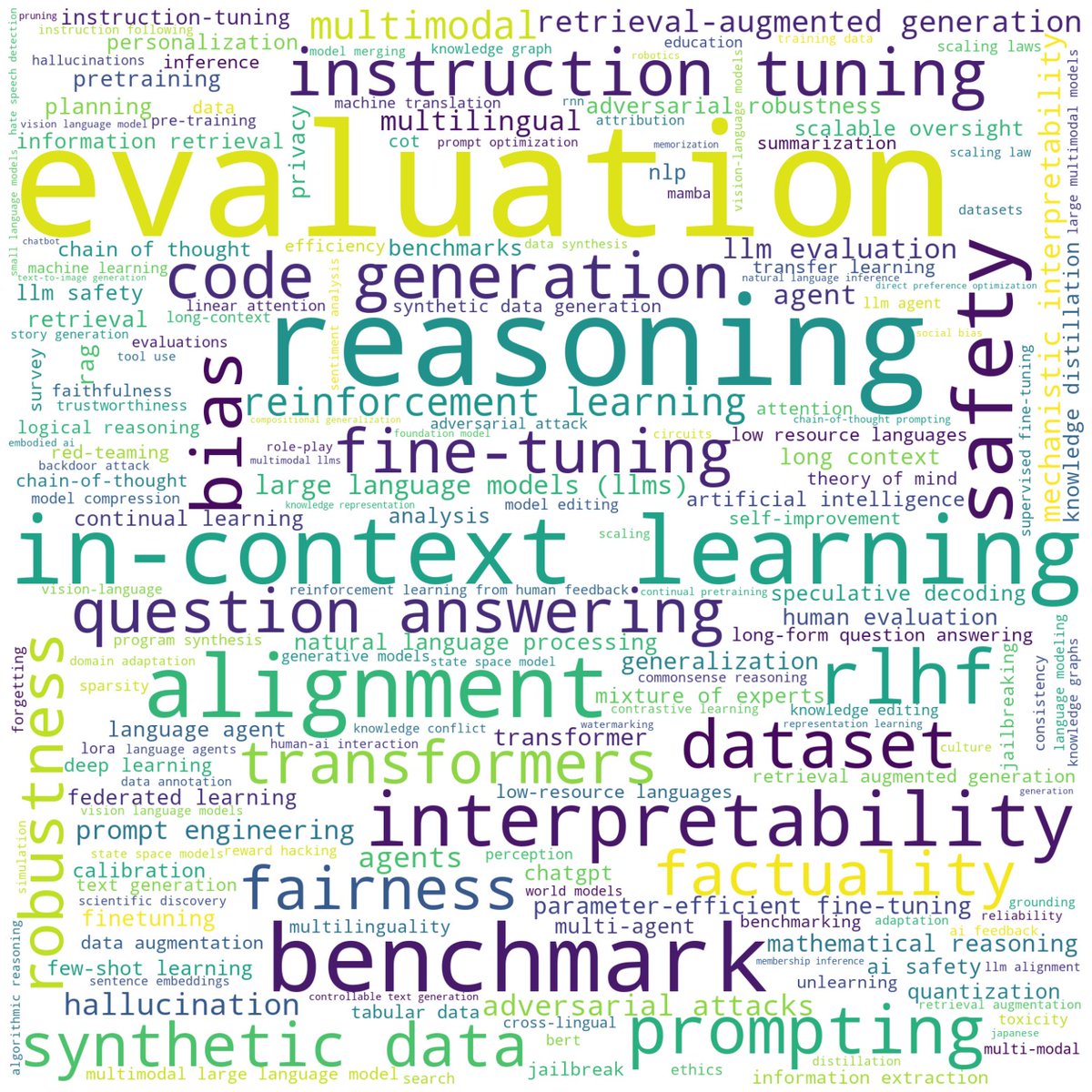

Folks, some Conference on Language Modeling stats, because looking at these really brightens the mood :)

We received a total of ⭐️1036⭐️ submissions (for the first ever COLM!!!!). What is even more exciting is the nice distribution of topics and keywords. Exciting times ahead! ❤️

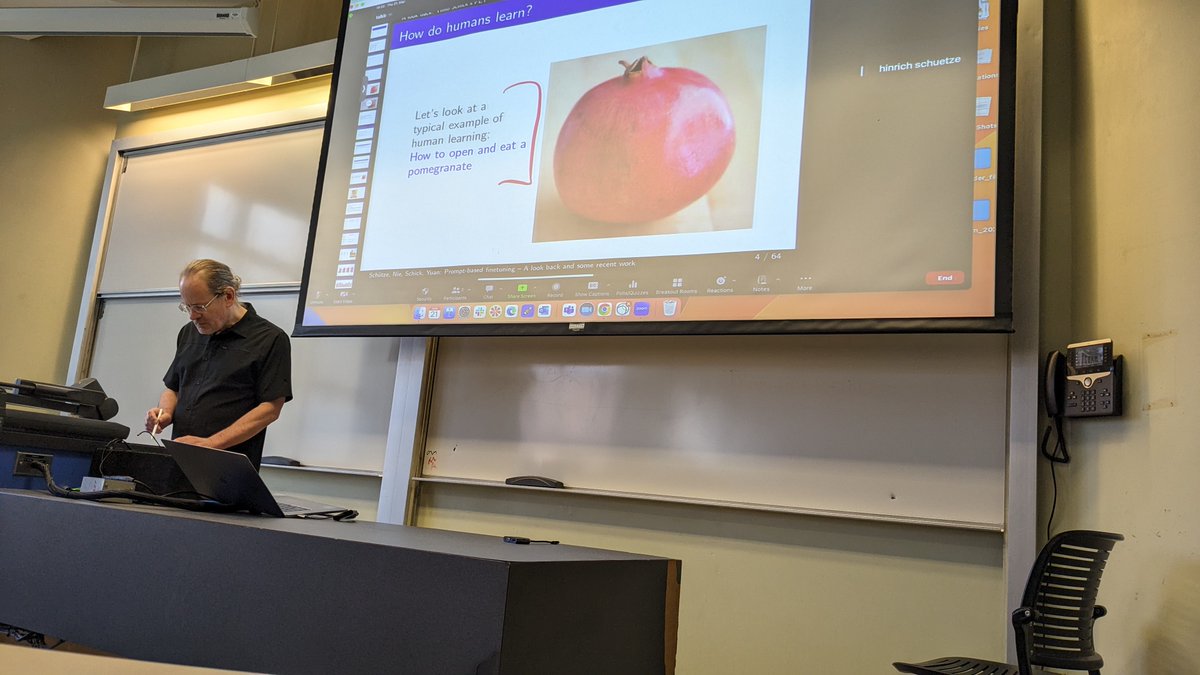

Many of us at MilaQuebec are thrilled to hear from Hinrich Schuetze about generating large-scale instruction data in an unsupervised fashion. A recording will be available. My course students also had a bonus course lecture on pattern-exploiting training (PET) and GNNavi.

We retrofit LLMs by learning to compress their memory dynamically

I find this idea very promising, as it creates a middle ground between vanilla Transformers and SSMs in terms of memory/performance trade-offs.

I'd like to give a shout-out to Piotr Nawrot and Adrian Lancucki for the…