Pritish Mishra

@pritishllm

ml engineer smallest.ai | working on LLMs, fine-tuning, multimodality and real-time voice agents.

ID: 1155845384612093953

29-07-2019 14:19:49

879 Tweets

278 Followers

957 Following

I haven't seen GPT say such things about Sam Altman, or Claude about Dario Amodei. Why is every version of Grok so attached to Elon?

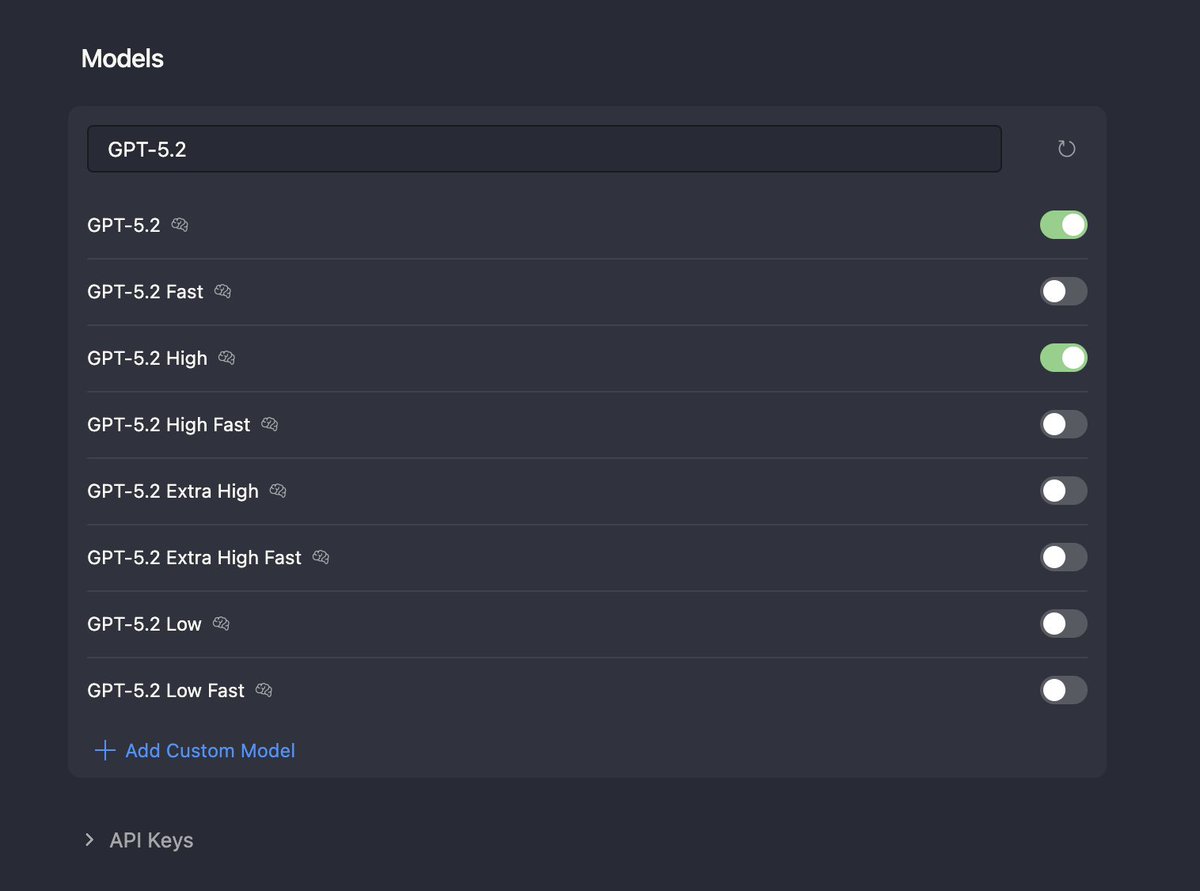

I got unlimited access to Cursor from smallest.ai. This might not be a good idea; I'm just getting started here.