Maxime Peyrard

@peyrardmax

Junior Professor @CNRS (previously @EPFL, @TUDarmstadt) -- AI Interpretability, causality, and interaction flows between LLMs, humans, and tools

ID: 4881437273

https://peyrardm.github.io 06-02-2016 13:08:32

55 Tweets

306 Followers

282 Following

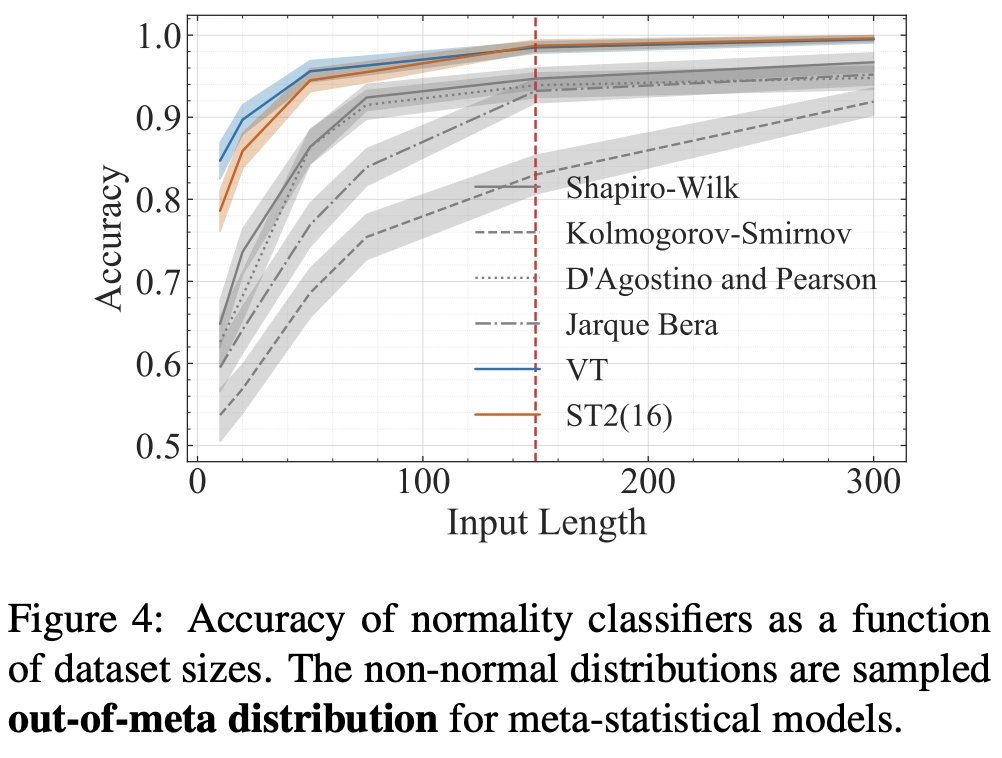

because i haven't done so yet, i've decided to burn any remainder of the bridge to the land of statistics. it wasn't statisticians nor statistics but it was really me. i am simply not good enough to do statistics myself. so, Maxime Peyrard and i decided to turn statistical

I'm excited to announce that I’ll be joining the Computer Science department at Johns Hopkins University as an Assistant Professor this Fall! I’ll be working on large language models, computational social science, and AI & society—and will be recruiting PhD students. Apply to work with me!

Causal Abstraction, the theory behind DAS, tests if a network realizes a given algorithm. We show (w/ Denis Sutter, T. Hofmann, Tiago Pimentel) that the theory collapses without the linear representation hypothesis—a problem we call the non-linear representation dilemma.