Serdar Ozsoy

@ozsoyserdar

PhD student @UniBonn

ID: 1100087592

18-01-2013 05:26:01

50 Tweets

46 Followers

161 Following

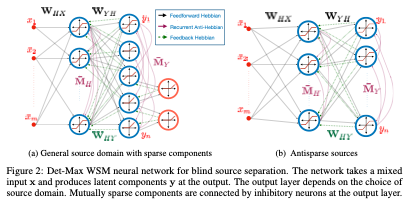

Our paper (arxiv.org/abs/2209.07999) just got accepted to NeurIPS Conference 2022! Congratulations to Alper Erdogan for coming up with the idea, and to Serdar Ozsoy, Shadi Hamdan, and Sercan Arık for a great collaboration.

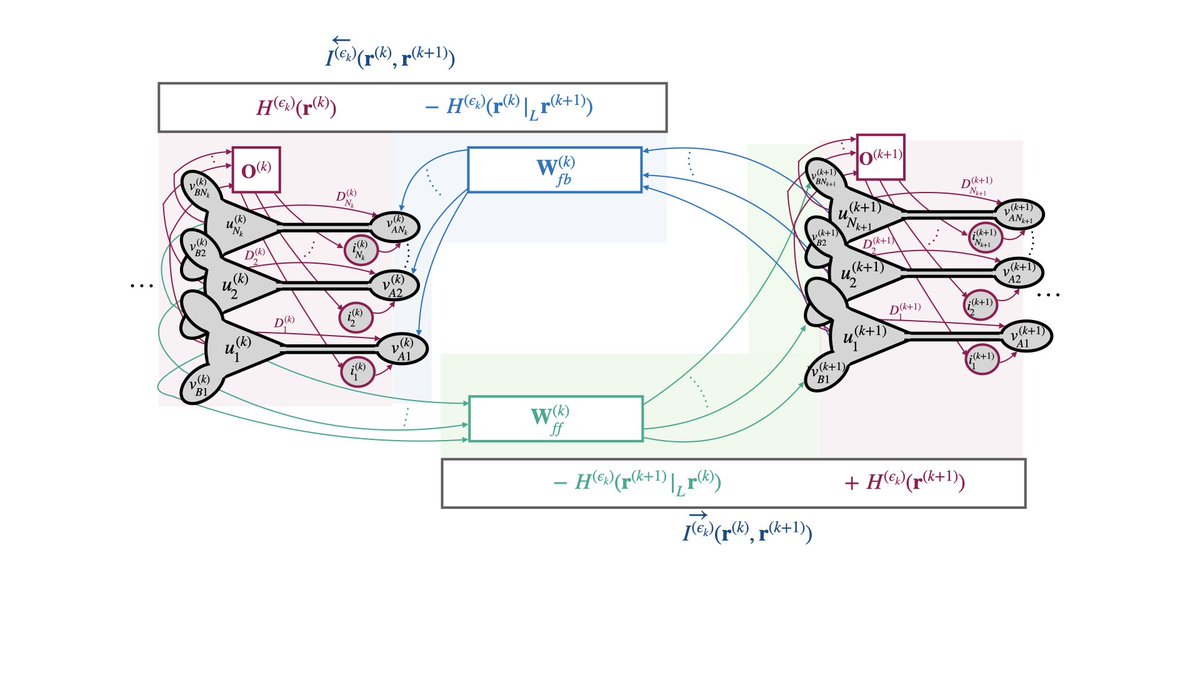

Our paper (arxiv.org/abs/2209.12894) just got accepted to NeurIPS Conference 2022! Many thanks to my collaborators Bariscan Bozkurt and Cengiz Pehlevan for this exciting joint work.

💫We're happy to share that our MS student Serdar Ozsoy and faculty members Alper Erdogan and Deniz Yuret presented posters of the two papers at #NeurIPS2022 in the USA, and our MS student Bariscan Bozkurt gave a successful virtual presentation in a #NeurIPS2022 Oral Session. Congrats!👏🏼

Join us at the poster session for our #Neurips2023 article "Correlative Information Maximization: A Biologically Plausible Approach to Supervised Deep Neural Networks without Weight Symmetry", our joint work with Bariscan Bozkurt & Cengiz Pehlevan. 🗓️ Wed, 5-7 p.m. 📍 Hall B1+B2 #423

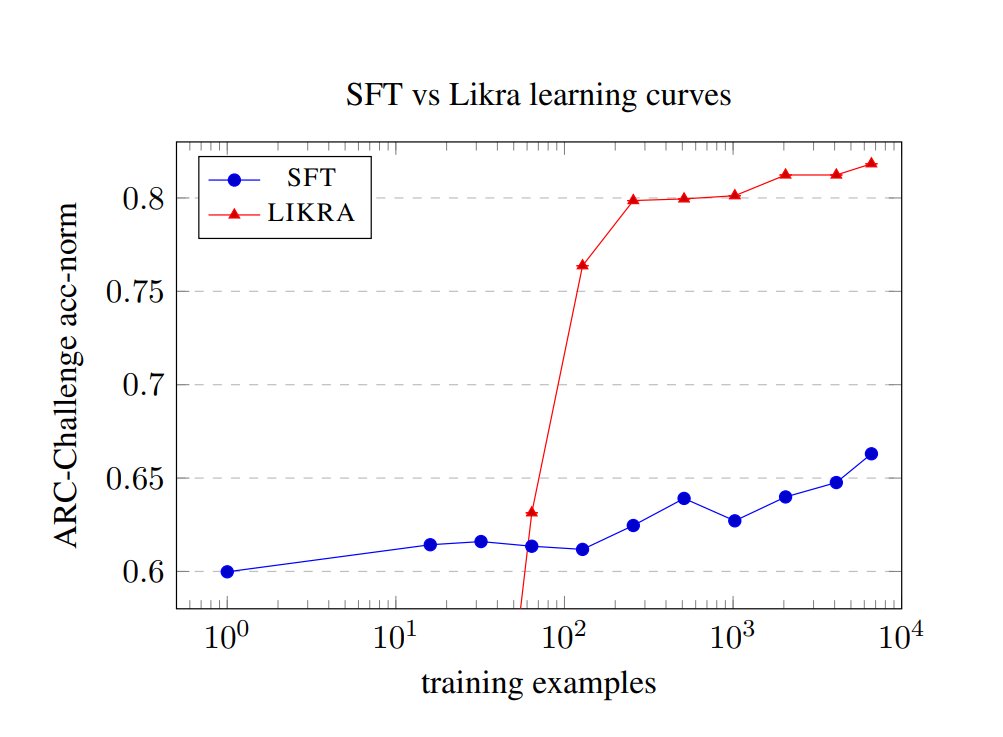

Have you ever seen a learning curve that looks like a step function? It turns out that a few hundred negative examples flip a switch inside an LLM and produce a discrete jump in accuracy. "How much do LLMs learn from negative examples?" (arxiv.org/abs/2503.14391) with Shadi Hamdan.