Richard Ren

@notrichardren

AI safety and benchmarking. Research scientist & engineer.

ID: 1609594870980550656

http://notrichardren.github.io

01-01-2023 16:58:27

129 Tweets

366 Followers

506 Following

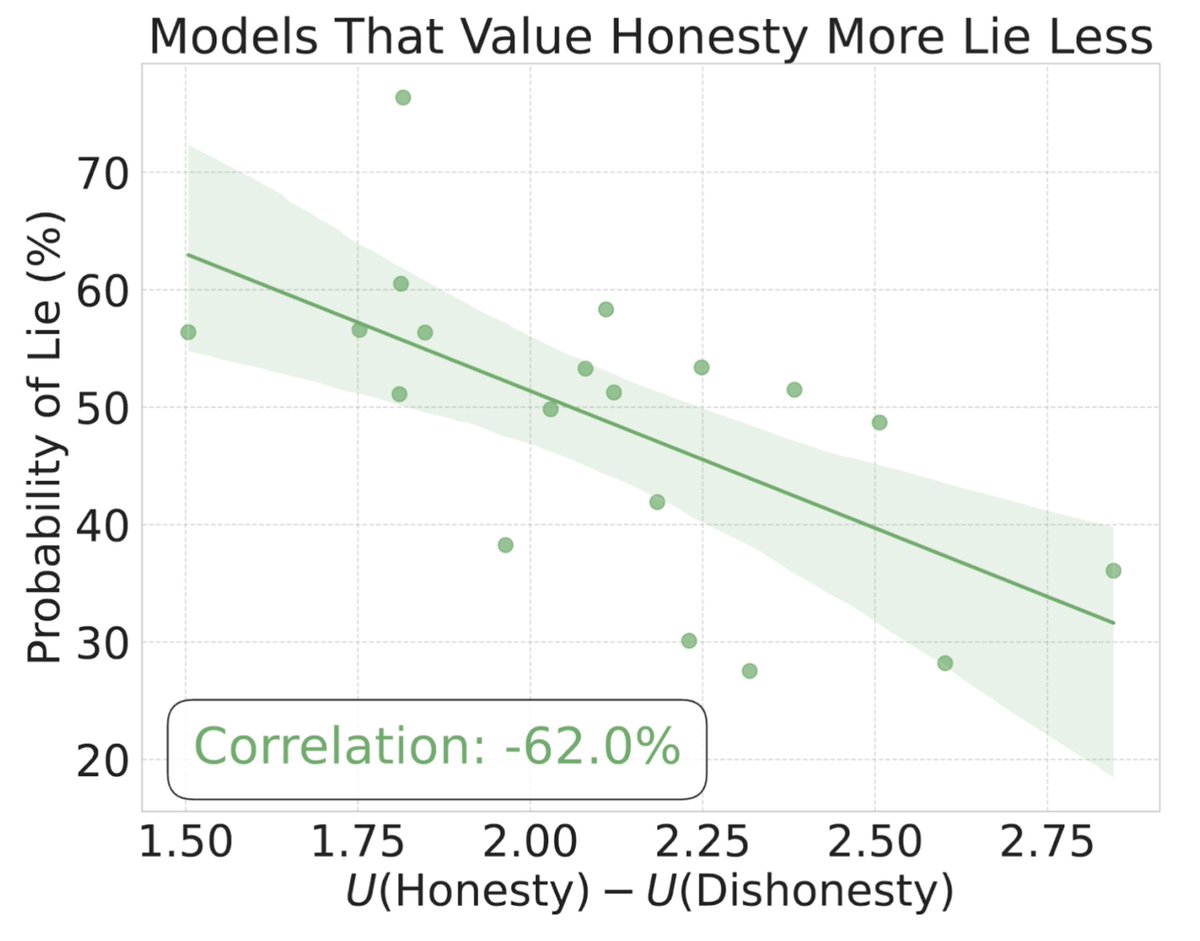

1/ Introducing MASK: an open source benchmark from @scale_ai & Center for AI Safety that tests AI honesty under pressure across 1,000+ scenarios:

🔗 mask-benchmark.ai

How well do models stick to their internal beliefs when coerced? Let's dive in. 🧵👇

If a model lies when pressured, it’s not ready for AGI. The new MASK leaderboard is live. Built on the private split of our open-source honesty benchmark (w/ Center for AI Safety), it tests whether models lie under pressure, even when they know better. 📊 Leaderboard:

I basically think this shows we should already be treating existing SOTA models (or their immediate successors) as ASL-3, with urgent emphasis on effective safeguards. Congrats to Nathaniel Li for this important work.