Mika Senghaas

@mikasenghaas

research intern @primeintellect, msc data science @epfl

ID: 837406685488611330

https://mikasenghaas.de 02-03-2017 20:58:06

5 Tweets

97 Followers

171 Following

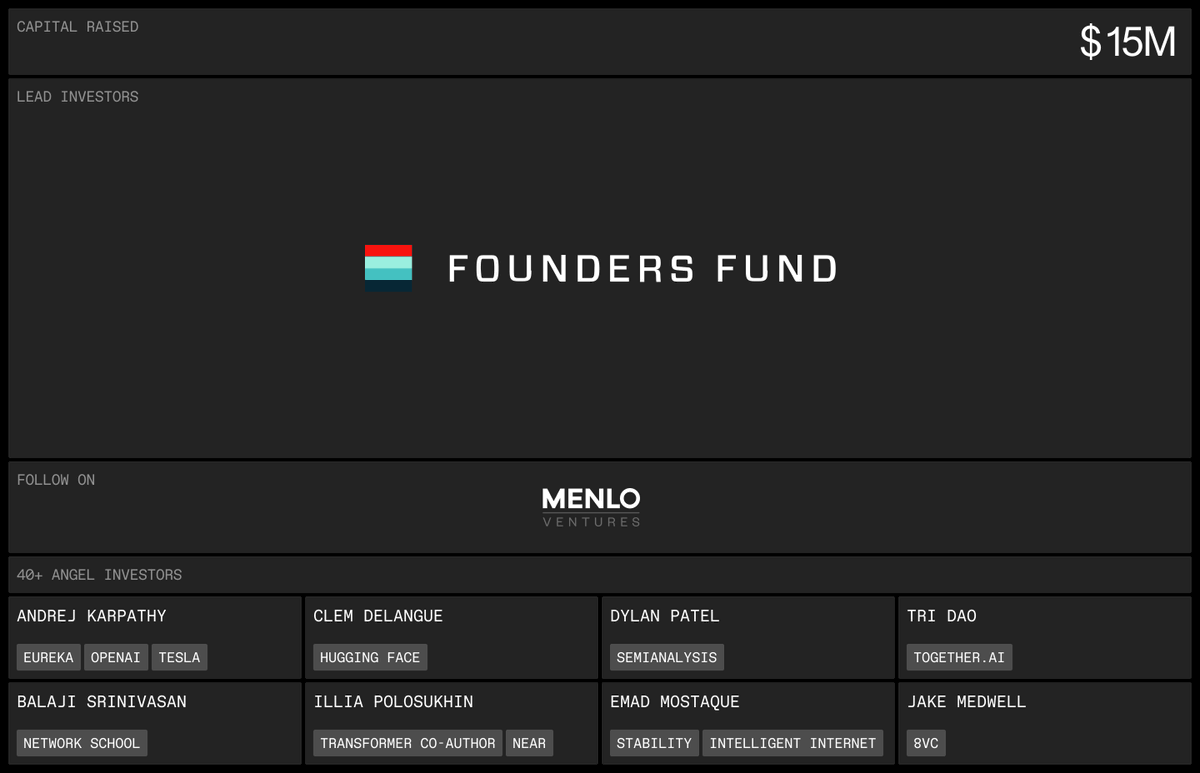

Announcing our $15m raise, led by Founders Fund, to build our peer-to-peer compute and intelligence protocol. With participation from Menlo Ventures and angels like Andrej Karpathy, clem 🤗, Tri Dao, Dylan Patel, Balaji, Emad, and many others.

i can’t wait to launch synthetic-2 Prime Intellect