Michi Yasunaga

@michiyasunaga

Researcher @OpenAI

ID: 1182194132309012481

http://michiyasunaga.github.io 10-10-2019 07:23:34

308 Tweets

3.3K Followers

882 Following

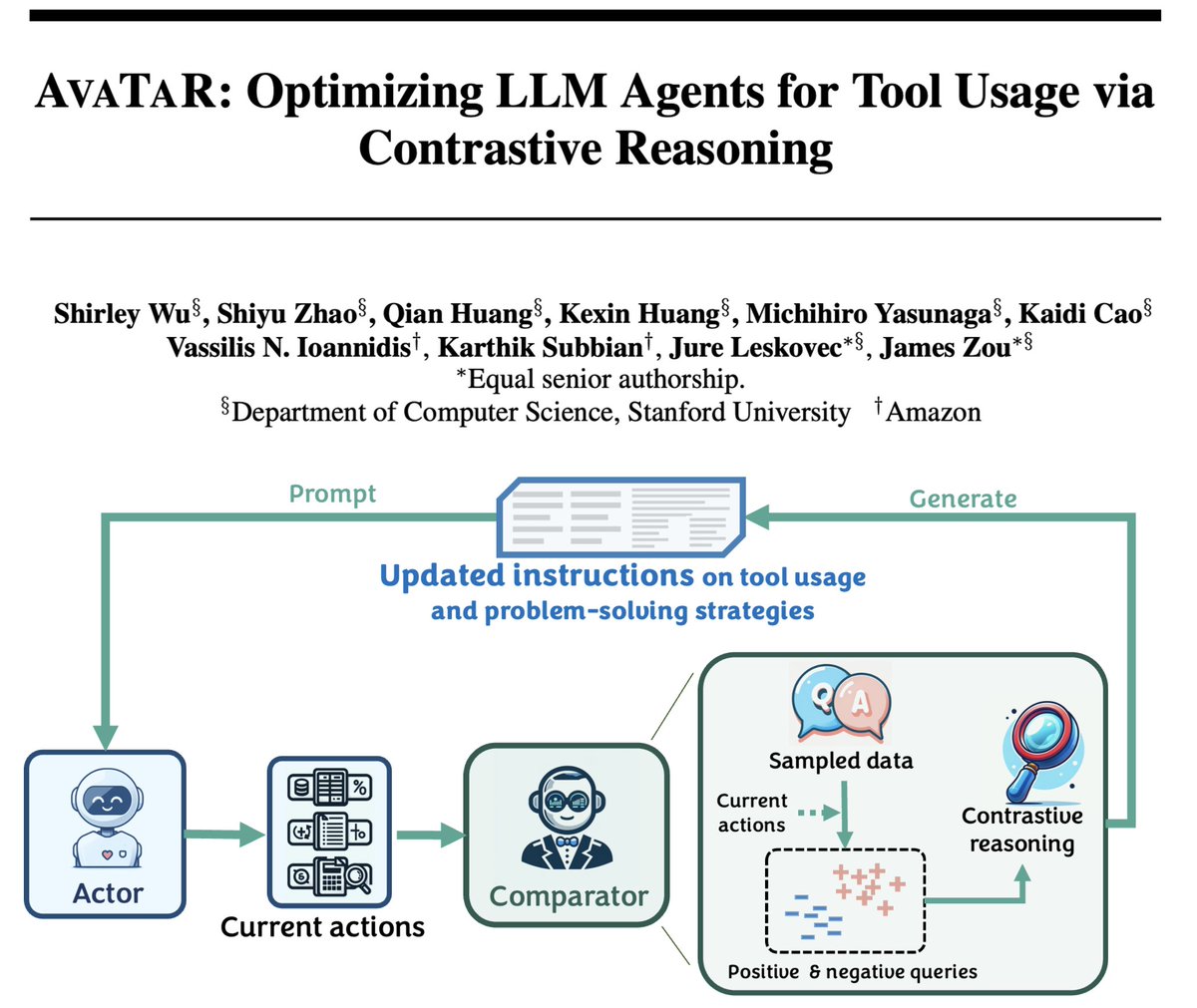

🔥 AvaTaR: Optimizing LLM Agents for Tool Usage via Contrastive Reasoning 🔥 is on NeurIPS Conference 2024! LLM agents are great, but they don't always make the best use of tools! AvaTaR is an automated framework that optimizes an LLM agent’s tool usage for any task. The "magic" lies

🚨 I’m on the job market this year! 🚨 I’m completing my Allen School Ph.D. (2025), where I identify and tackle key LLM limitations like hallucinations by developing new models—Retrieval-Augmented LMs—to build more reliable real-world AI systems. Learn more in the thread! 🧵