Scott Graham

@macgraeme42

Artificial Intelligence. Game Design. All-in on Tesla. Joining the conversation.

ID: 1423081579790970880

05-08-2021 00:41:35

28.28K Tweets

1.1K Followers

200 Following

OK, but that's an 8lbs (**pounds**) bar of uranium, not 8oz. So about 72 billion calories... Sabine Hossenfelder

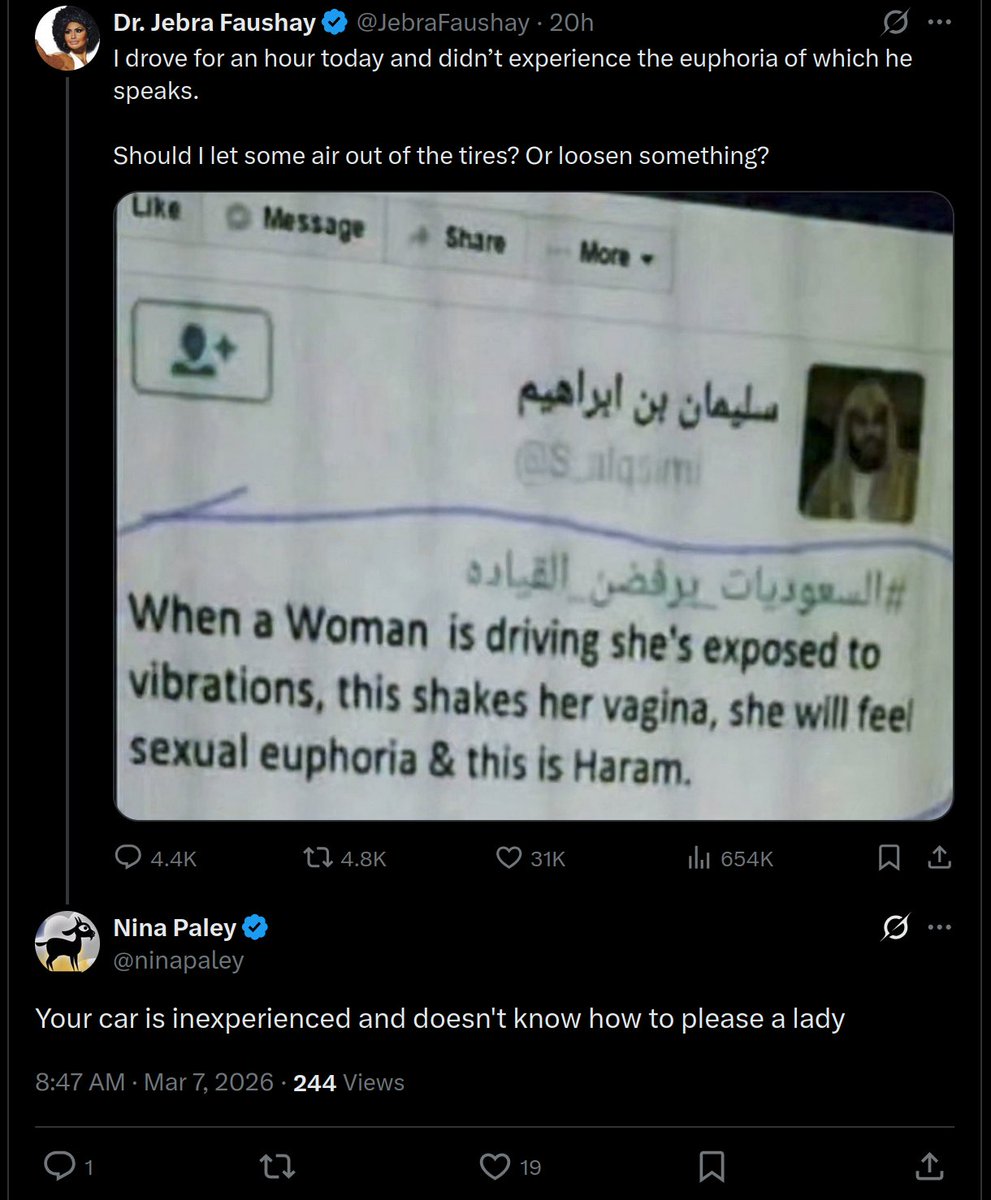

Nina Paley Dr. Jebra Faushay Clearly, Western women are simply too repressed to experience orgasm.