Leandro von Werra

@lvwerra

Head of research @huggingface

ID: 1105171148382326789

http://www.github.com/lvwerra

11-03-2019 18:18:29

1.1K Tweets

8.8K Followers

360 Following

✨ Very happy to announce I'm joining Hugging Face next week! 🤗 I'm based in the amazing Bern office 🇨🇭🏔️ alongside Leandro von Werra, Lewis Tunstall, and Andi Marafioti, working with the Language Model Dataset team to champion open datasets and models while supporting the open-science community.

Leandro von Werra so fast! just used it to figure out at which speed to go home and get less wet

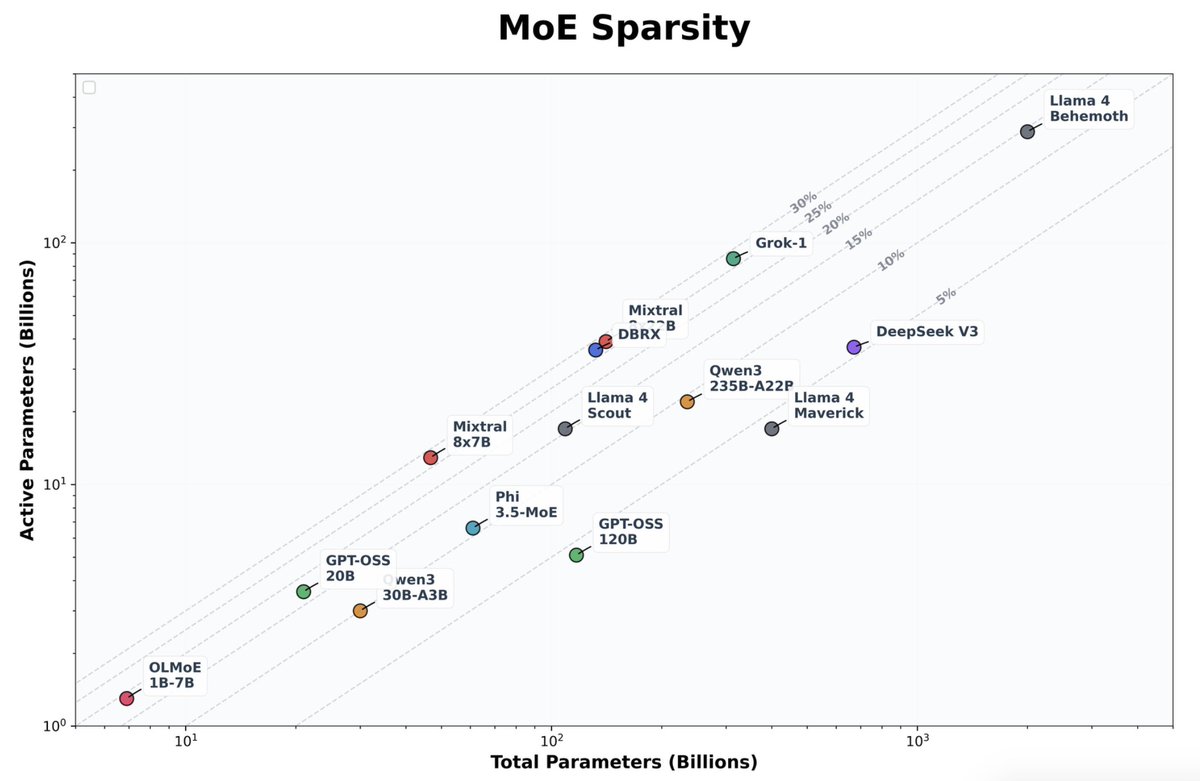

🇺🇦 Dzmitry Bahdanau Indeed, I mixed it up and thought it was the same as DeepSeek as they re-used the arch. Forgot that they change active/passive (32B/1T):

🎉 New chapter: just started at Hugging Face! Excited to help science teams bring their ML research to life through interactive demos and visualizations. Ready to dive in!

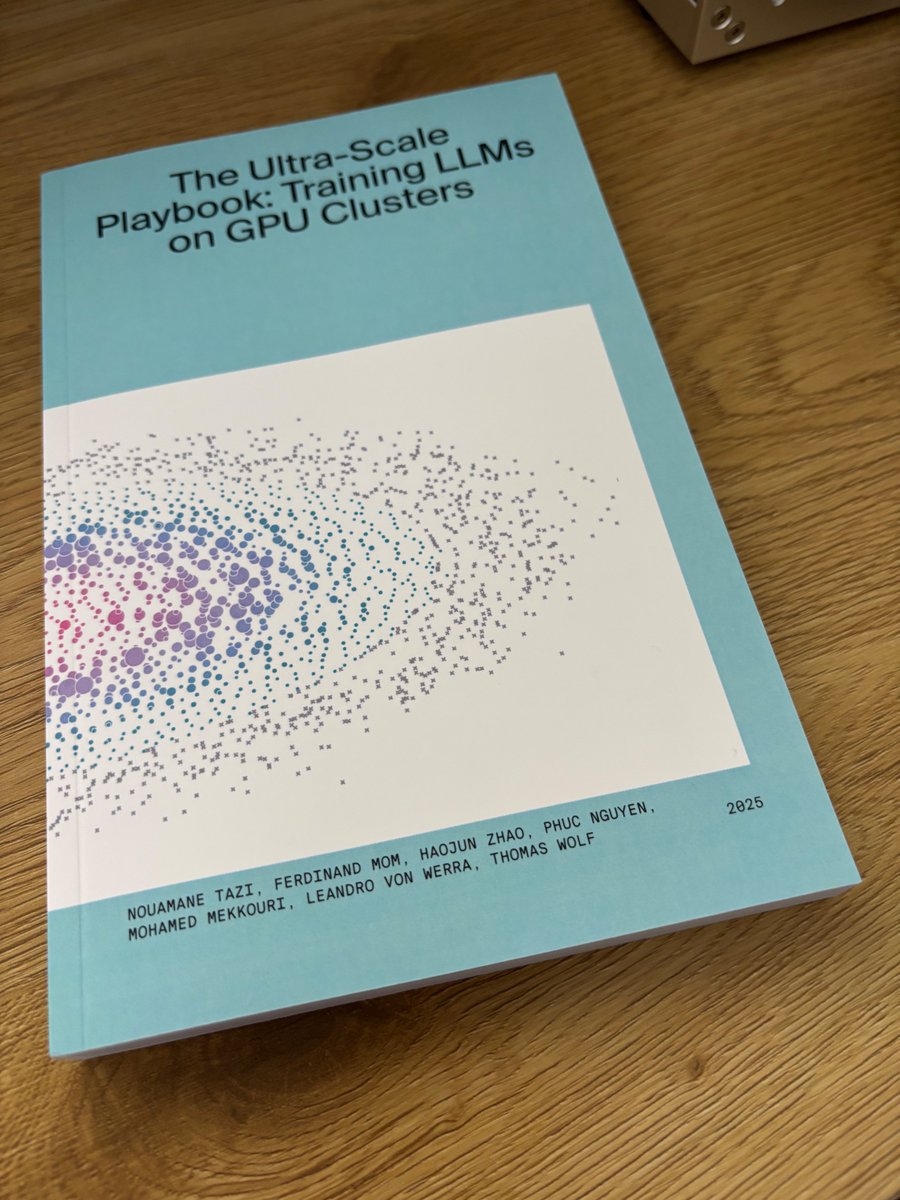

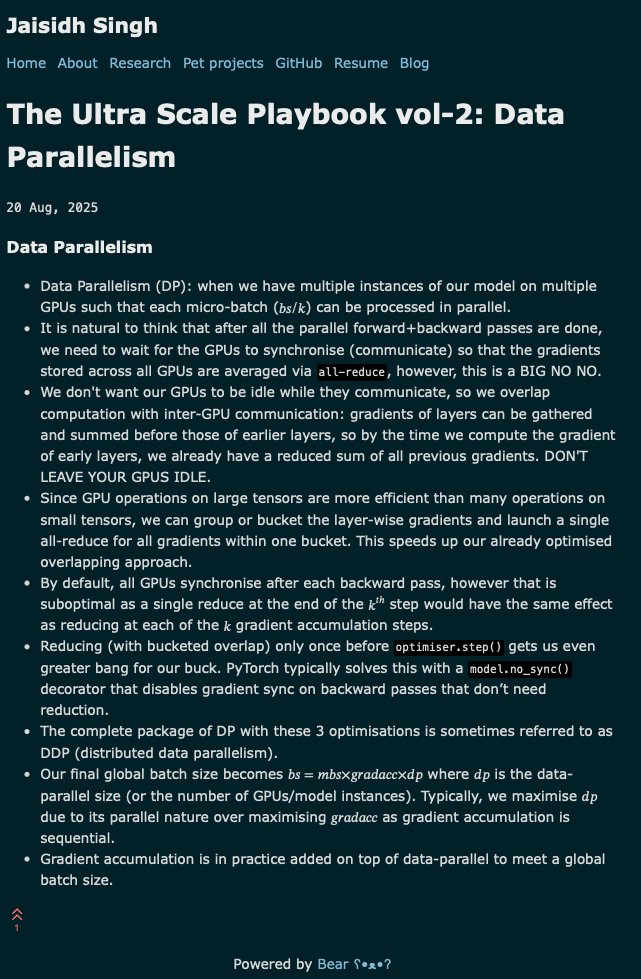

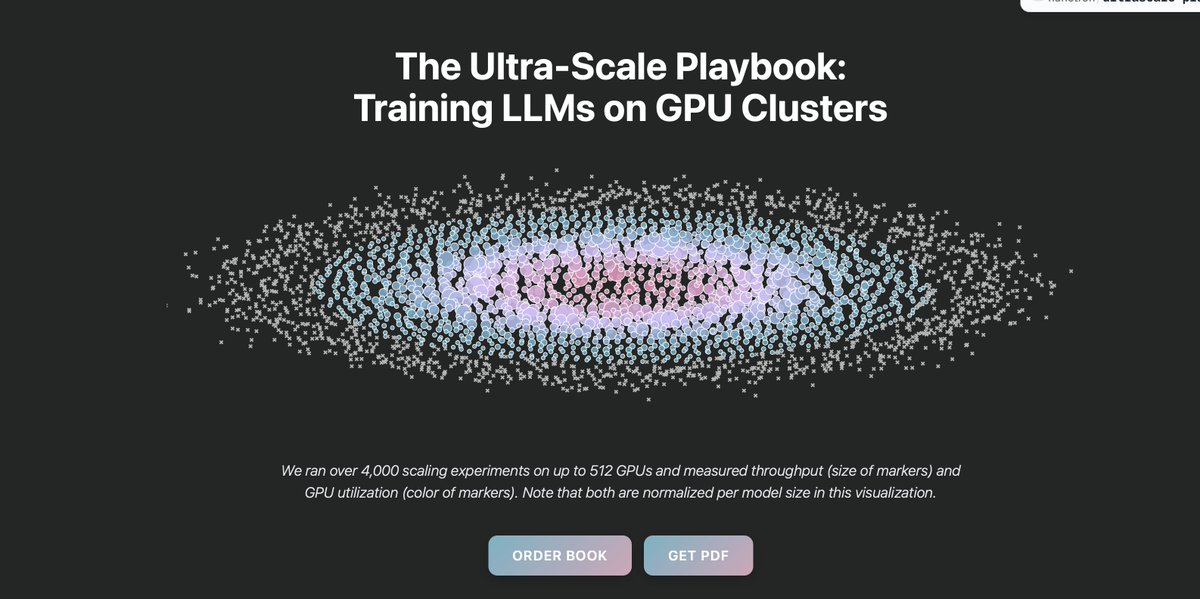

🚀 First week at Hugging Face: shipped improvements to The Ultra Scale Playbook! ✅ Dark mode ✅ Some mobile responsiveness ✅ Performance fixes Your complete guide to scaling #LLMs in 2025 👇 huggingface.co/spaces/nanotro…

If you need high quality data contact your friendly data-dealers of choice: Guilherme Penedo and Hynek Kydlíček

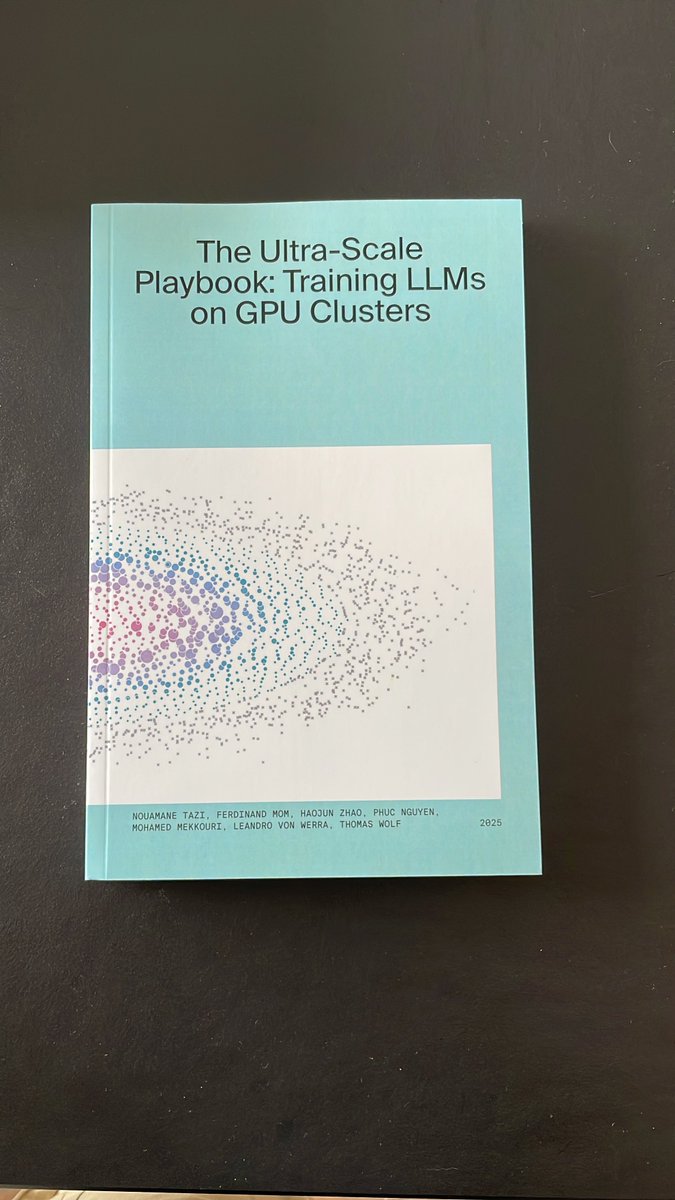

Copy arrived today. I appreciate the practical coverage of data/tensor/pipeline/expert parallelism and profiling. Eager to read. Hugging Face, Leandro von Werra, Thomas Wolf