Luke Metz

@luke_metz

Thinking Machines

Previously: OpenAI, Google Brain

ID: 887992016

http://lukemetz.com

18-10-2012 02:57:44

326 Tweets

16.16K Followers

1.1K Following

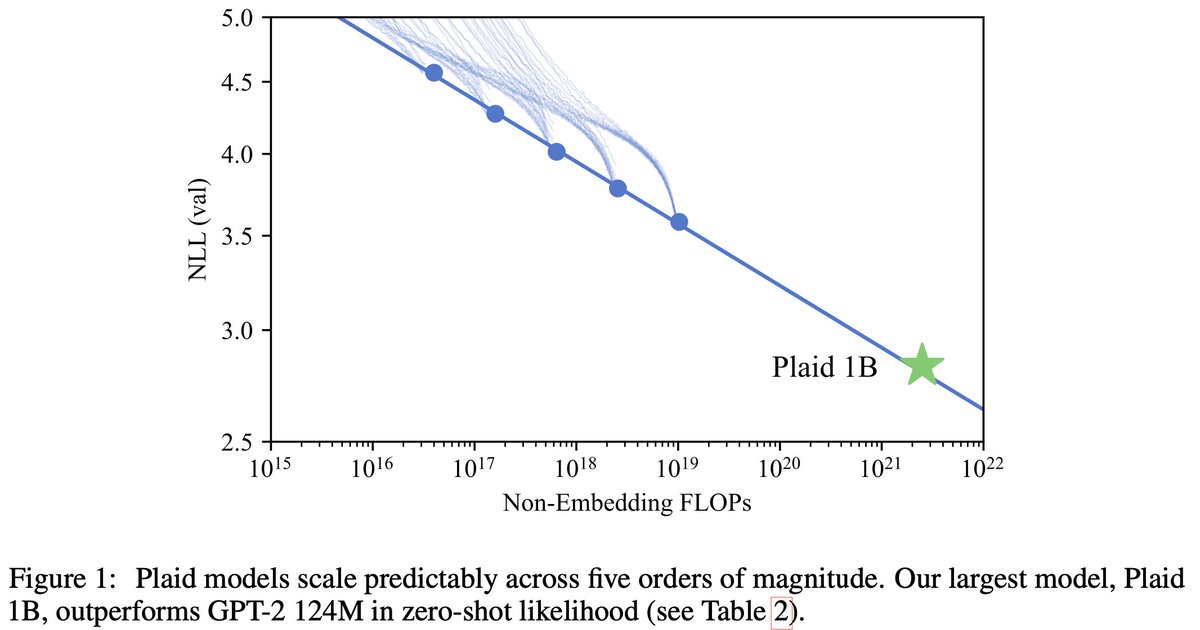

New paper with Tatsunori Hashimoto! Likelihood-Based Diffusion Language Models: arxiv.org/abs/2305.18619 Likelihood-based training is a key ingredient of current LLMs. Despite this, diffusion LMs haven't shown any nontrivial likelihoods on standard LM benchmarks. We fix this!🧵

Want to learn about learned optimization? I gave a tutorial at CoLLAs 2025 which is now public! youtu.be/FMjYwthtoN4?si…

I'm leaving OpenAI after over 2 years of wild ride. Alongside Barret Zoph, William Fedus, John Schulman, and many others I got to build a “low key research preview” product that became ChatGPT. While we were all excited to work on it, none of us expected it to be where it is