Weixin Liang

@liang_weixin

CS Ph.D. @Stanford | @StanfordAILab | TA for CS224C: NLP for Computational Social Science | Exploring AI & NLP | ai.stanford.edu/~wxliang/

ID: 1193940640792338432

https://ai.stanford.edu/~wxliang/ 11-11-2019 17:16:56

151 Tweets

1.1K Followers

700 Following

Thanks, Weixin Liang! We all enjoyed reading the paper, and we appreciate how it helps the community gain a deeper understanding of the modality gap 🥰

Thank you, Victoria X Lin, for the write-up.

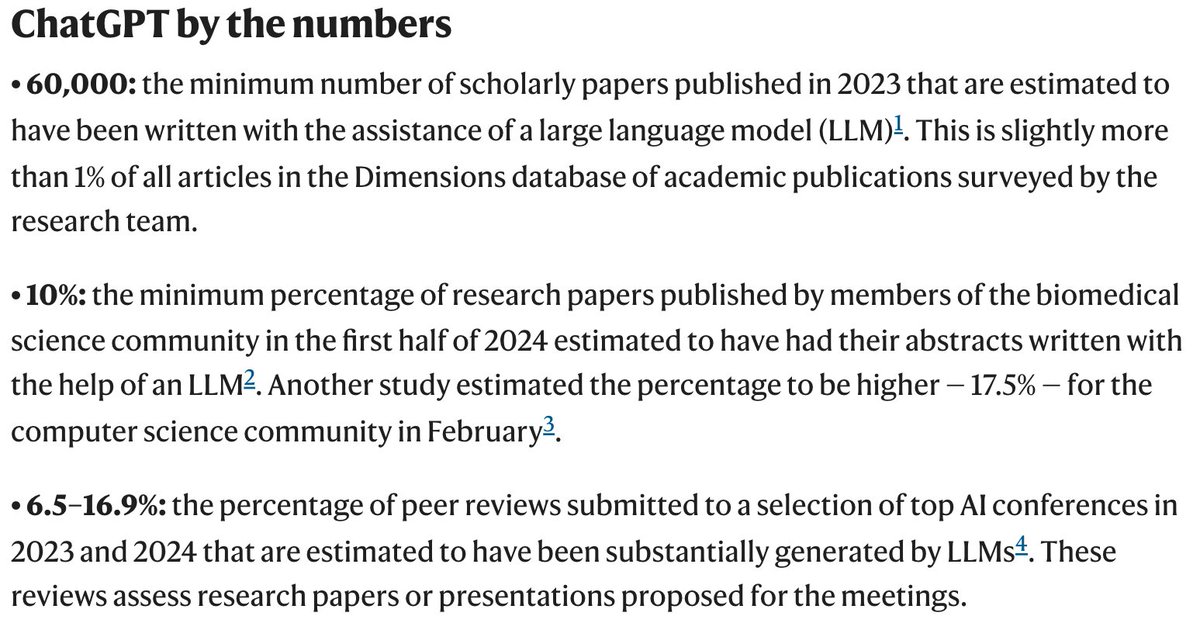

Even the smartest LLMs can fail at basic multiturn communication. Ask for grocery help → they answer without asking where you live 🤦♀️ Ask them to write an article → they assume your preferences 🤷🏻♀️ ⭐️ CollabLLM (top 1%; oral at ICML Conference) transforms LLMs from passive responders into active collaborators.