Li Ding

@li_ding_

CS PhD student @UMassAmherst. Previously AI/ML @GoogleAI, @Meta, and @MIT. More: liding.info

ID: 1715105170927837185

http://liding.info 19-10-2023 20:38:40

25 Tweets

88 Followers

144 Following

Great work led by Ryan Bahlous-Boldi! QD maintains diversity with pre-defined diversity metrics (or learned, e.g., QDHF!), but is it necessary? This paper proposes an alternative that uses MMO to solve deceptive RL tasks, and outperforms ME on QD-score w/o even optimizing towards it!

Thrilled to announce the first annual Reinforcement Learning Conference RL_Conference, which will be held at UMass Amherst August 9-12! RLC is the first strongly peer-reviewed RL venue with proceedings, and our call for papers is now available: rl-conference.cc. 🧵

Had a great time yesterday presenting our QDHF work at #NeurIPS2023 ALOE Workshop! Fantastic workshop with great vibes. Glad to meet so many people in open-endedness, QD, RL, especially my amazing collaborators Jenny Zhang and Jeff Clune! (Thanks to Ryan Bahlous-Boldi for this photo!)

POPL enhances the safety and fairness of RLHF by aligning agents and LLMs with diverse human values. It effectively addresses hidden contexts in preferences, ensuring risk-sensitive alignment without additional labeling. Led by Ryan Bahlous-Boldi, w/ Lee Spector and Scott Niekum.

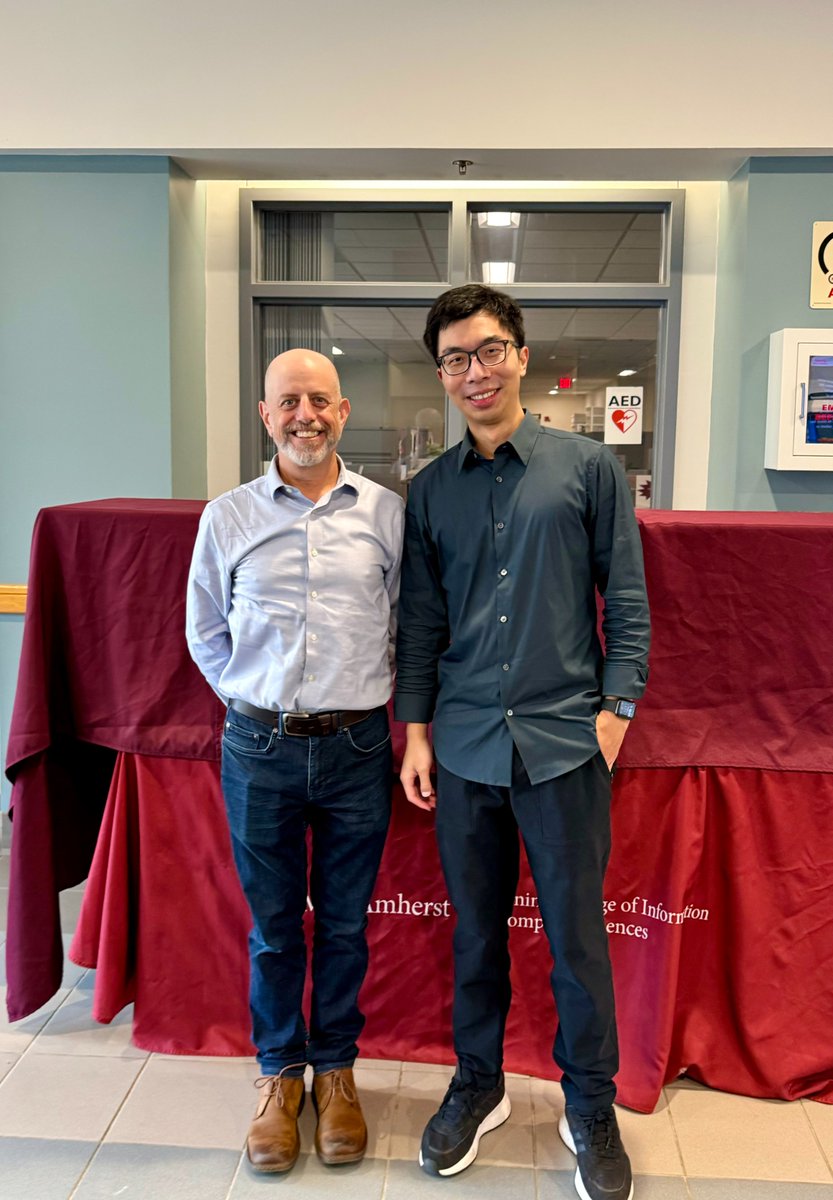

Thrilled to announce I’ve successfully defended my PhD! 🎓 Deeply grateful to my advisor Lee Spector, my committee Scott Niekum, Subhransu Maji, Jeff Clune, and all collaborators, friends, and family. Milestone achieved, excited for the next chapter!