Lapis Labs

@lapisrocks

be novel.

ID: 1753646329136283648

https://lapis.rocks/ 03-02-2024 05:07:26

30 Tweets

54 Followers

2 Following

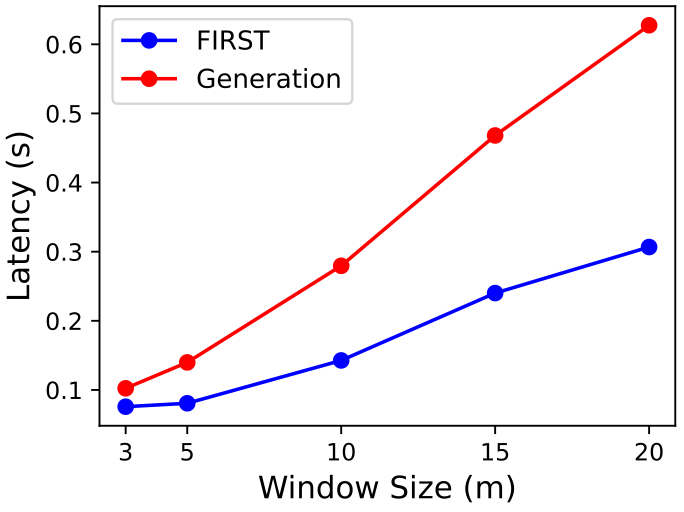

Moreover, inference efficiency improves considerably, since only a single token needs to be generated instead of the entire ranking order! Work done in collaboration with Heng Ji, IBM Research (Avi Sil and Arafat Sultan), and Lapis Labs.

We are excited to introduce FIRST! Our novel LLM reranking approach boosts efficiency by 50% while maintaining performance. Paper link: arxiv.org/pdf/2406.15657 Big thanks to Revanth Gangi Reddy and Heng Ji, along with our collaborators at IBM Research (Avi Sil and Arafat