Jonathan Lorraine

@jonlorraine9

Research scientist @NVIDIA | PhD in machine learning @UofT. Previously @Google / @MetaAI. Opinions are my own. 🤖 💻 ☕️

ID: 926859515986923520

https://www.jonlorraine.com/ 04-11-2017 17:11:43

227 Tweets

6.6K Followers

6.6K Following

I'm super excited for the future of Real2Sim2Real workflows on top of our new VoMP pipeline. There's still work ahead to integrate it into Isaac Lab through solvers in Newton and get simulation running fast enough for RL, but this is an important start. Congrats, Rishit Dagli!

Video motion and view control just became easy! Check out our new plug-and-play approach led by my brilliant students and collaborators Assaf Singer, Noam Rotstein, Amir Mann, Ron Kimmel, Technion Israel. 🌐 Project page: time-to-move.github.io

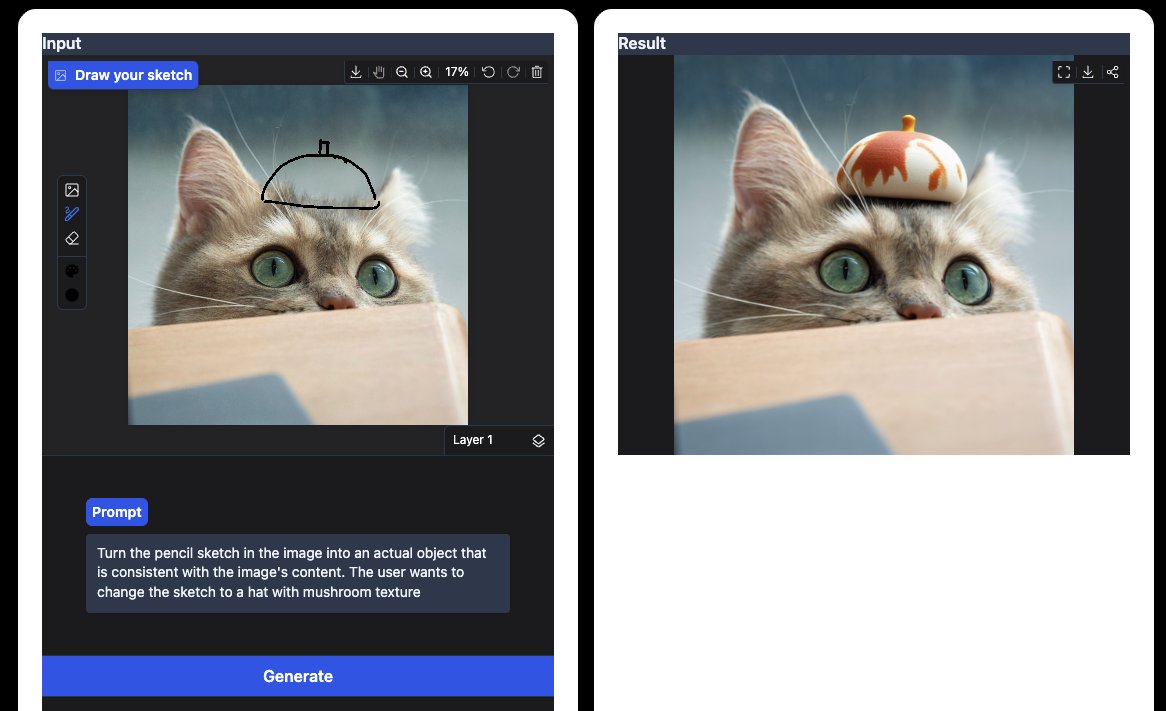

Crafting 3D assets just got a massive upgrade! Why guess with text when you can guide with geometry? 🎨 Extremely proud of this work led by the brilliant Elisabetta Fedele and Francis Engelmann: spacecontrol3d.github.io

In engineering and art, geometry is often represented not as meshes or points, but as domain-specific structured *grammars*. In this work led by Milin Kodnongbua and Jack Zhang, we investigated how to optimize these grammars ML-style with SGD. Four simple rules make a huge difference!