Pablo Marcos

@jazzmaniatico

PhD student | DL & Cognitive neuroscience

ID: 2240577112

https://github.com/pablomm 23-12-2013 22:32:40

60,60K Tweets

22 Followers

132 Following

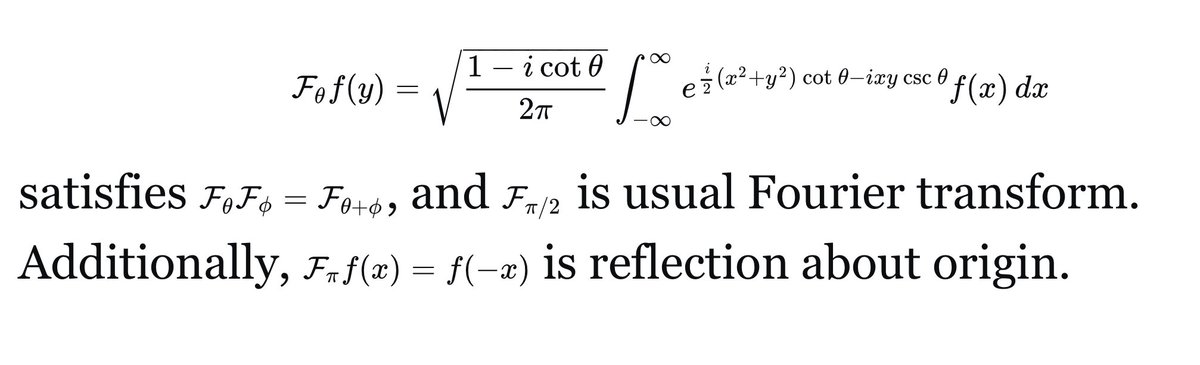

Lots of discussion around JEPA and why latent-space prediction works better than input-space prediction (e.g., LLMs) for certain modalities. But no one has formalized WHY. The answer lies in whether the statistically dominant features are semantically meaningful. NeurIPS spotlight 🧵👇