Yuhao Dong

@dyhthu

ID: 1709110084834598912

03-10-2023 07:36:26

61 Tweets

81 Followers

172 Following

🚤Real-Time Streaming VLA for Dynamic Manipulation🚤 #DynamicVLA is a 0.4B vision-language-action model that manipulates *moving* objects in real time, with continuous inference and latent-aware action streaming - Project: infinitescript.com/project/dynami… - Code: github.com/hzxie/DynamicV…

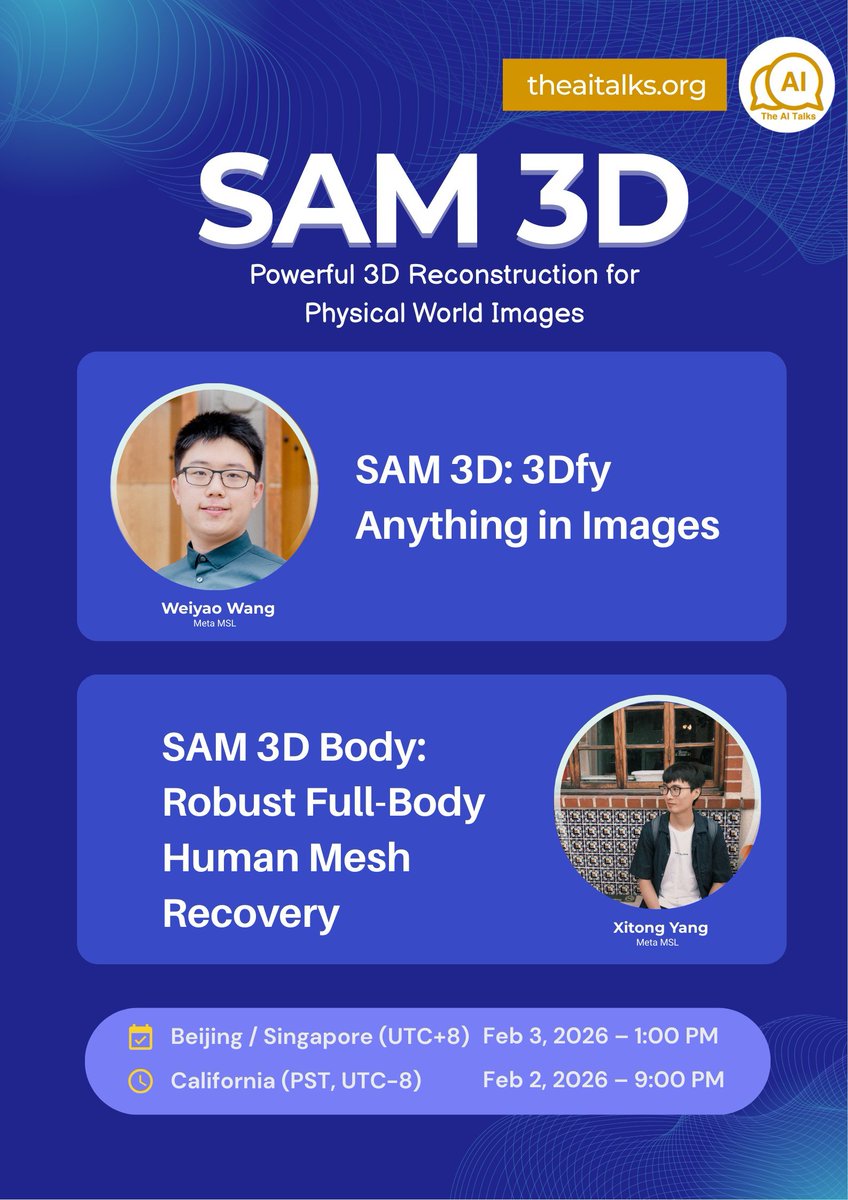

𝗧𝗵𝗲 𝗔𝗜 𝗧𝗮𝗹𝗸𝘀 will be hosting SAM 3D (weiyaow) and SAM 3D Body (Xitong Yang) from @MetaAI. 🕐 Feb 3 (Tue) - 13:00 SGT | Feb 2 (Mon) - 21:00 PST 📩 PM me for the Zoom link 🔔 Get notified of future talks The AI Talks: theaitalks.org/subscribe/