Huiqiang Jiang

@iofu728

RSDE @MSFTResearch Shanghai

ID: 1156745200770768896

https://hqjiang.com/

01-08-2019 01:55:21

107 Tweets

248 Followers

547 Following

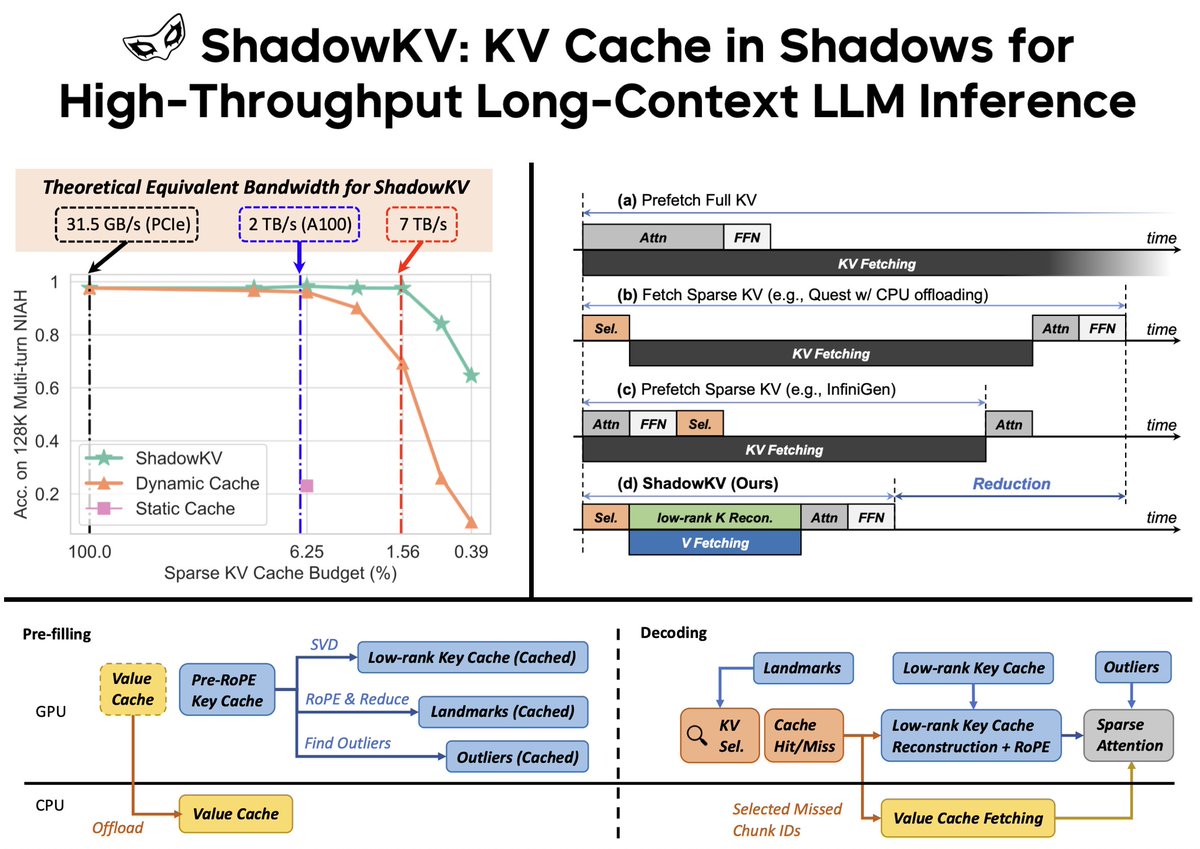

SCBench has been accepted by #ICLR2025! Now you can evaluate your long-context methods across the full KV cache lifecycle. Congratulations to Yucheng Li and all my co-authors! Find more details at aka.ms/SCBench

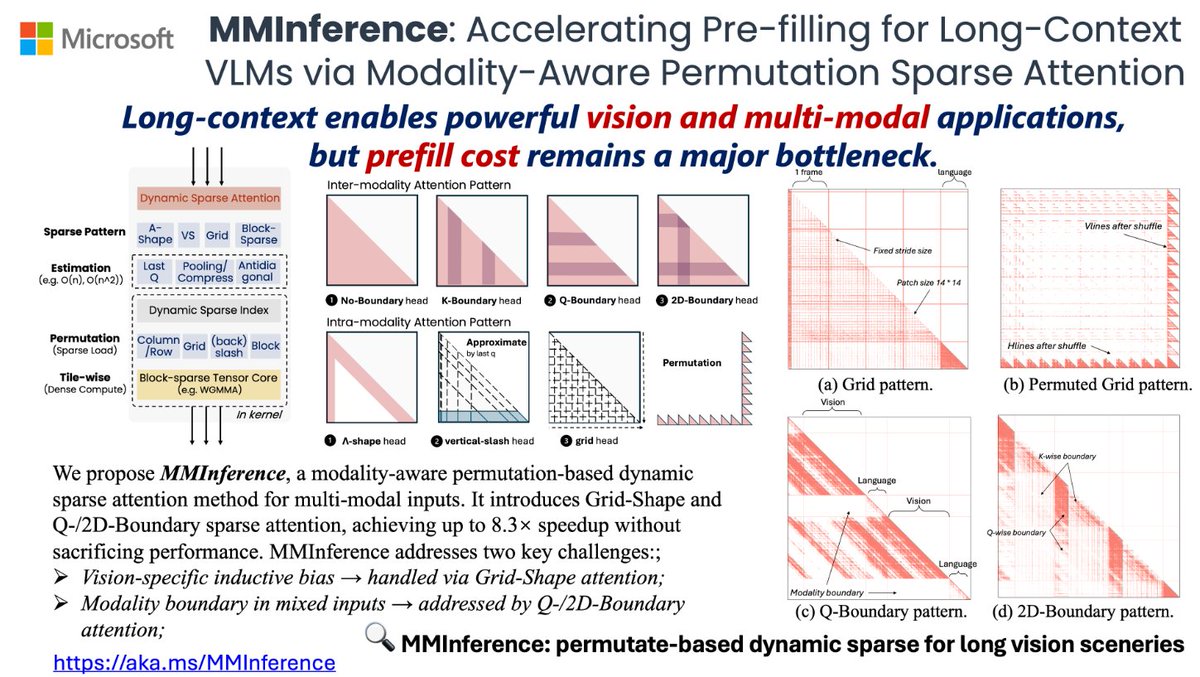

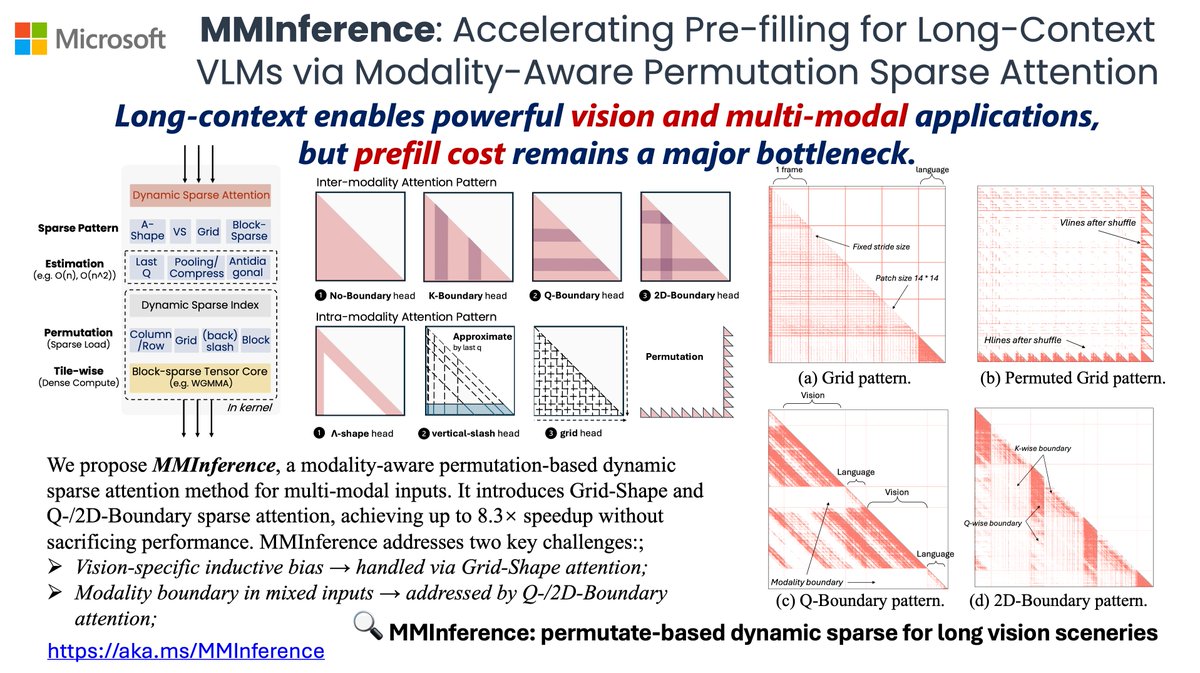

Thanks to Aran Komatsuzaki for the promotion! MMInference, a sparse attention method built through bottom-up system-algorithm co-design, lets long-context VLMs process 1M-token videos 8.3x faster. We'll present it at the ICLR'25 Microsoft Booth (Apr. 25, 13:30). aka.ms/MMInference

MMInference has been accepted by #ICML2025! It uses permutation to address inductive-bias and modality-boundary issues in multi-modal inputs, and it unifies dynamic sparse attention into a single pipeline of sparse loading plus dense tensor-core computation. Congratulations to Yucheng Li! Find more at aka.ms/MMInference