Martin Gubri

@framart1

Research Lead @parameterlab working on Trustworthy AI | he/him

Active accounts: 🦋 mgubri | 🐘 @[email protected]

ID: 983565397

https://gubri.eu

01-12-2012 23:57:22

1.1K Tweets

352 Followers

891 Following

"Prompt Injection and Jailbreaking are (still) not the same thing" by Simon Willison: simonwillison.net/2024/Mar/5/pro…

Attending #ICML2024 and looking for a research internship in trustworthy AI for LLMs? Reach out to Seong Joon Oh and Martin Gubri at the conference! x.com/parameterlab/s…

I will also have the pleasure of presenting the Apricot 🍑 paper on calibrated confidence estimates with Dennis Ulmer 🦋 at today's #acl2024 poster session at 16:00. See you there!

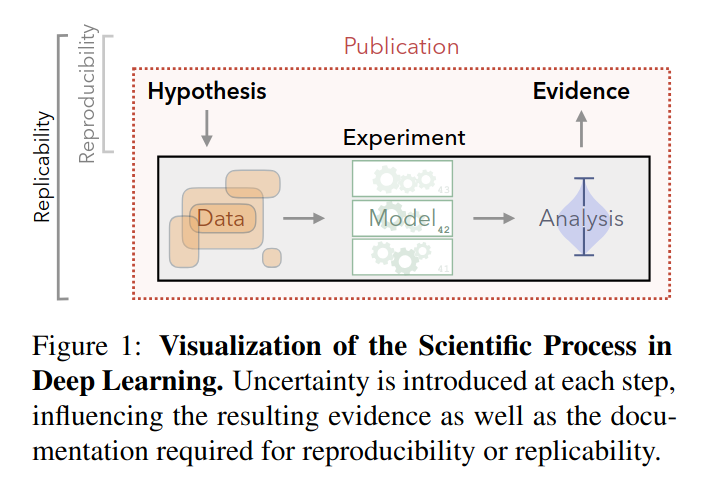

I just learned the difference between replicability and reproducibility, thanks to this remarkable paper by Dennis Ulmer 🦋 and his co-authors: arxiv.org/abs/2204.06251. After speaking with several researchers this week, I realized that this distinction is probably not well known.

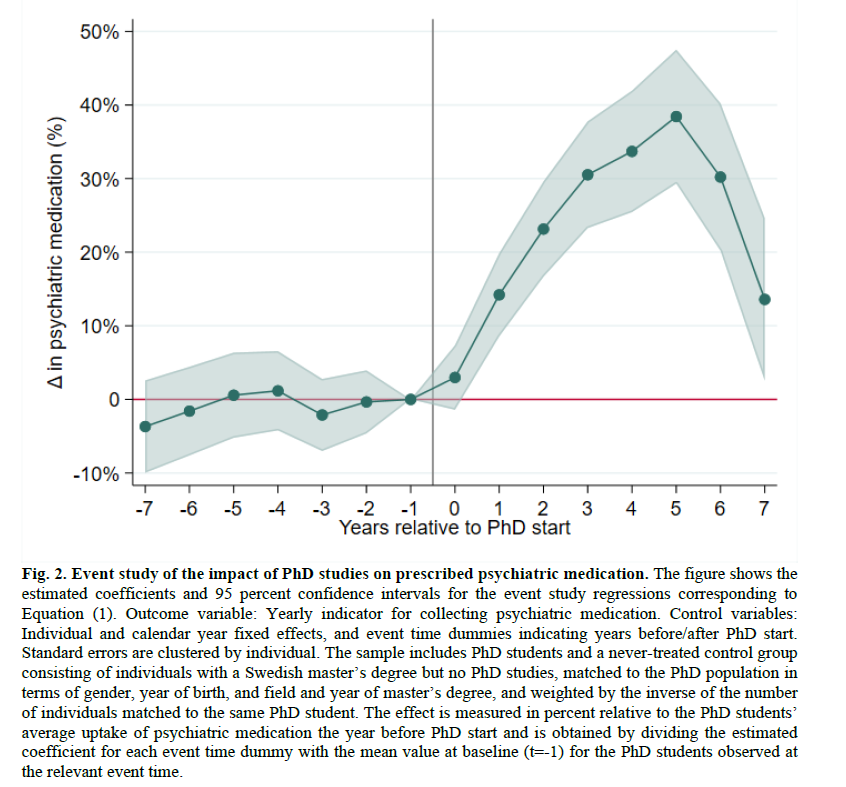

Pretty strong evidence of negative mental health effects of doing a PhD. Recent working paper by Eva Ranehill, Anna Sandberg, Sanna Bergvall, and Clara Fernström. Paper link: swopec.hhs.se/lunewp/abs/lun…

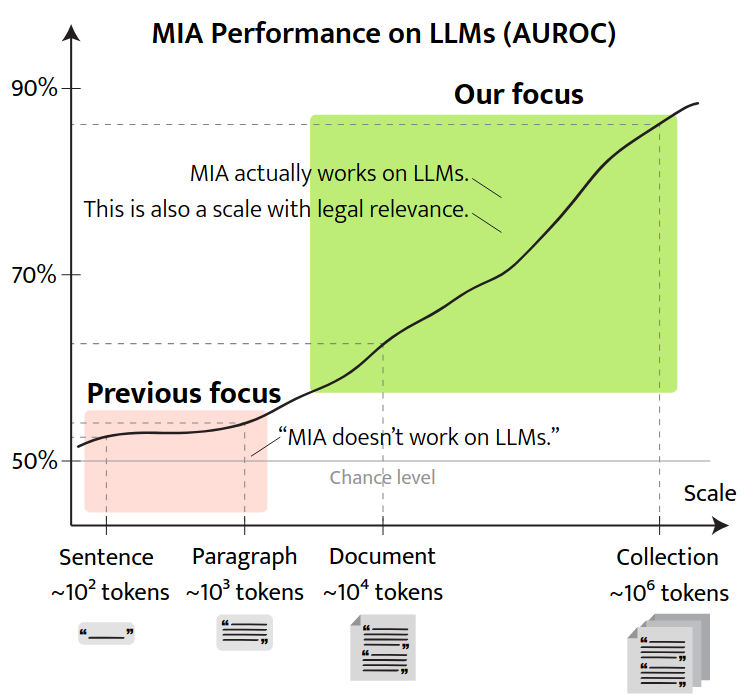

📄 Excited to share our latest paper on the scale required for successful membership inference in LLMs! We investigate a continuum from single sentences to large document collections. Huge thanks to an incredible team: Haritz Puerto, Seong Joon Oh, Sangdoo Yun!

I'm excited to announce that my internship paper at Parameter Lab was accepted to Findings of #NAACL2025 🎉 Huge thanks to Martin Gubri, Seong Joon Oh, and Sangdoo Yun! Amazing team!!

Delighted by this great thread from elvis presenting our new Leaky Thoughts paper! We show that reasoning models pose serious privacy risks when used as personal agents: reasoning traces are a new attack vector. Work led by Tommaso Green during his internship at Parameter Lab!